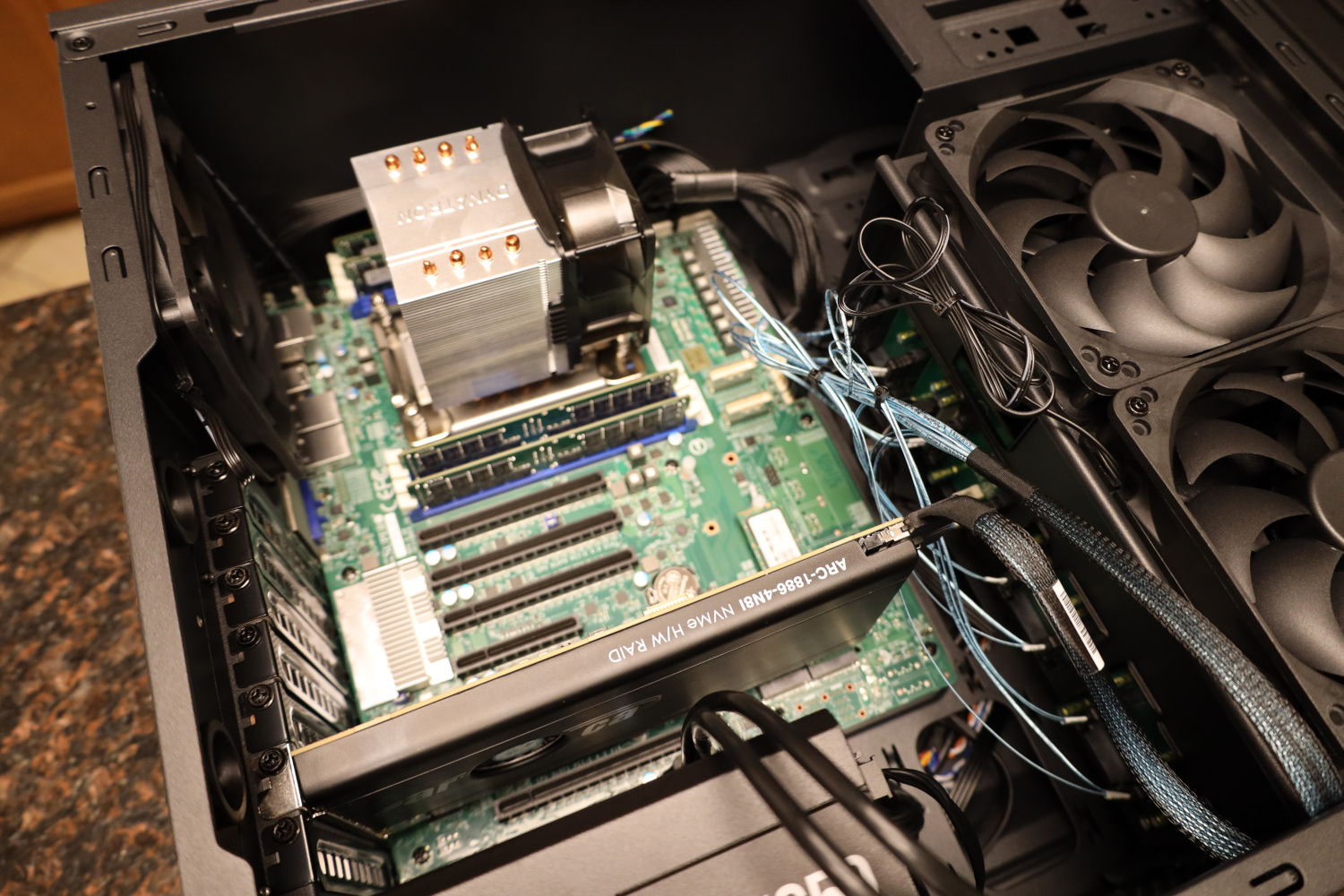

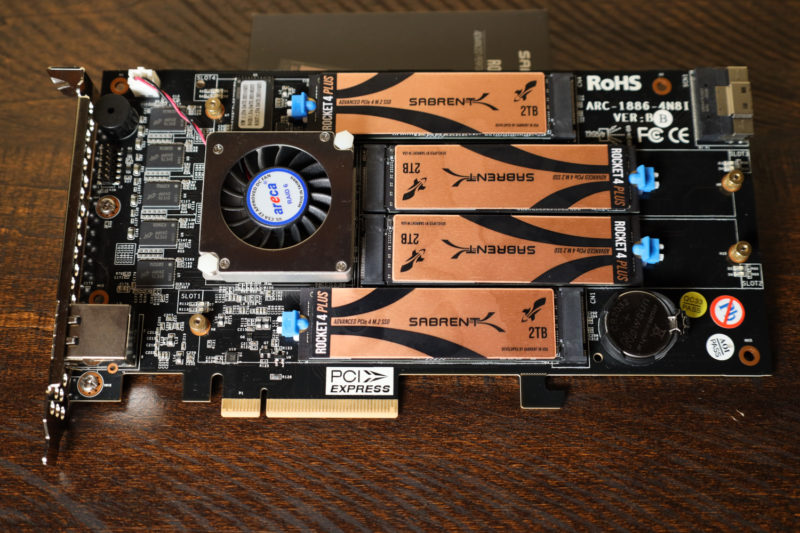

Today I am taking a look at the Areca ARC-1886-4N8i PCIe Gen4 M.2 RAID card. This is a full-height PCIe 4.0 x8 card that is home to 4x PCIe 4.0 M.2-2280 ports, along with a SlimSAS 8i (SFF-8654) connector that can connect either up to 8 SATA/SAS drives, 2 more NVMe drives, or a SAS expander/NVMe switch for even more drives. The ARC-1886-4N8i is a true hardware RAID card with onboard cache, and it supports RAID 0, 1, 3, 5, 6, and 10. This is the second in my series on PCIe M.2 RAID cards; the first was the HighPoint SSD7540. Once again, I am grateful to Sabrent for providing their Rocket 4 Plus 2TB drives for this series. This card is a very different beast than the SSD7540, and I am excited to get to it.

Areca ARC-1886-4N8i

The ARC-1886-4N8i makes an odd first impression. The plastic shroud has a decidedly ‘cheap’ feel to it for such an expensive piece of kit. That feeling is deceiving, though, as I would soon learn.

The plastic shroud on the front of the ARC-1886-4N8i makes the card significantly safer to pick up and handle while leaving a cutout for the fan and NIC. More on those later. For now, I am going to open it up.

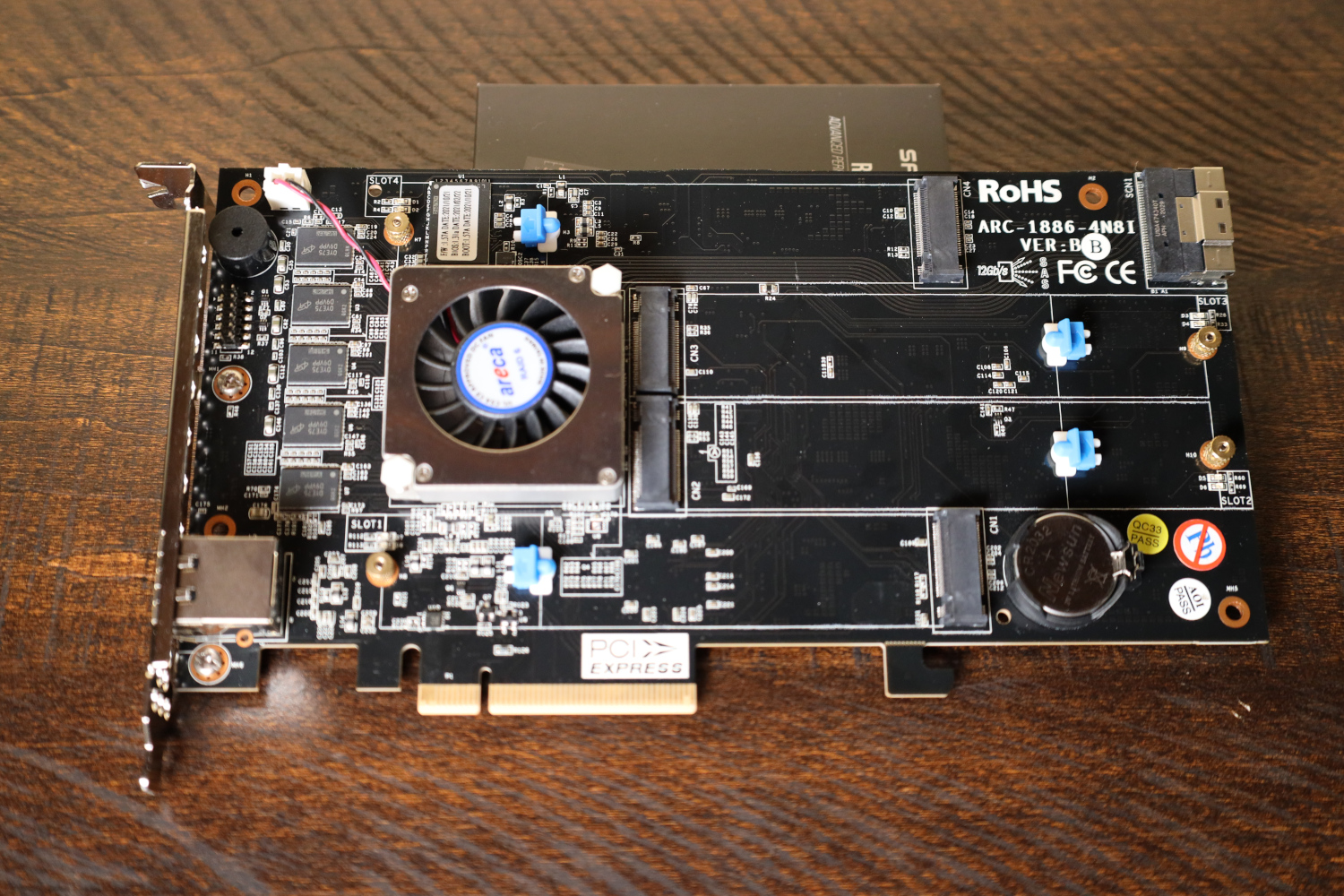

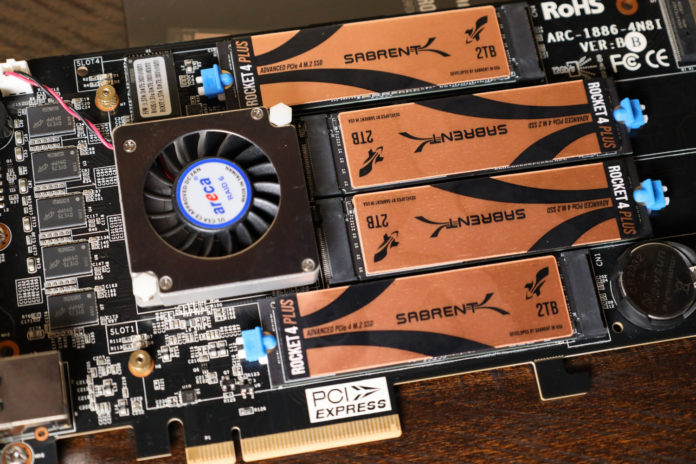

With the front shroud removed, we can see what we are working with. 4 M.2 ports are present, supporting up to 22110-sized drives. Tool-less retention clips are installed in position for 2280 drives, which I used. Also on the front of the card are a line of DRAM chips along the left, a buzzer, and the SFF-8654 connector in the top right. Beneath the fan is the ROC controller, and Areca uses the Broadcom SAS3916 ROC for this card.

The underside of the shroud reveals its method of operation. The fan on the card operates as a blower, with plastic air channels in the shroud directing air over all four M.2 SSDs and then exiting out the rear of the card. This allows fairly effective airflow to the internal M.2 SSDs, and there is even enough room inside the shroud to allow for a low-profile heatsink on each drive if necessary.

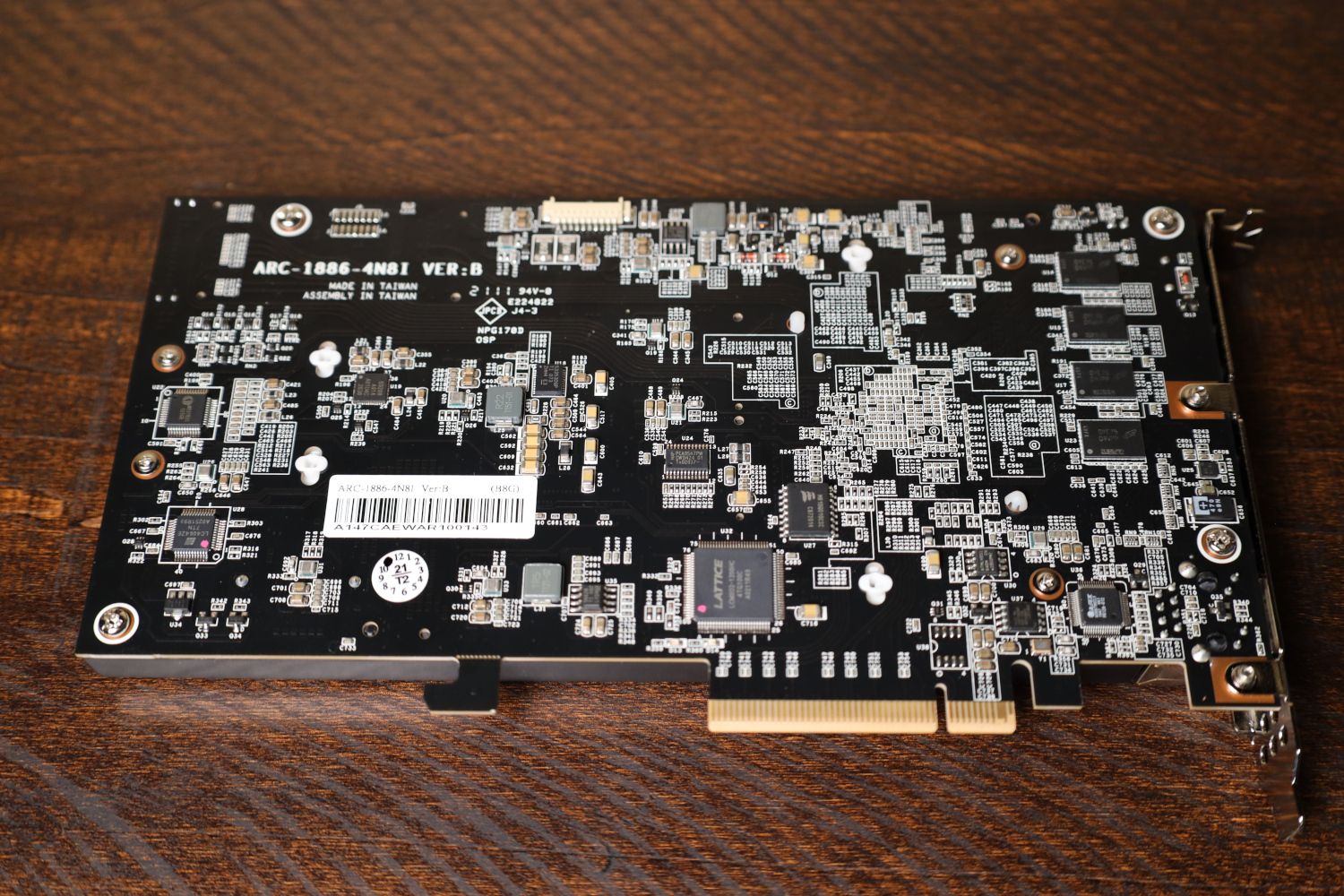

The rear of the card is very dense with surface mount components, and half the DRAM cache is on the back as well.

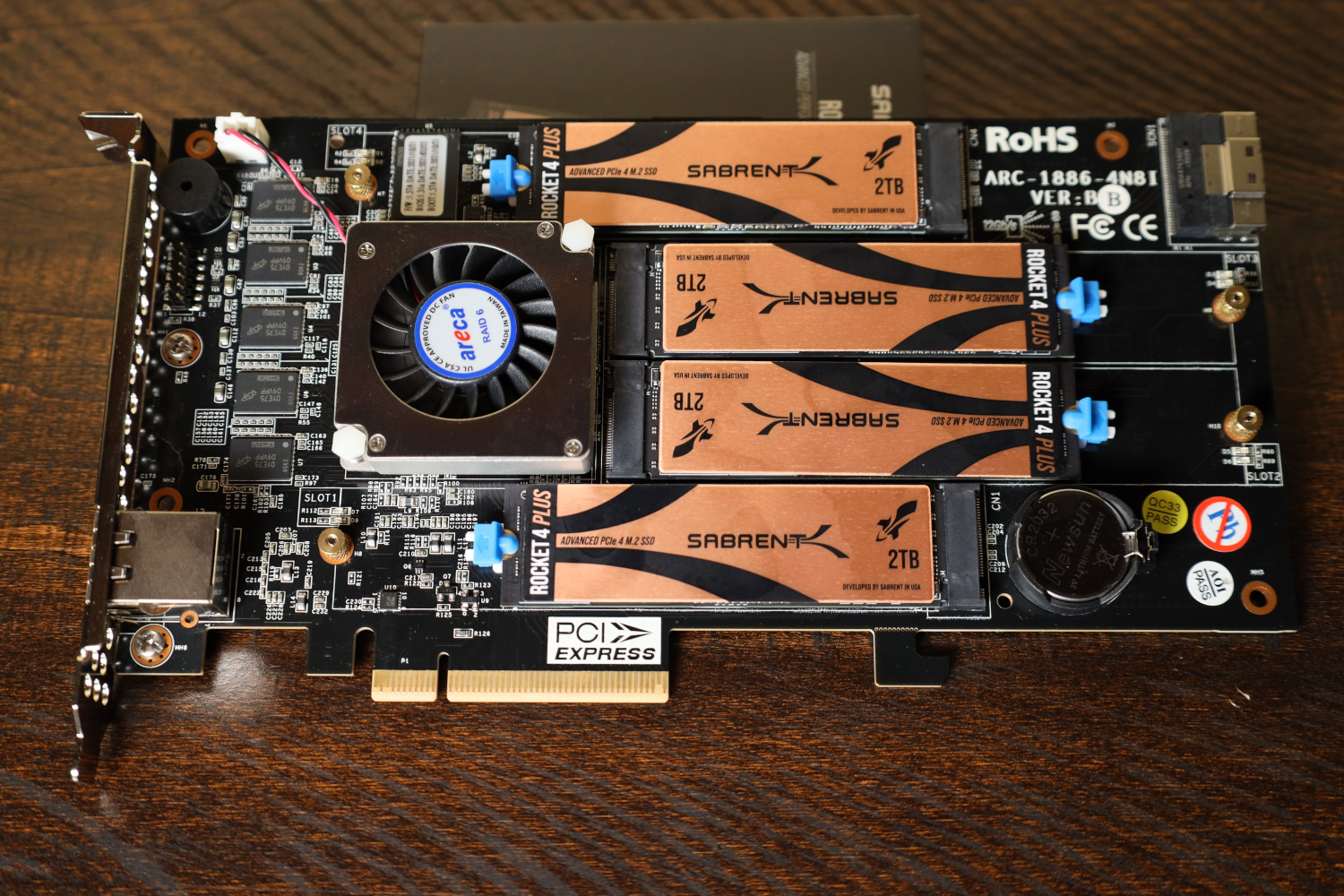

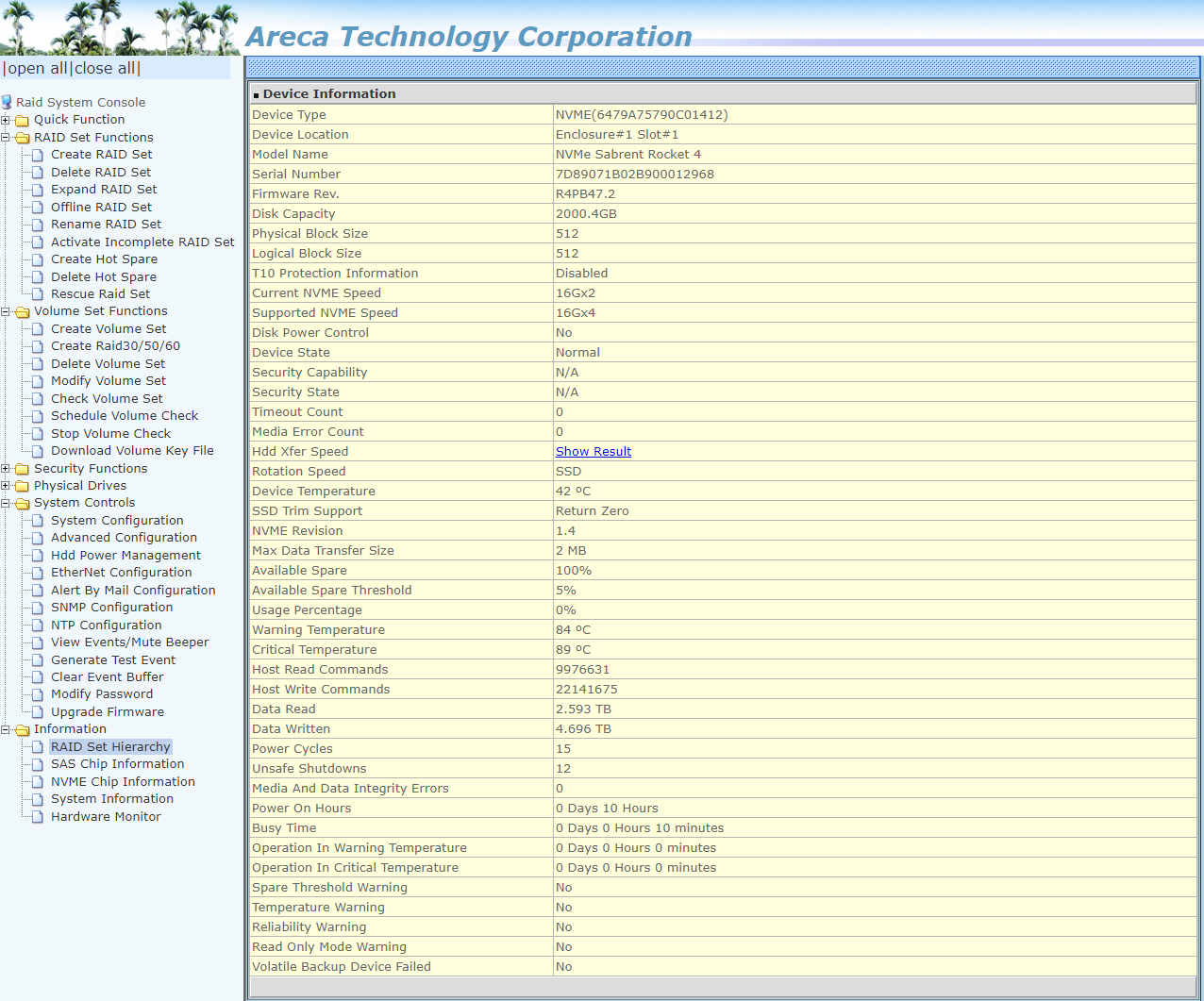

For testing, this card was primarily populated using Sabrent Rocket 4 Plus 2TB drives. Some testing was also done with PCIe 3.0 SSDs installed, specifically Samsung 970 EVO Plus 2TB models. More on that later.

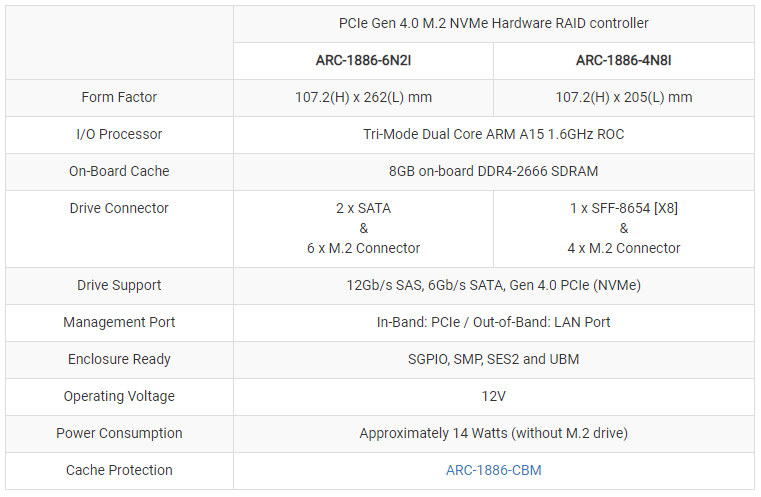

Areca ARC-1886-xNxi Series specs

The ARC-1886-4N8i I have today is part of the 1886-xNxi series, of which there are two models.

The 6N2i card has 6x M.2 ports and supports only a pair of SATA drives, while the model I have on hand gives up 2x of the M.2 ports and the SATA ports in exchange for an SFF-8654 x8 (SlimSAS 8i) connector. Other than these port differences and the physical dimensions of the cards, the specs are identical between the two models.

For me, the 1886-4N8i was specifically chosen for the 8i portion; the server this card is destined for has 8x hot-swap 3.5″ drive bays that are going to be populated by spinning disks for archival storage purposes, and the 1886-4N8i allows for that scenario.

Since this is a full hardware RAID solution, driver support is fairly broad. Windows, macOS, Linux, FreeBSD, unRAID, XenServer, VMware, and more are all supported. Testing for this card was done in Windows, but now that testing is done, it is installed in a VMware ESXi server and is working fine.

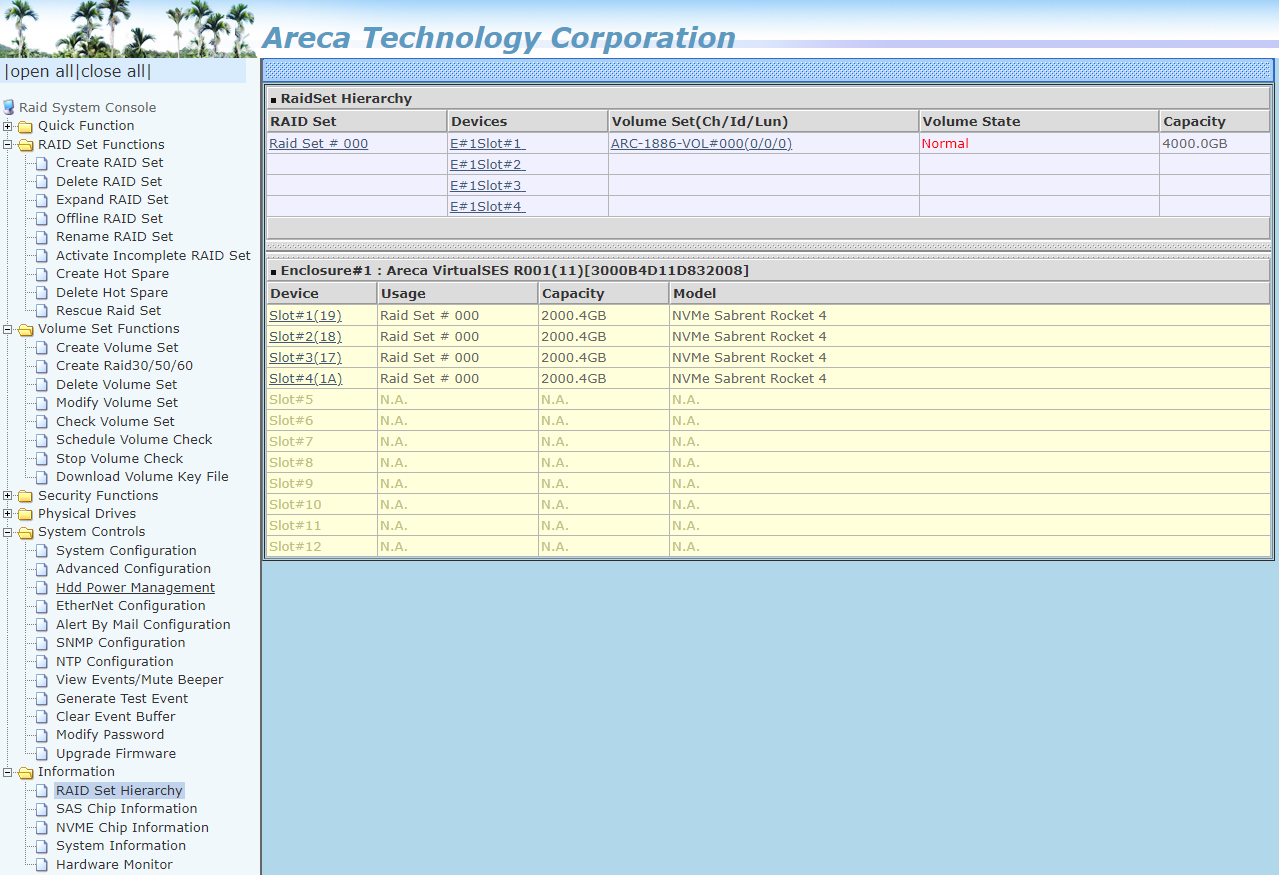

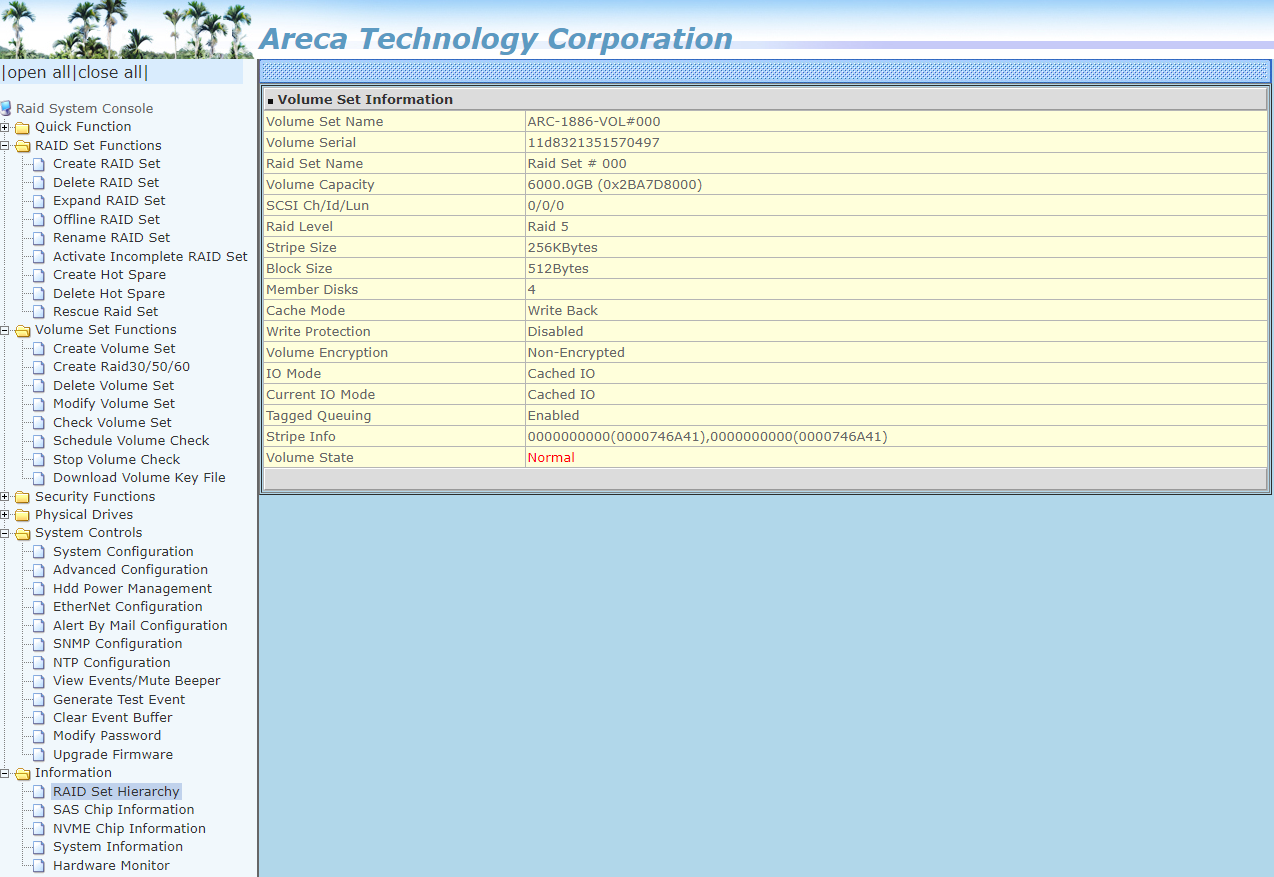

Areca Management

I am a longtime fan of Areca RAID cards for one specific feature: their dedicated out-of-band management interface.

Yes, the Areca RAID card has a network port. On that port runs a webserver, and from that webserver you can control everything about the card.

You can perform all the normal actions one might want on a RAID card: creation and deletion of arrays, configuring hot spares, volume checks, recovery actions, and more.

In addition – and this is my favorite part – the out-of-band management interface can be configured to directly send alerts in the case of problems. Areca cards I set up are configured to send emails to my ticketing system in the case of drive failures, and this alerting functions completely independently of whatever operating system happens to be installed on the server at the time.

Also included in this interface is all the SMART-type information that you could want on your connected drives, including data written totals and spare area availability for SSDs.

Test System Configuration

My basic benchmarks were run using my standard SSD test bench.

- Motherboard: ASUS PRIME X570-P

- CPU: AMD Ryzen 9 5900X (12C/24T)

- RAM: 2x 16GB DDR4-3200 UDIMMs

In addition to my test bench, this card eventually got installed into an EPYC 7302P system on a Supermicro H12SSL-NT running VMware ESXi 7. I did not run any benchmarks in that configuration, but it worked just fine there.

Areca ARC-1886-4N8i Performance Testing

For testing purposes, the ARC-1886-4N8i was equipped with 4x Sabrent Rocket 4 Plus 2TB SSDs and put through a small set of basic tests in RAID 0, 5, and 10. These tests were also run with 4x Samsung 970 EVO Plus 2TB drives installed. As with the SSD7540, the point was not to comprehensively catalog the performance of the Areca card. The performance you encounter will very much depend on which drives you choose to populate the card with, along with the RAID level you choose. I simply wanted to give a general idea of the performance you might encounter.
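As a rough companion to the three RAID levels tested here, the sketch below works out the nominal usable capacity of a 4x 2TB array at each level. It uses the textbook RAID formulas only; the function name `usable_tb` is made up for illustration, and the figures ignore formatting overhead and any space the controller reserves.

```python
def usable_tb(level: int, drives: int, size_tb: float) -> float:
    """Nominal usable capacity in TB for an array of equal-sized drives.

    Textbook formulas only; real arrays lose a little more to metadata.
    """
    if level == 0:
        # Striping: every drive contributes all of its capacity.
        return drives * size_tb
    if level == 5:
        if drives < 3:
            raise ValueError("RAID 5 needs at least 3 drives")
        # One drive's worth of capacity goes to parity.
        return (drives - 1) * size_tb
    if level == 10:
        if drives < 4 or drives % 2:
            raise ValueError("RAID 10 needs an even number of drives, 4 or more")
        # Mirrored pairs: half of the raw capacity is usable.
        return (drives // 2) * size_tb
    raise ValueError(f"RAID level {level} is not covered by this sketch")


# The configuration used in this review: 4 drives of 2TB each.
for level in (0, 5, 10):
    print(f"RAID {level}: {usable_tb(level, 4, 2.0):.0f} TB usable")
```

With four 2TB drives this comes to 8TB in RAID 0, 6TB in RAID 5, and 4TB in RAID 10, which is the capacity trade-off behind the performance numbers that follow.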

Areca 1886-4N8i + 4x Sabrent Rocket 4 Plus 2TB Performance

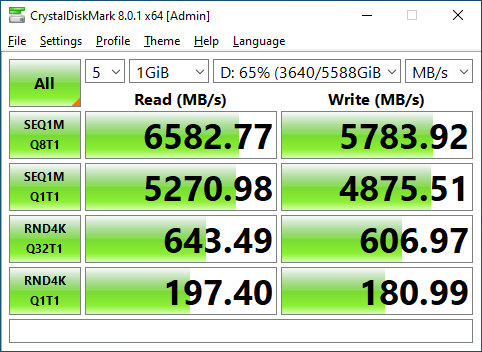

RAID 0 Performance

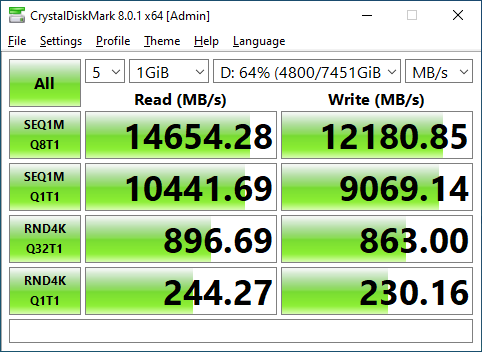

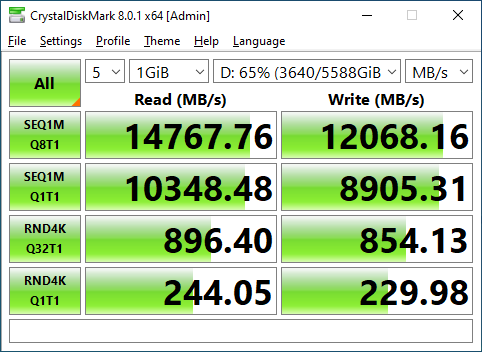

The Areca ARC-1886-4N8i has a PCIe 4.0 x8 host interface, and right out of the gate, the sequential read performance is bumping right up against that limit in RAID 0. Write performance is not far behind, at more than 12,000 MB/s.

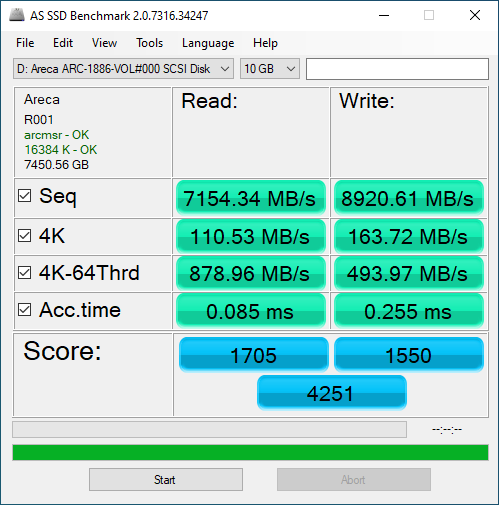

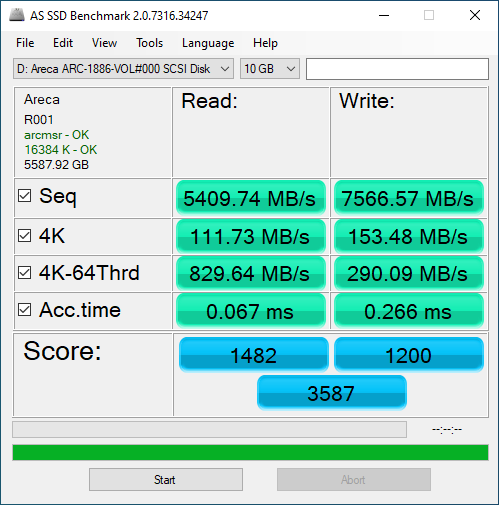

AS SSD, on the other hand, did not favor the Areca ARC-1886-4N8i. Despite operating in RAID 0, the AS SSD score with the 4x Rocket 4 Plus 2TB drives falls significantly short of just a single Rocket 4 Plus drive operating on a native M.2 port on my Ryzen test bench. While the ARC-1886-4N8i performs better than a single drive in sequential and single-threaded 4K random workloads, it is significantly worse at highly threaded 4K random workloads. I do not know if this is some kind of driver interaction or if the card/Broadcom ROC is actually this much slower than a single SSD, but that is what I saw.

RAID 10 Performance

Moving to RAID 10, the Areca ARC-1886-4N8i performs much as it did in RAID 0. Considering that there should be (foreshadowing) 16 lanes of Gen4 SSD on board and the card has a PCIe 4.0 x8 host interface, it is good to see performance hold strong and not lose a single step in RAID 10 compared to RAID 0.

The best that can be said for the AS SSD performance in RAID 10 is that it is unchanged from the performance in RAID 0.

RAID 5 Performance

Finally, we come to RAID 5. This is something you likely would not expect the HighPoint SSD7540 we reviewed to do.

The Areca ARC-1886-4N8i once again gives similar results to what we have seen before. Performance in RAID 5 is nearly identical in CrystalDiskMark to what it was in RAID 0. CrystalDiskMark likes this card and does not care one bit about which RAID level you are working with.

AS SSD loses a small step compared to the RAID 0 performance, but again I am not quite sure what to make of the results on display here.

PCIe 3.0 SSD performance testing with Samsung 970 EVO Plus 2TB

In addition to all the testing with the Rocket 4 Plus drives, I wanted to see how well this card would perform when equipped with PCIe 3.0 drives. Testing on the Gen 3 drives was only performed in CrystalDiskMark.

RAID 0 Performance

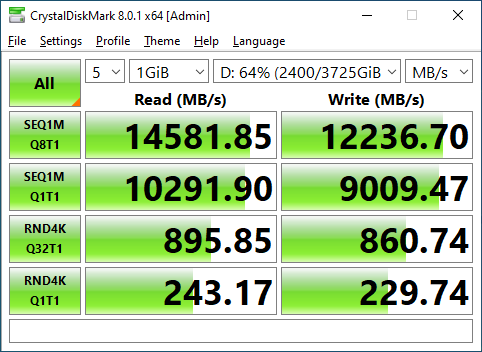

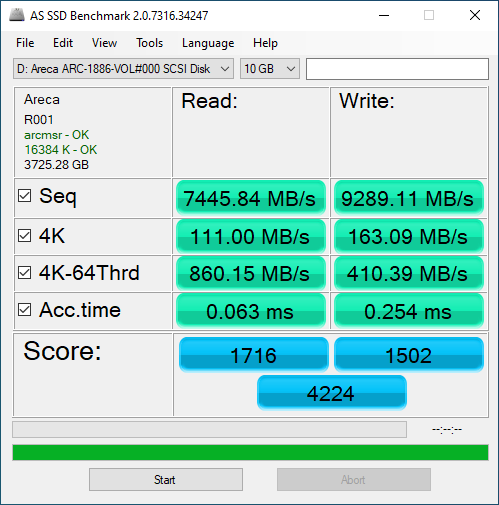

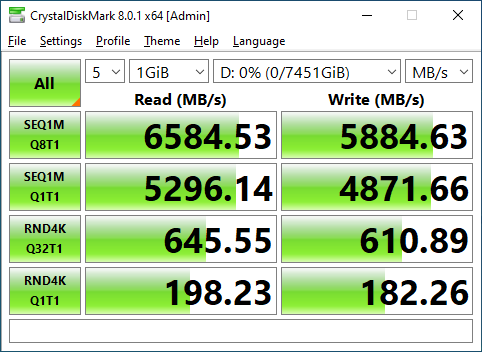

Initially, I was confused by the performance turned in by the Areca ARC-1886-4N8i when fully populated with PCIe 3.0 x4 SSDs. In theory, four PCIe 3.0 x4 SSDs in RAID 0 can fully saturate a PCIe 4.0 x8 link, so performance should have been similar to the Sabrent drives; instead, it is half.

After some digging, the answer became clear: the Areca ARC-1886-4N8i only has a PCIe 4.0 x2 interface for each physical M.2 slot. So when populated with PCIe 3.0 drives, they each individually operate at PCIe 3.0 x2. As a result, the use of Gen4 SSDs is more important on the ARC-1886-xNxi line than might have been apparent from the specs.
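The lane arithmetic behind that result can be sketched in a few lines. This is a back-of-the-envelope calculation rather than a measurement: it uses the nominal PCIe signaling rates (8 GT/s for Gen3, 16 GT/s for Gen4, both with 128b/130b encoding) and ignores protocol overhead, so real throughput will land somewhat lower. The helper name `link_gb_per_s` is made up for illustration.

```python
# Nominal per-lane signaling rates in GT/s for the PCIe generations involved.
GT_PER_S = {3: 8.0, 4: 16.0}
# Gen3 and Gen4 both use 128b/130b line encoding.
ENCODING = 128 / 130


def link_gb_per_s(gen: int, lanes: int) -> float:
    """Theoretical one-direction link bandwidth in GB/s (no protocol overhead)."""
    return GT_PER_S[gen] * ENCODING / 8 * lanes


host_link = link_gb_per_s(4, 8)          # the card's PCIe 4.0 x8 host interface
gen3_expected = 4 * link_gb_per_s(3, 4)  # four Gen3 drives if each slot were x4
gen3_actual = 4 * link_gb_per_s(3, 2)    # four Gen3 drives on the real x2 slots
gen4_actual = 4 * link_gb_per_s(4, 2)    # four Gen4 drives on the real x2 slots

print(f"Host link (Gen4 x8):         {host_link:.2f} GB/s")
print(f"4x Gen3 drives at x4 (hope): {gen3_expected:.2f} GB/s")
print(f"4x Gen3 drives at x2 (real): {gen3_actual:.2f} GB/s")
print(f"4x Gen4 drives at x2 (real): {gen4_actual:.2f} GB/s")
```

On paper, four Gen4 drives at x2 add up to the same roughly 15.75 GB/s as the host link, while four Gen3 drives at x2 top out at about 7.88 GB/s, which is exactly half.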

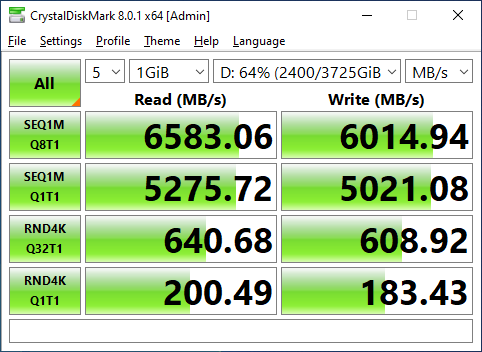

On the upside, when equipped with PCIe Gen 3 drives, performance across all three tested RAID levels remains consistent. These drives are just as fast in RAID 10 as they were in RAID 0.

And RAID 5 presents again essentially the same performance as RAID 0 and 10. We are held back by the individual Gen 3 x2 interfaces for the drives.

Final Words

The Areca ARC-1886-4N8i is a very interesting product to me personally. I am a longtime user of Areca’s earlier cards, like the ARC-1226-8i and the ARC-1883i, for 8 (or more) SATA/SAS drives in a RAID array. The ARC-1886-4N8i can function as a drop-in replacement for both those cards while simultaneously allowing for an onboard 4x M.2 NVMe array.

On the other hand, both the Areca 1226-8i and 1883i were low-profile cards, but the 1886-4N8i is full-height. There are times when I have installed the 1226-8i and 1883i specifically into slots for their low-profile capabilities, so I wish there were a member of the 1886-xNxi lineup that was low-profile; perhaps a 2N8i variant or something. I also wish there were a PCIe x16 version available, but the x8 link is a limitation of the SAS3916 controller.

That is all wishful thinking, though. For now, the card on hand is the ARC-1886-4N8i, and it does exactly what it claims to do. This is a hybrid between a traditional tri-mode RAID adapter and an M.2 RAID card, and it performs well at both tasks. I bought this card sight unseen based entirely on my previous experiences with Areca cards, but after testing, I find myself quite satisfied with my purchase.

Again, at $1,300 this is a niche product. It doesn’t offer that much for most uses.

One can spend that money on an EPYC board and a couple of dumb passive 4x PCIe 4.0 M.2 adapters for higher transfer speeds at lower cost. A cheap single-socket EPYC board can easily host 4 cards, each with 4 M.2 drives at full bandwidth, over 4×16 = 64 PCIe 4.0 lanes.

Yes, these cards can do RAID5/6 and have a bit of RAM for cache, but that is peanuts, compared to what even the cheapest EPYC can do…

So, where could it have value? Only in some space constrained situations, if even that.

I’m always blown away when I see a review of a hardware RAID solution and the reviewer fails to benchmark it in a degraded state – both with a drive missing/disabled and during resilvering operations. It’s hard to plan for failure when you don’t know what failure looks like, and at $1300 I’d like to know that it’s not going to behave pathologically when, not if, there’s a failure.

Looks like the AS SSD tests were 10 GB, which is larger than the 8 GB DRAM cache on the card. That might be why the performance falls off. It would be interesting to see a CrystalDiskMark test of the same size.

I’ve nagged at Areca to make something like this for years. And now that it’s finally out, I feel it’s a bit too little, too late.

But I’ll consider it.

However … the benchmarking on this was highly lacking.

You should hire me as a writer. :P

I thought this was very cool and interesting. Thank you. I’m looking forward to more such PCIe cards to consolidate NVMe SSDs. This was particularly interesting with the out of band and the added slimline SAS8654 8i.

This was a hardware performance review, not a storage performance review. There is a difference.

This would be great for those of us upgrading storage servers and wanting to retain our SAS expanders/spinning rust drives. But at that price it’s easier to just separate the functions into two different cards, eat another PCIe slot, and call it a day.

I’m interested in adding a 20TB M.2 RAID 5 array to a Windows 10 computer used for heavy graphics. Like Will, I’ve been using multiple Areca RAID controllers in several machines for over 15 years and have experienced no issues. I was interested in purchasing the Areca ARC-1886-6n2i card and 6 4TB M.2 cards. I was told by an Areca sales rep who submitted my questions to Areca’s engineers, that given the x8 interface the use of Gen3 vs Gen4 M.2 would not make a performance difference. I’m thankful to Will for doing this review – as it clearly shows there is a difference. I’d like to thank Will for an informative article – I really found it useful.

I also found some of the comments interesting. Since none of the other common RAID cards on the market support on-board M.2 RAID 5, I didn’t think there was really a choice for me. I already have a desktop computer – I’m not looking to replace it with a SuperMicro rack unit – so I did not really understand the alternative that Gertrude Bigone suggested. I also did not understand the comment that John Aasen made as “..too little, too late”.

If the RAID firmware on the Areca 1886 controller is similar to the 1883, RAID 10 volumes are actually [0,1], not [1,0]. I have been able to reconstruct RAID 10 volumes from just 2 drives. As for performance on a degraded RAID 10 volume that is missing 1 drive, in general I have seen little degradation.

In RESCUE mode (during boot), I recovered a RAID 10 volume with just 1 drive remaining, only to have it destroyed by scandisk: I did not react in time, the boot into Windows completed, and the volume was mounted. I have also found that if one interrupts a RAID 10 rebuild by rebooting Windows, the controller cannot be made to resume the rebuild from the F6 startup screen.

Would a DRAM-less SSD address the card’s DRAM as a Host Memory Buffer?

Does this card benefit from the Sabrent’s on-board DRAM buffer?

Is TRIM supported in all RAID configurations?

Why only PCIe x8?

4 NVMe drives need 16 lanes.