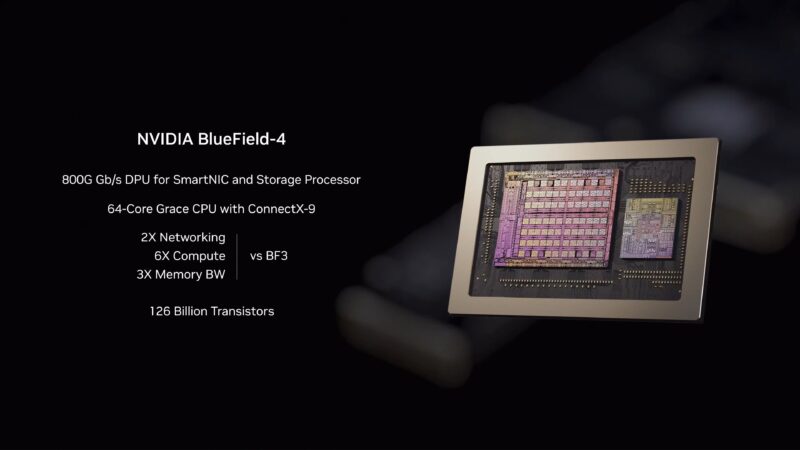

NVIDIA BlueField-4 DPU

The NVIDIA BlueField-4 DPU was also in the Aivres booth. While this looks like a standard PCIe card, there is a lot more going on here. For example, you can see the yellow nozzle caps on the rear of the card, which are for liquid cooling.

In this generation, we get double the network performance with 800Gbps NVIDIA ConnectX-9. That is the smallest increment, however. BlueField-3 was a major increase in memory bandwidth over BlueField-2. BlueField-4 triples BlueField-3’s memory bandwidth.

The compute side is a 6x increase over BlueField-3. In the BlueField-2 generation, the compute was relatively paltry. Now, it is going to rival many servers that are deployed today.

Next, let us get to the HGX B300 rack.

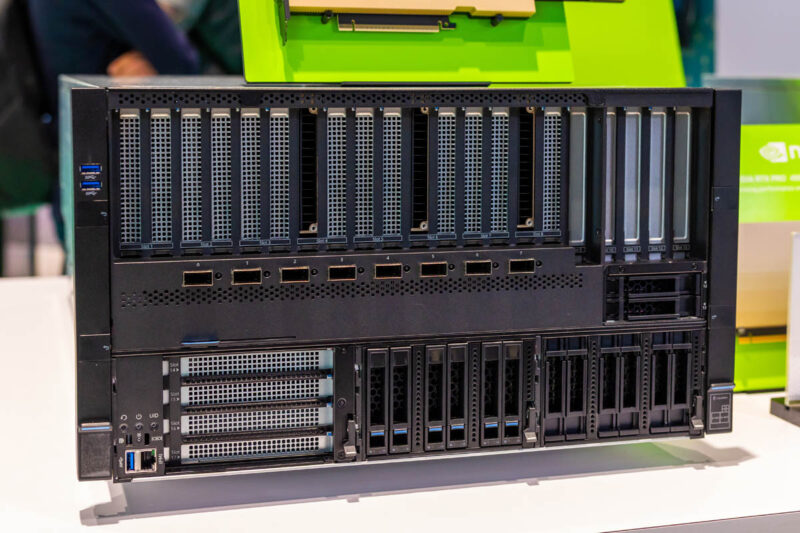

Aivres NVIDIA HGX B300 Rack

While the NVIDIA NVL72 racks are always neat to see, there was an NVIDIA Blackwell Ultra rack in the Aivres booth. This is a liquid-cooled rack based on the 8x GPU per node HGX architecture.

Using liquid cooling, Aivres fits six of these HGX B300 systems into a single rack for a total of 48x GPUs. This system architecture with eight GPUs per node has been around for a long time. We actually reviewed the Hopper version in our Aivres KR6288 NVIDIA HGX H200 Server Review.

Again, you can expect large liquid cooling hoses at the rear of this rack.

This type of rack is useful for many since it is a well-known topology that has been used for generations. Also, this is a lower-density option than the NVL72 racks, so it fits better in data centers that cannot handle higher-power racks.
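As a quick sanity check on the density comparison, here is a minimal sketch of the GPU-per-rack arithmetic. The six-node and eight-GPU figures come from the rack described above, and 72 is the GPU count of an NVL72 rack. The function and variable names are just illustrative, and this does not model power or cooling.

```python
# GPUs per rack for the HGX B300 rack shown versus an NVL72 rack.
# Names here are illustrative; the counts come from the article text.

HGX_GPUS_PER_NODE = 8      # HGX architecture: eight GPUs per node
HGX_NODES_PER_RACK = 6     # Aivres fits six HGX B300 systems per rack
NVL72_GPUS_PER_RACK = 72   # NVL72: 72 GPUs in one rack


def gpus_per_rack(nodes: int, gpus_per_node: int) -> int:
    """Return the total GPU count for a rack of identical nodes."""
    return nodes * gpus_per_node


hgx_rack_gpus = gpus_per_rack(HGX_NODES_PER_RACK, HGX_GPUS_PER_NODE)
density_ratio = hgx_rack_gpus / NVL72_GPUS_PER_RACK

print(f"HGX B300 rack: {hgx_rack_gpus} GPUs")
print(f"Relative to NVL72: {density_ratio:.0%} of the GPUs per rack")
```

In other words, this rack carries two-thirds of the GPUs of an NVL72 rack, which is the lower-density trade-off mentioned above.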

Next, let us take a look at some of the other PCIe servers that we saw in the booth.

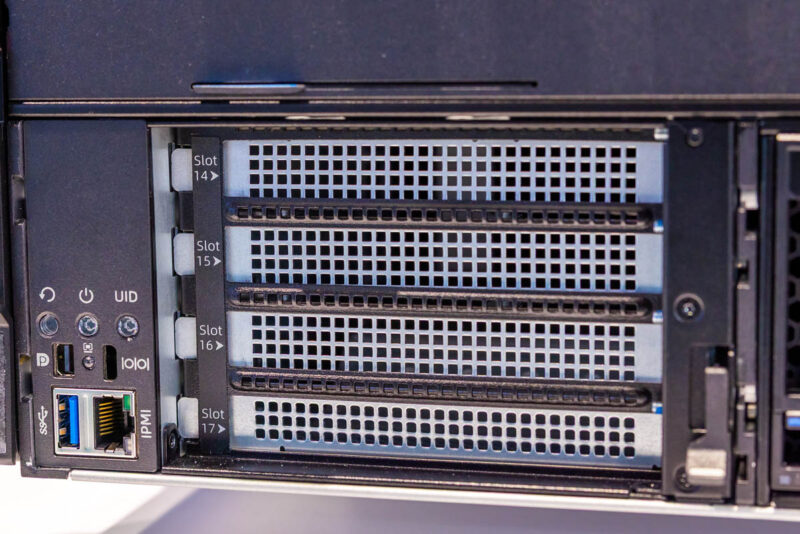

Aivres PCIe Servers

Another neat platform we saw was the Aivres KR6278. This is a server designed for cards like the NVIDIA RTX Pro 6000 Blackwell Server Edition GPUs. With the new generation, the PCIe GPU platform gets a huge upgrade. You can see that this has the NVIDIA ConnectX-8 PCIe switch PCB, so there are eight 400Gbps ports on the front of the server. The traditional PCIe switches are out of the design, which is a major shift in the architecture.

Aivres is taking a different approach, adding more PCIe slots to the front of the system. Usually, we see 1-4 slots on the front, but this system has a lot of PCIe slots, especially given the ConnectX-8 board integration.

Storage is also up front so that you can access most major components for storage, compute, and networking from the front of the chassis.

This may seem like a small point, but there are additional slots on top next to the ConnectX-8 PCB’s slots. In many MGX servers on the market, this area is reserved for power supplies. In Aivres’ design, you get more PCIe slots.

Moving to the rear, we get a very simple design with just the power supplies and fans. Having the power supplies and fans in the rear and everything else on the front is a very different layout from what we usually see.

Next, let us get to the NVIDIA Vera compute modules that we saw in the booth.