A few weeks ago, I was able to sit down with Greg Matson, SVP, Head of Products & Marketing at Solidigm, and Kevin Deierling, SVP of Networking at NVIDIA, to talk about how AI is impacting storage. Solidigm is hosting the video, but I thought I would give our readers a pointer to the discussion. Kevin even put on his OpenClaw/NemoClaw claws at one point.

Storage for the AI Factory Era: A Discussion

If you want to find the interview, it is on YouTube here:

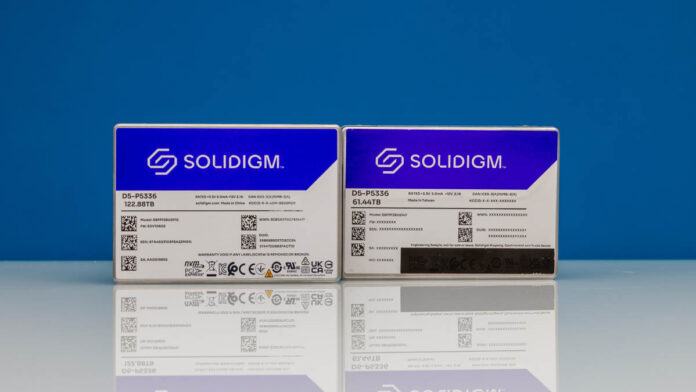

There are some neat tidbits in there about NVIDIA CPX, liquid-cooled SSDs, and NVIDIA BlueField-4 DPUs. Something I learned while doing this is that an entire wafer’s worth of NAND goes into a single SSD today. Here are some of the key topics we covered:

- The Shift to Agentic AI Demands More Storage: While 2025 was focused on AI inferencing, 2026 is projected to be the year of AI “agents” that perform complex, multi-step reasoning. Because these agentic workflows require AI to “think,” plan, and retain information over increasingly large context windows, there is a massive new demand for memory and storage.

- A New “Middle Tier” of AI Storage: To handle this demand, the industry is creating a new tier of storage. Traditionally, systems relied on either extremely fast but expensive Direct Attached Storage (like HBM, which costs $10,000 per terabyte) or very high-capacity but slower Network Attached Storage. Flash storage is being used as a tier between those two extremes.

- KV Cache Optimizes GPU Performance: KV cache has a massive impact on AI Factory performance because it saves a tremendous amount of computation. One example is ingesting large amounts of data once and then reusing it as a source for generating future reports. Since the cache can always be recomputed from the original documents, this tier of AI storage does not need to strictly adhere to legacy rules requiring perfect durability and fault tolerance, which allows for further design optimizations.

- “Extreme Co-Design” and Liquid Cooling: Data centers are heavily constrained by physical space and power limits. To maximize the footprint of productive GPUs, NVIDIA and Solidigm employ “Extreme Co-Design,” working together on everything from thermal management to electrical delivery. Liquid-cooled SSDs are an example of this, and we showed them off both in the Solidigm Liquid-Coolable NVMe SSD Design and when we saw some of the next-gen NVIDIA Vera Rubin racks.

- Future Outlook of AI Factories: In the next three to five years, AI will be integrated everywhere, resulting in factories of all sizes. These will range from small factory robots and cars to massive gigawatt data centers. A single gigawatt AI factory could require up to 25 exabytes of flash storage to be optimally efficient. Ultimately, the key takeaway is that GPUs are only part of the equation.
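To put rough numbers on why larger context windows translate into storage demand, here is a back-of-the-envelope sketch of KV cache sizing for a standard transformer with attention. The model dimensions below (80 layers, 8 KV heads, head dimension of 128, FP16 values) are hypothetical and chosen only for illustration; they were not discussed in the interview, and the function name `kv_cache_bytes` is our own.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_value: int = 2) -> int:
    """Estimate KV cache size for one sequence.

    Each token stores one key vector and one value vector (hence the
    factor of 2) per layer, per KV head. bytes_per_value=2 assumes FP16.
    """
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_value * context_tokens


# Hypothetical model shape, for illustration only.
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128

per_token = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, context_tokens=1)
long_context = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, context_tokens=128 * 1024)

print(f"Per token:        {per_token / 1024:.0f} KiB")
print(f"128K-token cache: {long_context / 2**30:.0f} GiB")
```

Under these assumptions, a single long-context session holds tens of gibibytes of cache, which is why keeping many sessions resident quickly outgrows GPU memory and pushes toward a flash tier.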

During the discussion, Kevin also noted that KV caches are a different tier and type of storage in the data center, fundamentally distinct from other types of storage. KV caches help reduce compute demand in AI clusters, as anyone who has run local models with the KV cache turned on and off can immediately see. These caches need to be high-performance, but from a resilience perspective, if the data is lost, it can be recomputed. That sets this tier apart from most data center storage, which gives up some speed and latency in exchange for resilience.
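The "lost data can be recomputed" property can be sketched as a cache that treats a miss, or a wiped store, as a performance event rather than a data-loss event. This is a minimal illustration and not how NVIDIA or Solidigm implement the tier: `RecomputableCache`, `fake_prefill`, and the in-memory dictionary standing in for flash are all hypothetical.

```python
import hashlib
from typing import Callable, Dict


class RecomputableCache:
    """Cache whose contents can always be rebuilt from the source input."""

    def __init__(self, compute: Callable[[str], bytes]) -> None:
        self._compute = compute           # expensive step, e.g. prefill over a document
        self._store: Dict[str, bytes] = {}  # stand-in for a fast, non-durable tier
        self.recomputes = 0               # how often we paid the compute cost

    @staticmethod
    def _key(prefix: str) -> str:
        # Identical input text maps to the same cache entry.
        return hashlib.sha256(prefix.encode("utf-8")).hexdigest()

    def get(self, prefix: str) -> bytes:
        key = self._key(prefix)
        if key not in self._store:
            # A miss costs compute time, not correctness.
            self.recomputes += 1
            self._store[key] = self._compute(prefix)
        return self._store[key]

    def drop_all(self) -> None:
        """Simulate losing the whole tier, e.g. a failed drive."""
        self._store.clear()


def fake_prefill(text: str) -> bytes:
    # Placeholder for the real, GPU-heavy computation.
    return text.upper().encode("utf-8")


cache = RecomputableCache(fake_prefill)
first = cache.get("quarterly report source documents")
cache.get("quarterly report source documents")   # served from cache
cache.drop_all()                                  # tier is lost
again = cache.get("quarterly report source documents")  # rebuilt from source
print(cache.recomputes, first == again)
```

The design choice to note is that nothing here replicates or journals the stored entries. Because the source text is the system of record, the cache only has to be fast.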

Final Words

While this one isn’t the hands-on hardware content we usually do (though Greg brought a NAND wafer and SSD to the room we were filming in), it might be one you want to listen to in the background. Check it out embedded above. I will likely be doing more interviews and panel discussions as folks have been asking me to do them for some time.