Continuing the series on how ServeTheHome is moving from the cloud to its own hardware, it is time to look at some of the costs involved. For the reasoning behind the latest infrastructure project, see Falling From the Sky – Why STH is Leaving the Cloud. The next step was figuring out what the requirements would be for the next generation ServeTheHome infrastructure. In Part 2 of the Falling from the Sky series, we looked at the requirements for this site, with its WordPress back end and vBulletin forums, and at what was guiding the analysis of the future state architecture. Since we have received many comments asking for cost breakdowns, that will be the focus of this article.

Think the cloud is cheaper? For batch jobs or loads that run under 30% of the time, it works well. We hear in the press how companies save piles of money by moving to the cloud, especially for batch processing. There the model is to spin up elastic compute nodes, upload data to the compute nodes, and generally send back an answer orders of magnitude smaller than the original data set. This works really well in the cloud since the majority of the traffic is incoming (data upload) and the resources are only used for batch processing (under 30% usage). The price analysis below is based on real-world usage of a web site where there is much more outgoing data than incoming data (~30:1 ratio) and one needs 100% up-time. Let’s take a look at the cost breakdown.

Costs of Amazon EC2 Cloud, VPS, Dedicated and Colocation Options

Evolving STH meant moving from a very large shared host to a VPS once shared hosting performance was not cutting it. That worked OK at best, but there were still issues. Moving to the Amazon EC2 cloud made sense. I did note in that earlier piece that a new server was built every few months for colocation.[pullquote_right]Approximately every 60-90 days I build out a dedicated server for STH…. At the end of the day, I always come to the conclusion that I do not want to be responsible for changing hardware in a remote location in the middle of the night. STH September 2011[/pullquote_right] The trigger was never pulled and, even with some intermittent downtime, everything ran smoothly until late 2012, when I/O constraints started showing up in the Amazon cloud and bandwidth costs rose with visitor counts. As with any good decision, it was time to break out the spreadsheets and figure out where to move next: a new Amazon EC2 cloud architecture, VPS, dedicated hosting or colocation.

The trick here is that pricing is very difficult to get. Amazon has its pricing calculator, which is great, albeit a bit scary at first. When one first signs up for the Amazon cloud offerings, one typically does not know such things as how many file I/O requests will be made per month. Although it is complex, having 18+ months of hosting data for the site helped quite a bit. Some of this is estimation, but it is based on real-world LNMP WordPress hosting.

Hosting Cost Comparison Assumptions

A few assumptions:

- Since Amazon has a pretty decent firewall, I am going to make this a requirement for all solutions. This is not going to be a huge firewall solution, but Amazon does have a firewall and it is advisable not to leave servers completely exposed.

- 3 year reserved instances are used with Amazon EC2. The reason for this is that it is generally less expensive to buy 36 month terms than two consecutive 12 month terms.

- Prices had to come from suppliers with sufficient scale. The site has been around since 2009, so providers around for only six months, colo providers with 1/4 cabinet or VPS providers with a server or two were not considered. That did raise costs a bit, but there is a big difference in the viability of a provider that has scale. E.g. a provider that has their own data center is significantly different than a provider with a single server. The goal was not this:

- For colocation costs, we are using actual dollars spent on hardware.

Hosting Cost Comparison Summary

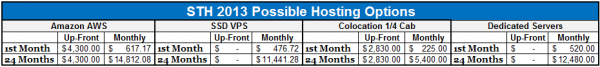

Here is the quick summary of costs we came up with for the various options. We will get to detail afterwards, but here is the breakdown:

Now, here is the interesting thing: the VPS and dedicated server options are really inexpensive on the setup side. While many articles correctly state that cloud offerings do not have up-front costs, the downside is that hourly rates are prohibitive. Case in point: the high-memory extra large instances used for the main site cost $0.45/hour to keep online when they are on demand and $0.07/hour if they are purchased as a 3 year heavy utilization reserved instance. On average, that is $278.16 monthly per high-memory extra large instance when using a heavy utilization reserved instance for web hosting. As we would expect, colocation had high initial costs, but monthly costs were the lowest because one converts what would otherwise be monthly charges into up-front fixed costs.
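To make the hourly-versus-monthly arithmetic concrete, here is a minimal Python sketch of how an always-on instance's hourly rate turns into a monthly bill. It assumes 730 billable hours per month (8,760 hours per year divided by 12), and the function name and parameters are illustrative only. The up-front reservation fee is not stated in the text, so it is left as a parameter that defaults to zero; with that default the result covers hourly charges only and does not attempt to reproduce the $278.16 figure.

```python
# Assumption: an always-on instance is billed for every hour of the month.
HOURS_PER_MONTH = 730  # 8,760 hours per year / 12 months


def effective_monthly_cost(hourly_rate, upfront_fee=0.0, term_months=36,
                           hours_per_month=HOURS_PER_MONTH):
    """Hourly charges for one month plus the up-front fee spread over the term.

    upfront_fee is a placeholder: the article does not give the reservation
    fee, so supply your own value to get a full amortized monthly cost.
    """
    if term_months <= 0:
        raise ValueError("term_months must be positive")
    return hourly_rate * hours_per_month + upfront_fee / term_months


# Hourly rates quoted in the article for a high-memory extra large instance.
on_demand_monthly = effective_monthly_cost(0.45)
reserved_hourly_only = effective_monthly_cost(0.07)

print(f"On demand, always on:       ${on_demand_monthly:.2f}/month")
print(f"Reserved, hourly part only: ${reserved_hourly_only:.2f}/month")
```

Passing a real reservation fee as `upfront_fee` adds the amortized portion, which is where most of a reserved instance's cost sits for a site that never powers down.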

Hosting Cost Comparison Detail by Solution

Doing a straight comparison was difficult, to say the least. Pricing models and included features differed by provider. Many VPS providers use what amounts to an underlying cloud-like architecture, so there are no assurances that your VPS instance is running on a separate physical machine, and one can be at the mercy of shared bandwidth and I/O throughput during peak times. Dedicated providers often had costly add-ons such as firewall appliances, second NIC ports per server and cross connects between servers. Colocation providers varied significantly in their base models plus add-ons, and one needs to do extra diligence on everything from physical access to vendor reputation since they are essentially providing space for your expensive equipment. All told, the cost comparisons below are what I could find that came reasonably close to the original requirements.

Jeff Atwood of Coding Horror, StackExchange and StackOverflow fame recently wrote an article about building his server for colocation and linked to STH. @codinghorror – returning the favor after reading your book, Effective Programming: More Than Writing Code. Looks like he found similar constraints. Nice to know we are in good company.

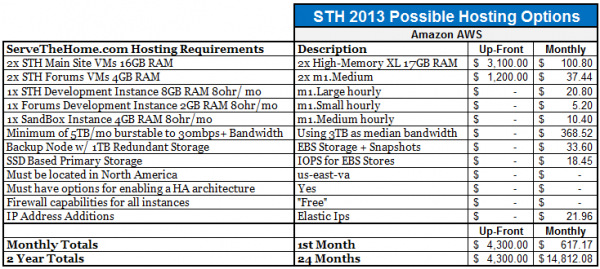

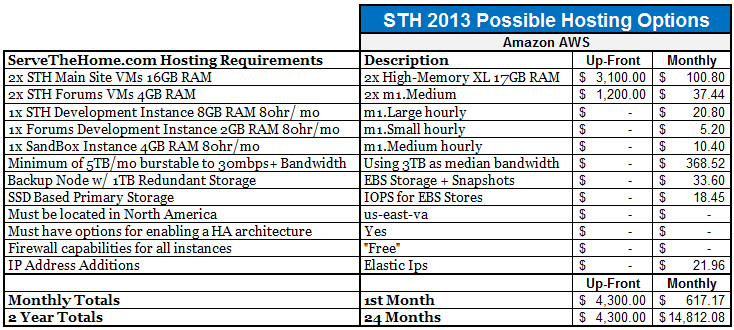

Baseline Case: Build out Amazon EC2 Cloud / AWS Option

The baseline case was to do the familiar, build out on the Amazon EC2 cloud infrastructure. Monthly costs were maintained by using heavy utilization reserved instances. The other side of this is that it led to significant up-front costs for the three year reserved instances. The requirements did not exactly fit the AWS tiers, but they came close enough to where we had a likely configuration. Here is the quick breakdown.

Here we see a few factors in play. First, we do not have a very elastic architecture. We are not using mirrored Amazon RDS databases, HA load balancers and on-demand or spot instances to handle usage spikes. That is a great setup but is a bit complex to maintain and spawning new instances for heavily cached content is not always the easiest option. In fact, we are using a bare minimum number of instances. To keep costs down, these are all housed in a US-East region so there is no inter-zone data transfer.

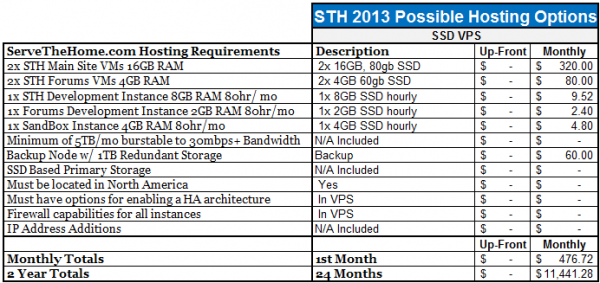

Case 2: Use SSD based Virtual Private Servers

As mentioned previously, we did want to eliminate storage bottlenecks so we priced out the RAM and SSD requirements for the site. Some providers also offered “cloud” like billing solutions for these, such as DigitalOcean mentioned in a comment to a previous post in this series. There were challenges, but costs were also significant.

Here there are a bunch of considerations, such as the fact that the hardware would be shared with other customers. Furthermore, there are no external firewall, load balancer or similar options. Moving to an SSD would likely help disk performance, but it also means that instance storage is generally local, so bringing up an instance after a hardware failure can take much longer. This was not a bad option, but there are so many VPS vendors out there that it is difficult to wade through the evaluation process. Compared to Amazon, more diligence is required, for example regarding the hosting company’s viability.

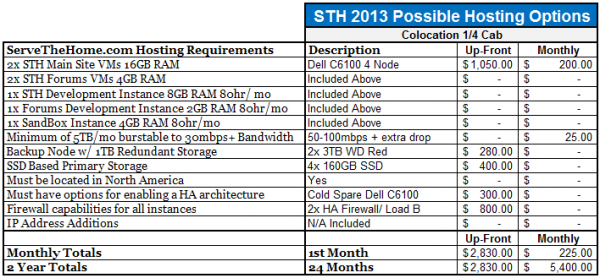

Case 3: Build out Colocated Infrastructure

These costs are very close to what the actual costs were going to be. We originally started with a $300 up-front budget and $50/ mo but that did not work with our requirements. Although the selection process was tedious, we did manage to hammer out a plan that would work for the site.

One can see with the above that this option had the most complexity by far, and it is also considerably over-built. For example, each node has two Intel Xeon L5520 CPUs and 24GB or 48GB of RAM (see a thread on the Dell C6100 XS23-TY3 platform here). This is known to be over-built by a lot but, in the end, it has a 2 year TCO of under $10,000. One thing that makes remote hands a bit less expensive is having infrastructure that can easily withstand a server failure. The cold spare serves as a donor chassis should anything small or large fail, and the entire set of motherboards and drives can be moved into it in under 30 minutes should a component such as the midplane fail. Labor costs were not added and can be significant; however, leaving them out seemed reasonable as there is a fairly large margin by which this solution proves to be the least expensive.
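The trade-off between up-front and monthly costs can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than the spreadsheet actually used: the function names are hypothetical, and the only figures taken from the article are the original $300 up-front and $50/month budget over a 2 year period. The values in the break-even example are placeholders and are not prices from the comparison.

```python
import math


def total_cost_of_ownership(upfront, monthly, months):
    """Up-front spend plus recurring charges over the period, in dollars."""
    return upfront + monthly * months


def break_even_month(upfront_a, monthly_a, upfront_b, monthly_b):
    """First whole month at which option A costs no more than option B.

    Option A is the one with the higher up-front cost (e.g. colocation).
    Returns 0 if A is never behind, or None if A never catches up.
    """
    extra_upfront = upfront_a - upfront_b
    if extra_upfront <= 0:
        return 0
    monthly_saving = monthly_b - monthly_a
    if monthly_saving <= 0:
        return None
    return math.ceil(extra_upfront / monthly_saving)


# Original budget from the article: $300 up front and $50/month, over 2 years.
original_budget_tco = total_cost_of_ownership(300, 50, 24)
print(f"Original budget, 2 year TCO: ${original_budget_tco}")

# Placeholder values only (NOT from the article): a high up-front, low monthly
# option compared against a zero up-front, high monthly option.
print(break_even_month(upfront_a=3000, monthly_a=200, upfront_b=0, monthly_b=500))
```

The same two functions can be fed the figures for any of the four cases to see how long a higher up-front spend takes to pay for itself.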

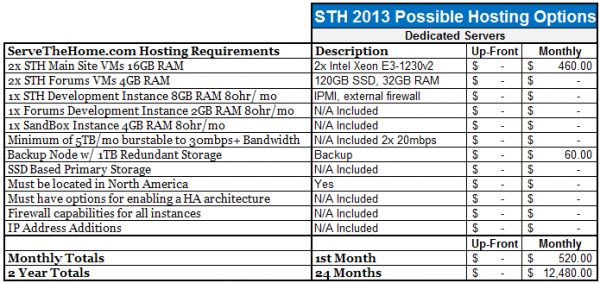

Case 4: Use two Dedicated Servers

The idea of using two dedicated servers was very appealing at first. One prime reason was that servers could be sourced inexpensively from different vendors and added to the infrastructure. Unfortunately, that is still not easy to set up and maintain. There were a lot of add-ons that had to be included to meet the requirements.

Frankly, this option was discarded early in the process because it would be harder to put a firewall appliance in front of the installation, and there were issues with cross connects, single NIC connections to the WAN and other details that made it more costly and harder to manage. Granted, if we had the need for a dual Xeon E5-2690 machine with many SSDs in RAID 1, dedicated servers would make more sense.

Conclusion

In the end, it was time to build out at a colocation facility. It should be noted that the colocation costs are very close to the real costs of doing the project, and there were less expensive facilities out there. More on the vendor selection in the next part of the series, as well as the hardware and the surprises encountered in planning and operationalizing this project.

Wow! Real world usage. Looks like you’re gonna be overbuilt tho.

Can’t believe how many peeps are stuck on cloud is answer for everything.

In your analysis, I see overbuilt infrastructure in one DC could be replicated at a second DC and still cost less than Amazon, even leaving a few $K buffer for remote hands or flights to see equip.

breathless anticipation of your build notes.

are you shipping to the DC or going to hand deliver?

Very nicely reasoned Patrick.

As presented, your choice to abandon EC2 is a no-brainer. Of course if you priced your hardware as new equipment instead of used then the upfront costs would have been dramatically higher.

100% agree dba. With that being said, STH is currently running on a share of an Intel Xeon E5430 CPU for the main site and an Opteron 4122 for the forums. Not exactly new hardware either.

w/e what about if you needed 2x or 3x as many servers at peak and only that many during non peak? you said in your last article that peak is 2x off peak. that’s why cloud is better. amazon even has it graphed on their site.

i like – I think that cost model is scalable if you need only a 2x to 3x (off) peak scale. Web is usually 10 or more hrs on peak so still need a lot of compute time for xtra Ec2.

One aspect with the cloud is that if you go from 1 user to 100m users in two months it is hard to plan for that type of growth without the cloud.

which provider are you going w/? I thought colo costs vary often. Did you do a vendor selection? Only US or did you look at global DCs?

Amazon’s other benefit is you can spin up globally without needing to contract multiple datacenters – manage hardware globally and such.

Can’t wait to hear more.

Accepting sponsorship for the colo costs? We might be interested.

Can I just put 1 server at my house?

This is for a dating mobile app similar to Tinder.

One thing I worry about is possible DOS.

In the case of such an app, I think its less of a threat.

However, how is this guarded against?

In colo, what firewall etc would you put up, and how might one do that at home-office?

Thanks!

In initial stages of product release, before moving onto a colo, what about:

Putting 1-2 server at a home-office in Israel, and possibly 1-2 in home-office in Los Angeles or Austin, managed by family there?

This is for a dating mobile app.

One thing I worry about is possible DDOS.

In the case of such an app, I think its less of a threat.

However, how is this guarded against?

In colo, what firewall etc would you put up, and how might one do that at home-office?

What other bandwidth issues might happen at home-office? Say you are in Kansas and have gigabit internet (nominally 1Gb up/down), shouldn’t that serve you pretty well for the cost of 1 home line (very inexpensive ~$100/month)? Actually, I see I have just 30Mb/1Mb down/up here, but I can look into getting more up. My brother has a home office in Austin with very large bandwidth (pro gamer gaming-house)

Thanks!

DDoS attacks used to disrupt and disable my brother’s home ISP service and he’d have to get a new modem every time. I’m not sure if that was because the DDoS would be ongoing, or because the ISP would just shut down the line after a few minutes of the attack.

In my previous comment, I meant to ask about what differences there are in ISP service at home, office, colo, EC2, etc.

Would having firewall appliances at home likely help thwart the attack?

Hi Gary – may be a better question for the forums where many have experiences they can share: http://forums.servethehome.com