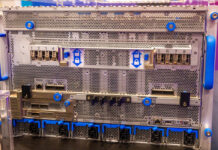

Building a dual processor (DP) server is not difficult, but there are a few things to consider for anyone building one from scratch. I was building a DP platform for a few reviews and decided to take the opportunity to take some pictures. This is a quick build guide to a dual Xeon system with three add-in 10Gb NICs and three (additional) eight port controllers.

Chassis and Cooling

At first I was planning to use a Supermicro SC933T-R760B that I purchased and reviewed here in early 2011; however, I did not want to be limited to using 6 of the 7 expansion slots. How many of the motherboard's slots a chassis actually exposes is one thing to double-check when selecting a chassis and motherboard combination.

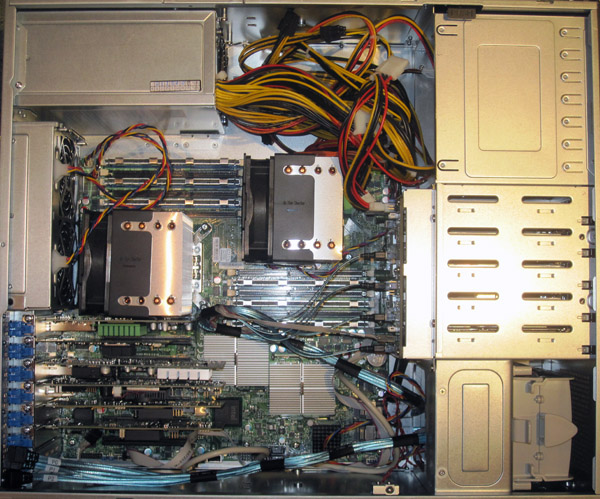

I was also thinking of building a 2U box, but I wanted enough room to house full-height cards, which necessitated either a 3U or 4U chassis. The choice came down to a spare Norco RPC-470 or a Supermicro SC842TQ-665B that will be reviewed on ServeTheHome shortly. I ended up using the SC842TQ-665B because the included power supply had dual 8-pin power connectors.

Airflow is extremely important for a few reasons. First, inadequate airflow can cause overheating and system stability issues. Second, and often forgotten, is that when a system heats up from inadequate airflow, most servers spin fans faster to compensate. This generates additional noise from the higher fan RPMs and also increases fan wear and power consumption. Faster revolutions require more power to spin the fan and create more stress on the bearings and fan motor. The bottom line is that picking a case with excellent airflow should be a key configuration consideration.
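To put a rough number on the fan power point, the fan affinity laws say that for an idealized fan, power draw scales with roughly the cube of rotational speed. The sketch below applies that approximation. The 3 W at 5,000 RPM baseline is a hypothetical figure chosen for illustration, not a measurement from this build, and real fans will deviate from the ideal curve.

```python
def fan_power_watts(base_power_w: float, base_rpm: float, new_rpm: float) -> float:
    """Estimate fan power at new_rpm using the cube-law approximation.

    Idealized fan affinity law: P2 = P1 * (N2 / N1) ** 3.
    """
    if base_rpm <= 0:
        raise ValueError("base_rpm must be positive")
    return base_power_w * (new_rpm / base_rpm) ** 3


# Hypothetical baseline: a chassis fan drawing 3 W at 5,000 RPM.
BASE_POWER_W = 3.0
BASE_RPM = 5000.0

if __name__ == "__main__":
    for rpm in (5000, 6000, 7500, 10000):
        watts = fan_power_watts(BASE_POWER_W, BASE_RPM, rpm)
        print(f"{rpm:>6} RPM -> ~{watts:.1f} W per fan")
```

Under this approximation, doubling fan speed costs about eight times the power per fan, which is why a chassis that stays cool at low RPM pays off across every fan in the system.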

Power Supply

Unlike standard uniprocessor (UP) setups, DP systems today commonly have pairs of 80W or 130W CPUs. That means under heavy processing load, they will use more power than UP systems. Add to this a myriad of expansion cards (8-20W each if storage controllers), potentially dual IOH36 chips (24W each), cooling fans, and drives, and using a sub-500W PSU is out of the question. For dual CPU systems with 20 or more drives, larger power supplies are of course required. The system being built will house two 80W CPUs and connect to the majority of its drives through SFF-8088 external cables, so the 665W PSU in the SC842TQ-665B was more than sufficient.
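A quick way to sanity-check a PSU choice is to add up worst-case component draw and compare it against the PSU rating with some headroom. The sketch below uses the CPU, IOH36, and per-card figures quoted above. The fan, drive, and motherboard/RAM numbers, the 80% load ceiling, and treating all six add-in cards at the 20W top of the storage controller range are assumptions for illustration, not measurements.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Load:
    name: str
    watts_each: float
    count: int

    @property
    def total(self) -> float:
        return self.watts_each * self.count


def total_watts(loads: Iterable[Load]) -> float:
    """Sum the worst-case draw of every component."""
    return sum(load.total for load in loads)


def fits_psu(loads: Iterable[Load], psu_watts: float,
             max_load_fraction: float = 0.8) -> bool:
    """True if the total draw stays under the chosen fraction of the PSU rating."""
    return total_watts(loads) <= psu_watts * max_load_fraction


BUILD = [
    Load("CPU, 80 W TDP", 80, 2),             # figure from the article
    Load("IOH36", 24, 2),                     # figure from the article
    Load("Add-in card, worst case", 20, 6),   # top of the 8-20 W range; applied to all six cards (assumption)
    Load("Fan", 5, 6),                        # assumption
    Load("Internal drive", 10, 4),            # assumption
    Load("Motherboard, RAM, misc", 60, 1),    # assumption
]

if __name__ == "__main__":
    for load in BUILD:
        print(f"{load.name:<28} {load.count} x {load.watts_each:>4} W = {load.total:>5} W")
    print(f"Estimated total: {total_watts(BUILD)} W")
    for psu in (500, 665):
        verdict = "OK" if fits_psu(BUILD, psu) else "too small"
        print(f"{psu} W PSU at 80% ceiling: {verdict}")
```

With these assumed figures the 665W unit clears the estimate while a 500W unit does not, which lines up with the reasoning above. Swap in measured numbers for your own parts before relying on the result.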

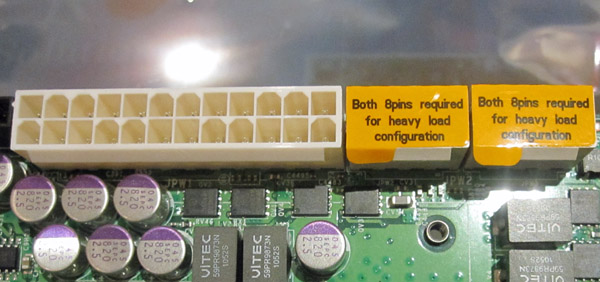

One important note for those not familiar with multi-processor systems is that many consumer PSUs are not suitable for multi-CPU setups because they have only one four- or eight-pin CPU power connector. Dual-CPU motherboards generally require dual 8-pin CPU power connectors, so users looking to build a DP system, or looking at potentially upgrading from a UP system in the future, should look for a server PSU (versus a consumer PSU) with dual 8-pin connectors even if redundancy is not required. This saves a lot of troubleshooting headaches and avoids the need to re-wire a setup.

Installation

First, I generally suggest taking stock of the case’s features. Typical server motherboards and cases have front panel connectors with fewer USB, FireWire, and audio ports, and instead optimize for things like NIC activity LEDs and overheat lights. Beyond this, planning which cables are needed is an important step to reduce the need for later re-work.

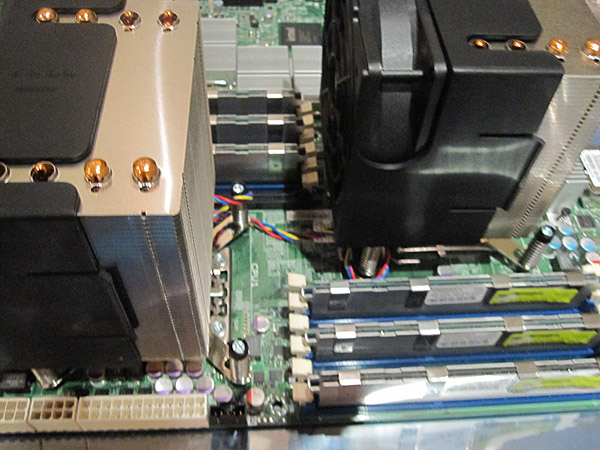

Depending on the type of cooler used and the motherboard mounting points, one may opt to install processors in sockets, heat sink mounting hardware, and heat sink assemblies prior to installing the motherboard in the chassis. If one does install heat sinks outside of the chassis, it is important to remember which direction air will be flowing and align the heat sinks and (if required) fans to the server case’s airflow design. Normally a server chassis will draw air from the front of the chassis and exhaust air through the rear panel. Many consumer cases have different exhaust points, so there is no straight uni-directional airflow design.

Next it is time to install the motherboard. I generally test fit the motherboard and add the motherboard mounting points accordingly. After all components are installed, it becomes much more difficult to add or move a mounting point. Furthermore, due to the sheer size of many DP motherboards (often Extended ATX or EATX) and the number of installed components, it just makes sense to take one’s time test fitting everything.

After the motherboard is installed, I generally connect the front panel connectors. Sometimes these are difficult to connect after everything else is wired.

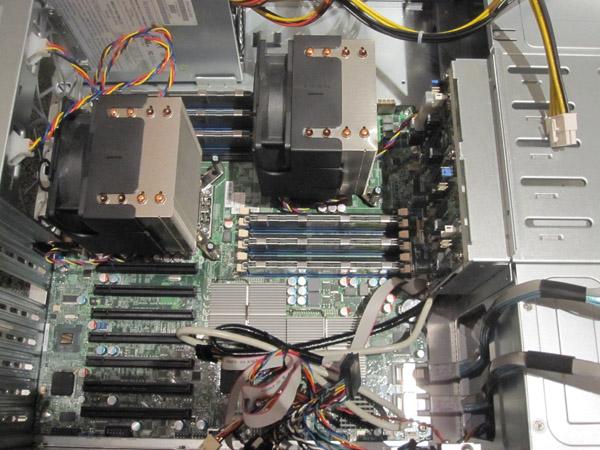

Next I tend to install add-in cards such as additional NICs and storage controllers.

After this is complete, it is time to wire drives. Be sure to use the correct cables for the backplanes and any external enclosures. See the SAS/SATA Connector Guide for an annotated pictorial guide. One note: I prefer to mount drives in their hot-swap trays, then install the server into the rack, and only then insert the drives as the very last step. Hard drives are more susceptible to premature failure due to shock than almost anything else in a system, so it makes sense to load them at the last moment. If not using hot-swap bays, this is much more difficult because the power and data cables will need to be connected prior to closing the enclosure.

I next like to wire up all fans. Ideally, the enclosure includes 4-pin fans and the motherboard includes enough 4-pin connectors for the fans in the chassis. This allows the chassis to spin up and down fans in response to heat generation.

Finally, connect the ATX 24-pin connector, the two 8-pin CPU connectors, and any PCIe power connectors as necessary (discrete GPUs are often included in servers doing GPU compute tasks). I generally do this last as these are the largest cables.

In the event you were wondering, the Supermicro X8DTH-6F has two onboard Intel gigabit NICs and an onboard LSI SAS2008 controller. In the slots are a dual port fibre 10Gb Ethernet card, a dual port Mellanox InfiniBand card, a single port (copper) Intel 10GbE card, an Areca 1680LP, an LSI 9211-8i, and an IBM ServeRAID M1015. That is a lot of connectivity in a single 4U box, all made possible by the dual IOH36’s massive number of PCIe lanes. This is a fairly decent test rig for the time being.

One last consideration that needs to be taken into account with the above is cable management. Poorly placed cables can cause two issues. First, they can impede proper airflow, causing temperatures to remain higher than they otherwise would. Second, and in really bad cases, a poorly placed cable can migrate over time into the path of fan blades, causing damage to the wire. On this site, I would generally give myself a D or an F in terms of cable management in pictures. The simple reason is that I change hardware weekly for reviews, so doing a very neat cable management job is impractical.

Conclusion

Building a dual processor server does require some forethought, as power and airflow requirements are generally higher. Dual CPU systems are not necessarily harder to build than single CPU servers, but I would not recommend that anyone try to build a DP server for their first build. Dual processor platforms do allow for lots of processing power, RAM, and connectivity, which is desired in many applications.

What tower coolers are you using? Also, some of the higher wattage consumer power supplies have dual 8-pin CPU power. So much expandability and better air flow makes me rethink my usage of a Z8NA-D6C board for my DP computer.

Very true on some high-end PSUs. On the other hand, those are a bit harder to find.

The heatsink/fan units are SNK-P0040AP4 that I bought off Amazon.

I will have an X8DTH-6F review up next week sometime.

Hey Patrick, spotted a couple of things. The top picture has the CPU HSFs set up in push, forcing airflow through the heatsink to the rear of the case, but in the later images you either have the fans in traction mode or the wrong way around?

Also, on PSUs: a stiff word of warning for those looking at using consumer ATX style PSUs. Avoid those that have large 120 or 140mm fans in the side. These are, in just about every case, too slow and weak to pull airflow against even the weakest vacuums found in just about every rack case. Always aim for PSUs that have a smaller and faster 80mm fan in the front of the PSU case that can achieve a better traction effort. I actually go to the point of modding my PSUs to run external fan feeds (fed off the MB so they can be speed controlled) or bypassing the manufacturer's fan controllers inside the unit. Too many are made to be quiet rather than to run cool.

Another area missed a little is cooling of the DIMMs. I have seen many a server built by others with DIMMs running at stupid temps, and the builders wondering why their systems are unstable or failing.

Hi, I am seeing the same mistake. The airflow direction changes between the first image and the fourth. Any response?

Thanks

A bit of an old post (almost 5 years old now) but I believe the first picture is a stock picture. The subsequent pictures are of the actual build and the fan direction is pulling airflow through the heatsinks.