The YuanLey AQC113-X1 10Gbase-T is a relatively inexpensive PCIe NIC. Since we have been reviewing so many more switches for our Ultimate Cheap 10GbE Switch Buyers Guide, we thought we would look at a few NICs as well. This one was cheap, so we bought it. As the name suggests, this is a Marvell Aquantia AQC113 NIC.

If you are looking for one of these cards, here is an Amazon affiliate link to where we purchased ours.

YuanLey AQC113-X1 10Gbase-T PCIe Network Card Overview

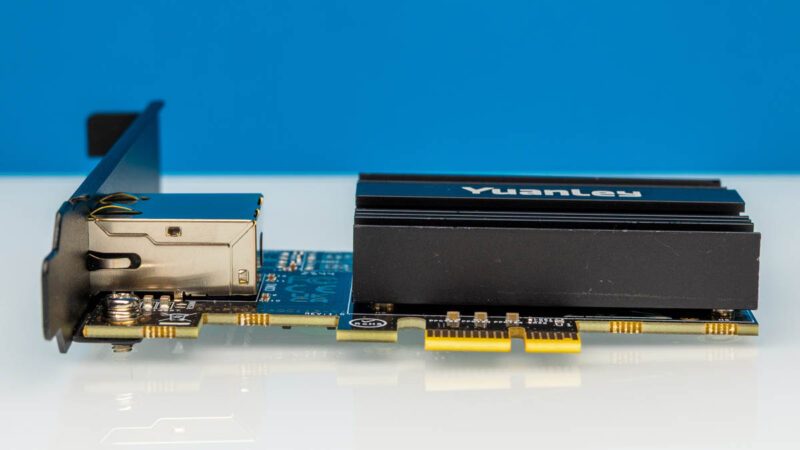

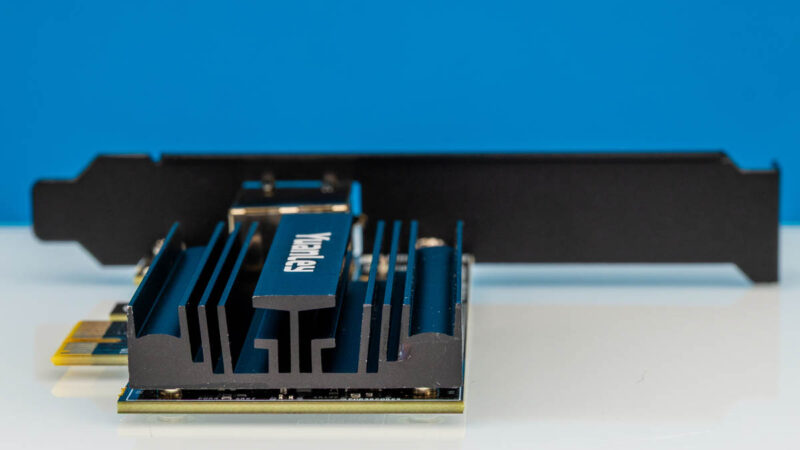

The card is relatively small and is designed to fit almost anywhere.

It has a single port and comes with both full-height and low-profile brackets.

Since this is a Marvell AQC113-based card, it can run in a PCIe Gen4 x1 slot. We have seen older designs that were x4 so that they could run on Gen3, but the disadvantage is that a x4 card often will not fit if all you have is a Gen4 x1 slot.
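To make the lane math concrete, here is a rough back-of-the-envelope sketch. It uses the standard per-lane signaling rates (8 GT/s for Gen3, 16 GT/s for Gen4) and 128b/130b encoding, and it ignores PCIe packet overhead, so real usable throughput will be somewhat lower. The function names are ours for illustration only.

```python
# Per-lane raw signaling rates in GT/s for PCIe Gen3 and Gen4.
GT_PER_S = {3: 8.0, 4: 16.0}

# Gen3 and Gen4 both use 128b/130b line encoding.
ENCODING = 128 / 130


def link_gbps(gen: int, lanes: int) -> float:
    """Approximate link rate in Gbps after encoding, before packet overhead."""
    return GT_PER_S[gen] * ENCODING * lanes


def fits_10gbe(gen: int, lanes: int, target_gbps: float = 10.0) -> bool:
    """True if the link has at least target_gbps of post-encoding bandwidth."""
    return link_gbps(gen, lanes) >= target_gbps


if __name__ == "__main__":
    for gen, lanes in [(3, 1), (3, 4), (4, 1)]:
        rate = link_gbps(gen, lanes)
        verdict = "enough" if fits_10gbe(gen, lanes) else "not enough"
        print(f"Gen{gen} x{lanes}: {rate:.2f} Gbps, {verdict} for 10GbE")
```

Under these assumptions, a Gen3 x1 link comes in below 10Gbps, while a Gen4 x1 link has room to spare for a single 10GbE port.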

The heatsink is relatively large. The card consumes something like 3.5-5W, so the heatsink is more than ample for that level of power consumption.

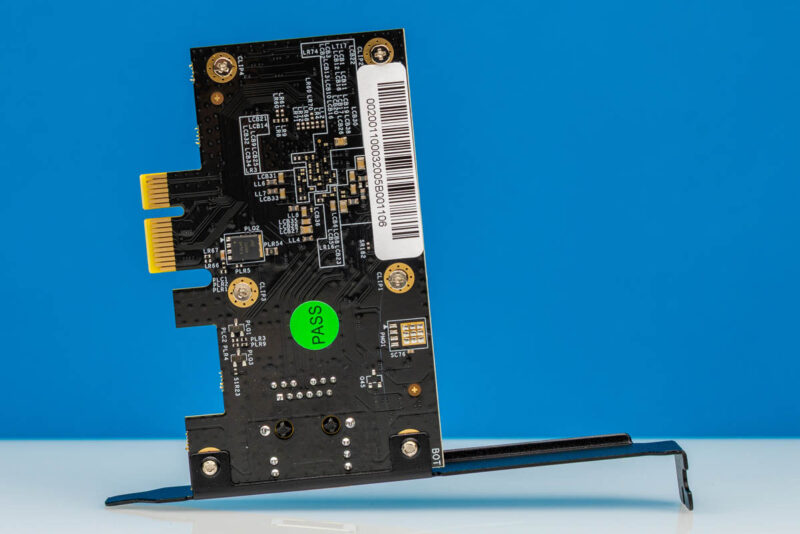

Here is the back of the card.

Honestly, this is a very simple device, and perhaps that is the point at under $55.

Performance

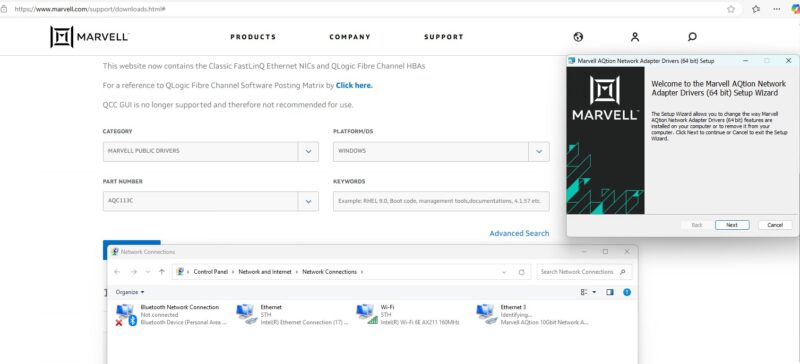

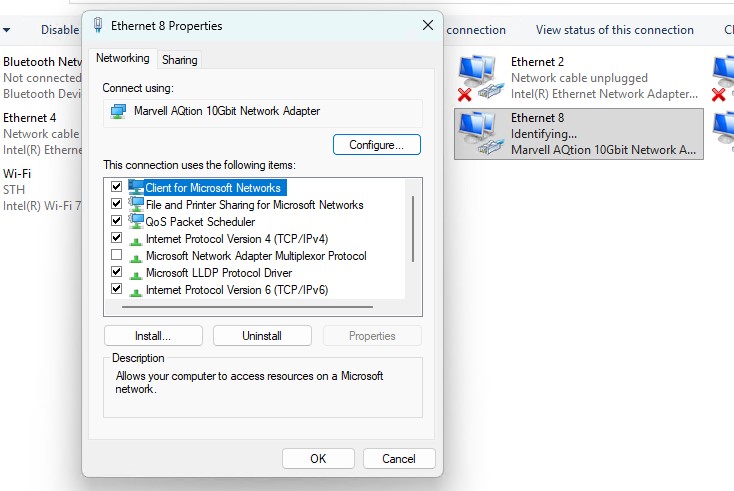

To be clear, the Marvell AQC113 has been out for some time. As a result, drivers are usually built into the OS these days, so the card should work once you plug it in. If not, you may have to download drivers.

Still, once it is installed, you will have a Marvell AQtion 10Gbit network adapter.

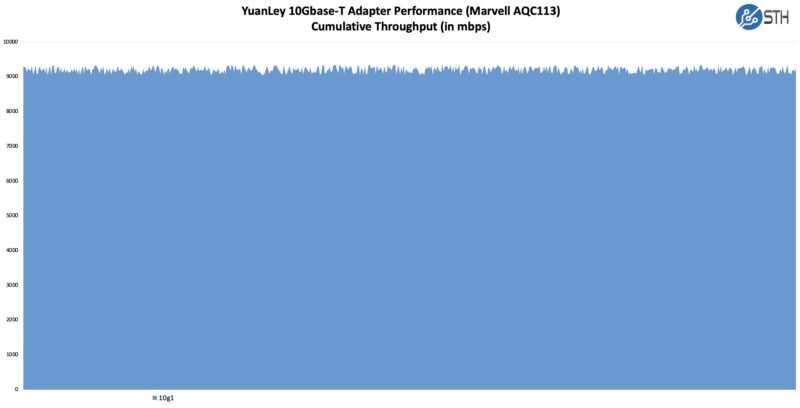

We are using our simple iperf3 setup to test these:

Overall, we got 9-10Gbps from this card, which is about what we would expect in this test setup.
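For anyone who wants to run a similar check, iperf3 can emit a JSON report with its `-J` flag. The snippet below is a minimal sketch of pulling the receiver-side TCP throughput out of such a report. The sample report is a made-up placeholder and not a measurement from this card, and the helper name is ours.

```python
import json


def received_gbps(iperf3_json: str) -> float:
    """Return receiver-side throughput in Gbps from an iperf3 -J TCP report."""
    report = json.loads(iperf3_json)
    bits_per_second = report["end"]["sum_received"]["bits_per_second"]
    return bits_per_second / 1e9


# Placeholder report with only the fields this sketch reads.
# The numbers are illustrative and are NOT results from this review.
SAMPLE = json.dumps(
    {
        "end": {
            "sum_sent": {"bits_per_second": 9.42e9},
            "sum_received": {"bits_per_second": 9.41e9},
        }
    }
)

if __name__ == "__main__":
    print(f"{received_gbps(SAMPLE):.2f} Gbps")
```

With a real run, you would save the output of something like `iperf3 -c <server> -J` to a file and pass its contents to the function instead of the placeholder.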

Final Words

This is really going to do battle with the Realtek RTL8127 cards for bringing 10Gbase-T ports to more systems. YuanLey has a small Marvell AQC113 card. We did not grab a photo of this, but we received a low-profile bracket with ours that you can see in the listings. For under $55, this is a decent NIC.

This NIC was an emergency purchase when we needed a 10Gbase-T NIC quickly. There is still a gap in both capability, but also cost and power consumption of this versus data center 10Gbase-T NICs. Another thought that is worthwhile these days is that if it is only $20 or so to upgrade from a 5GbE adapter to this or $35 from a 2.5GbE NIC to this, then that offers more flexibility for the future.

Where to Buy

Here is an Amazon affiliate link to where we purchased ours.

FWIW: The Marvell Aquantia AQC113 is a 6-speed chip: 10M/100M/1G/2.5G/5G/10G. It supports Cat5e for all speeds except 10GbE, where they recommend Cat6.

NetBSD has supported it with the “aq” driver for 2 years. The Marvell drivers for FreeBSD are 7 years old and do not work in later versions.

A few have taken a stab at it:

https://bugs.freebsd.org/bugzilla/show_bug.cgi?id=282805

But the lack of in-kernel support is a common refrain from pfSense and OPNsense users.

“There is still a gap in both capability, but also cost and power consumption of this versus data center 10Gbase-T NICs.”

Can you please expand on this so that we can better understand the trade offs between this and a data center NIC?

Got a pair from Amazon UK, yes dinky little things and worked well as general purpose LAN cards.

I planned to use it as my WAN/LAN bridge 10G connection and it worked fine until it hit a wall of steady 10G worth of data, where it stutters and can’t maintain constant speed.

Sent them back and replaced with an ex-corporate Dell X550-T2L off eBay for half the price, no issues, just works.

I would love to see a comparison somewhere of the various options we have for both SFP+ and RJ45. What form factors, power consumption, PCI options, vendors, cost, driver support and so on. TBH, performance is the least interesting metric. I just assume they all perform the same unless someone says otherwise.

@Dan Wolfe – one trade off I see is that these controllers do not support RDMA over Ethernet, which is something one would expect to have in a datacenter setting. RDMA over Ethernet underpins such things as NFS over RDMA (very fast POSIX file ops), direct transfers between VRAM in GPUs in different hosts (much faster data transfer than if the CPU mediates), direct memory transfers from NVMe SSDs in one host to GPU VRAM in another host (much faster data transfer than if the CPU mediates).

@Dan Wolfe – another trade off is that these are single port cards. If they were dual port then you could use them with redundant Ethernet switches. This will improve availability if a switch dies or loses power, or a cable fails.

Does this NIC have ASPM support? Some other Aquantia cards do not support ASPM, which prevents the processor from entering low power states. Can you please start including ASPM support information in your NIC reviews?