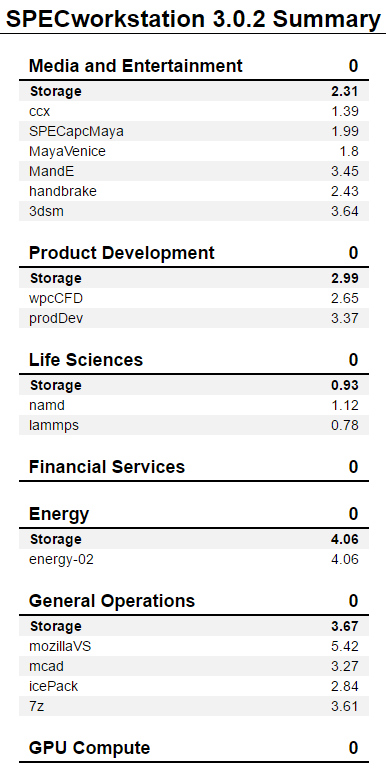

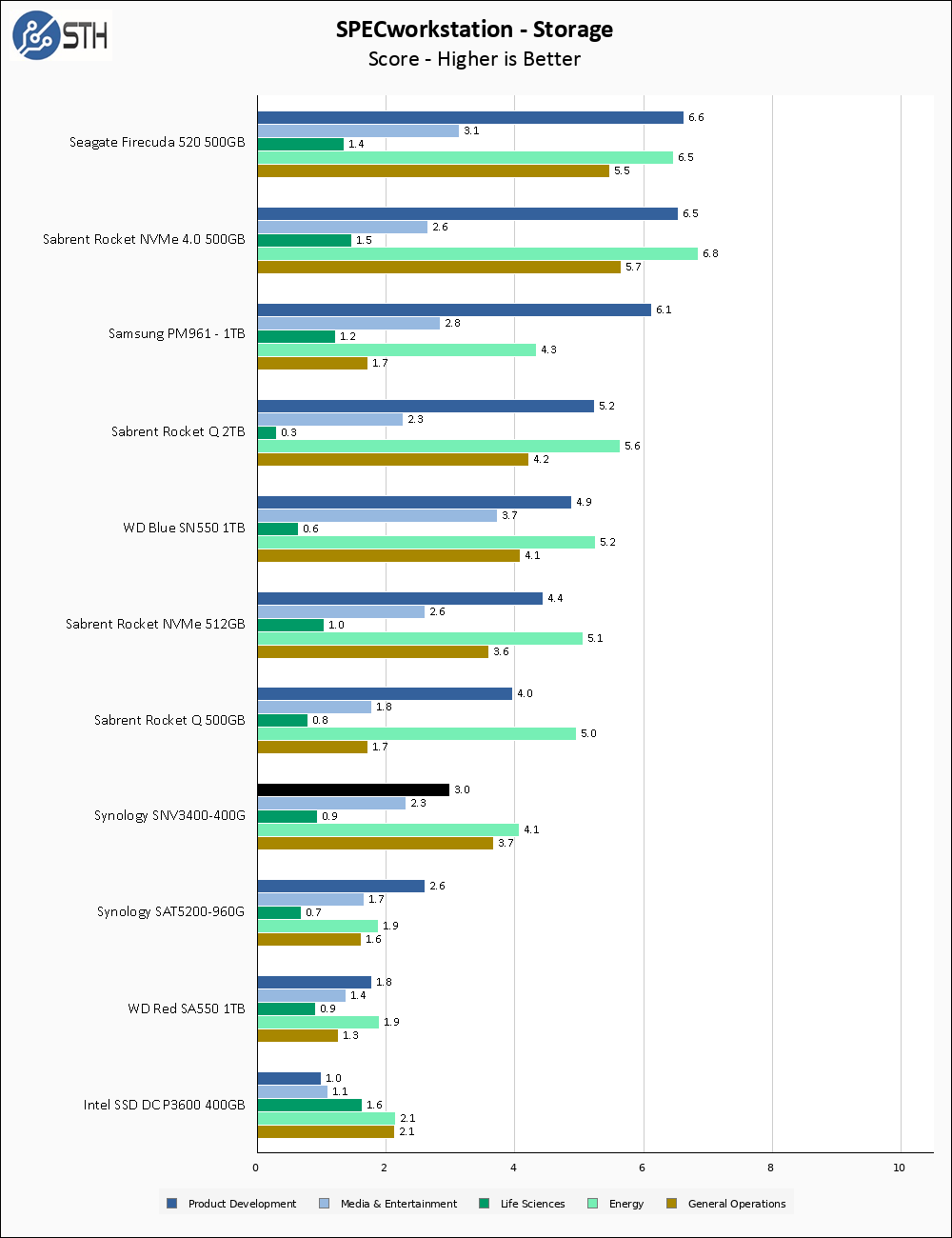

SPECworkstation 3.0.2 Storage Benchmark

SPECworkstation is an excellent benchmark for testing systems with workstation-type workloads. In this test, we ran only the Storage component, which comprises 15 separate tests.

The SNV3400-400G performs decently on the SPEC Storage benchmark, though it is certainly nothing to write home about. The drive clearly distinguishes itself from any of our SATA drives but is lost in the pack with the other NVMe units.

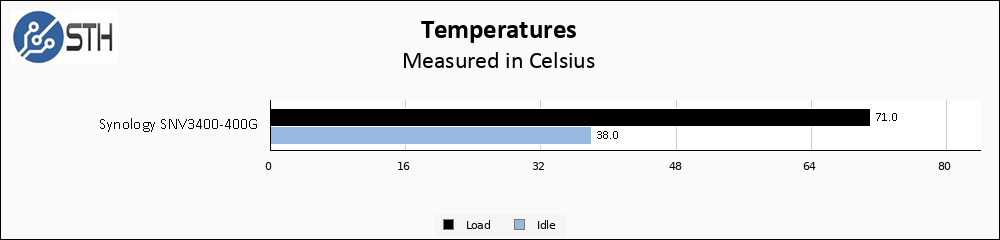

Temperatures

We monitored the idle and maximum temperature during testing with HWMonitor to get some idea of the thermal performance and requirements of the drive. Please keep in mind that our test bench is an open frame chassis in a 22C room, and is thus not representative of a cramped low-airflow case.

The SNV3400-400G runs pretty warm; in fact, it has the second-highest load temperature of any SSD I have personally tested. I would want to ensure decent airflow or install a heatsink if one would fit.

Final Words

The SNV3400-400G is another interesting drive from Synology. Retailing for around $165, it carries a healthy price premium over the WD Red SA500 500GB and sits at price parity with the larger and more durable Seagate Ironwolf 510 480GB drive. The SNV3500-400G, with hardware power loss protection onboard and otherwise equal specifications, is a mere $5 more on Amazon. Assuming your M.2 slot can handle the longer 110mm drive, the SNV3500-400G seems like it would be the better purchase.

Compared to the WD Red SA500 drives, the SNV3400-400G is a step up in performance and reliability. The large spare area on the Synology drive should give it better resistance to the degradation caused by write amplification than the SA500, which comes with a much lower percentage of overprovisioned space. Using the Seagate Ironwolf 510 480GB as a comparison point makes for a much harder case for the Synology SNV3400-400G; the Synology maintains a larger spare area than the Seagate drive, but has overall lower performance and rated endurance.
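To put rough numbers on the spare area comparison, here is a minimal Python sketch. It assumes, purely for illustration, that all three drives are built on 512 GiB of raw NAND; that figure is my assumption and not a vendor specification, and the helper name `overprovisioning_pct` is hypothetical. Under that assumption, spare area is simply the raw capacity left over after the advertised user capacity, expressed as a percentage of the user capacity.

```python
GIB = 2**30  # bytes in one gibibyte (how raw NAND is usually sized)
GB = 10**9   # bytes in one decimal gigabyte (how drives are marketed)

# Assumption for illustration only: not a figure from any datasheet.
ASSUMED_RAW_NAND_GIB = 512


def overprovisioning_pct(raw_nand_gib: float, user_capacity_gb: float) -> float:
    """Spare area as a percentage of the user-visible capacity."""
    raw_bytes = raw_nand_gib * GIB
    user_bytes = user_capacity_gb * GB
    return (raw_bytes - user_bytes) / user_bytes * 100


# Advertised capacities of the drives discussed in this review.
drives = {
    "Synology SNV3400-400G": 400,
    "Seagate Ironwolf 510 480GB": 480,
    "WD Red SA500 500GB": 500,
}

for name, capacity_gb in drives.items():
    spare = overprovisioning_pct(ASSUMED_RAW_NAND_GIB, capacity_gb)
    print(f"{name}: ~{spare:.1f}% spare area (assumed raw NAND)")
```

If the real raw NAND capacities differ, the absolute percentages change, but with equal raw NAND the ordering (Synology, then Seagate, then WD) follows directly from the advertised capacities.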

From my perspective, the SNV3400-400G is a harder sell than Synology’s other initial SSD offering, the SAT5200 line. The SAT5200-960G we reviewed was premium in almost every sense, while the SNV3400-400G is a much more ‘normal’ drive. One of the same arguments can still be made for this drive; if you want an all-Synology NAS to concentrate your troubleshooting to a single vendor, then the SNV3400-400G may make some sense. But as things stand today, I would instead vote for the SNV3500-400G and get the power loss protection if it were at all an option.

Would have been nice to see an MS SQL benchmark and to get the size of the PLP-protected cache, which could play a big part in SQL/ESX (synchronous I/O). Even though it only has 500-650 MB/s write performance, it might be faster than all other drives in a SQL environment?

Cal,

This drive does not have PLP.

Oops, my mistake, sorry.

But if it had, would you have investigated the cache size? And its performance gain on synchronous I/O (e.g. SQL and ESX)?

I’m bringing it up because it’s always missing from reviews of PLP drives, even though one could claim it’s one of the most important advantages of PLP-supported drives, in my opinion. Just something to consider for future reviews ;-)

It’s something I would like to look at, yes. But thus far I have only reviewed one drive with PLP and it was a SATA drive that was not targeting high performance. If/when I start reviewing more drives targeting that segment, my testing methodology will likely adapt.

Could the relatively poor write performance indicate the controller is not using unused cells as an SLC cache but is writing directly to multi-level cells? This avoids the need for frequent coffee breaks to compress the SLC to MLC, with associated throughput dropouts and reliability risk, since the data isn’t saved in its final form until long after the write cycle is claimed to be complete.

Benchmarking may need to be done with nearly-full drives to check this and other cell reuse cases. Understandably, few testers have time to write fresh multiples of the drive capacity for each test pass; certainly not if you want to publish before the product is EOL!