PCIe has been around for many years and went through a lull between PCIe Gen3’s introduction in the server space with 2011’s Sandy Bridge (Intel Xeon E5-2600 V1) and PCIe Gen4’s arrival with either 2019’s AMD EPYC 7002 “Rome” or 2021’s Ice Lake (3rd Generation Intel Xeon Scalable). That roughly decade-long stagnation has given way to more rapid advancements. This week the PCI-SIG started work on PCIe 7.0, or PCIe Gen7.

PCI-SIG Starts Work on PCIe 7.0 for 2025

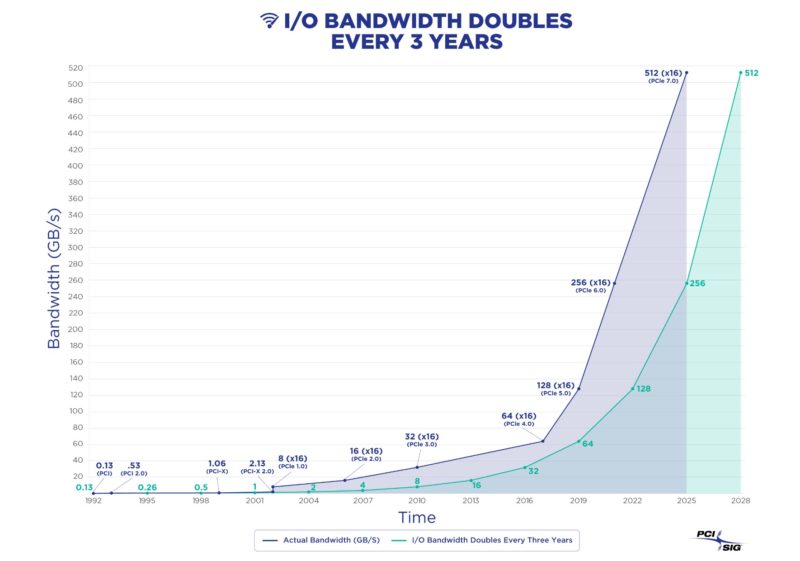

The backdrop of this announcement is that I/O bandwidth doubles on a predictable cadence. The chart below shows this increase, as well as the PCIe Gen3 stagnation, when the PCI-SIG did not push I/O bandwidth for years. Now the PCI-SIG is coming back with a vengeance, and there are a few big features planned for PCIe 7.0, which is set to be released in 2025 (chips usually follow specifications). Two of the biggest are PAM4 signaling, which carries over from PCIe 6.0, and backward compatibility.

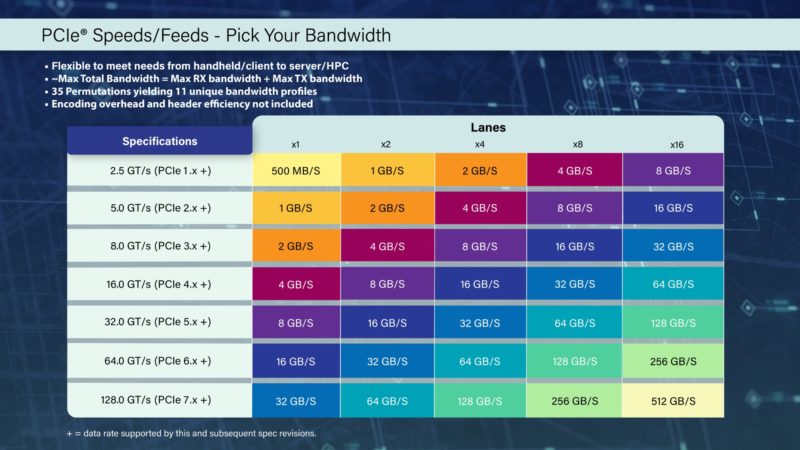

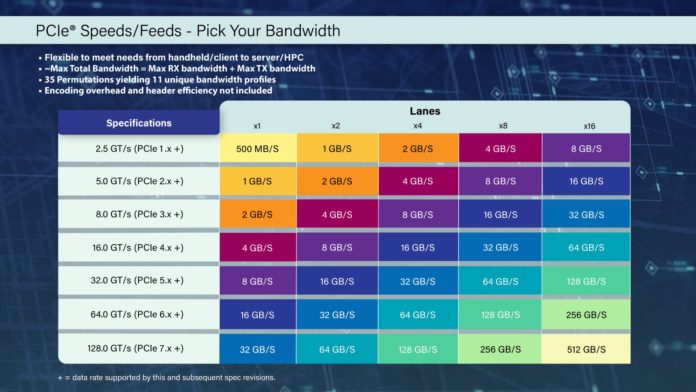

The PCI-SIG released this handy table that shows the bandwidth, excluding overheads, of different PCIe lane and generation combinations. Note that the figures are bidirectional totals (RX plus TX). While today’s servers can have up to 64GB/s on a PCIe Gen4 x16 connection, later in 2022 and into 2023 we will see PCIe Gen5 x16 at 128GB/s. With Gen7, we get 512GB/s on an x16. That allows for massive networking, storage, and, perhaps more importantly, faster chip-to-chip accelerator interconnects.
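For readers who want to reproduce the figures quoted above, here is a minimal Python sketch of the arithmetic. It assumes the nominal model that matches those numbers: roughly 0.25GB/s per lane per direction at Gen1, doubling with each generation, scaled by lane count, and ignoring encoding and protocol overheads. The function name `pcie_bandwidth_gbs` and its parameters are our own illustration, not anything from the PCI-SIG.

```python
def pcie_bandwidth_gbs(gen: int, lanes: int, bidirectional: bool = True) -> float:
    """Nominal PCIe bandwidth in GB/s, excluding overheads.

    Assumption: ~0.25 GB/s per lane per direction at Gen1,
    doubling with every generation (a rounded, nominal model).
    """
    if gen < 1:
        raise ValueError("PCIe generation must be 1 or higher")
    if lanes not in (1, 2, 4, 8, 16):
        raise ValueError("lanes must be one of 1, 2, 4, 8, 16")

    per_lane_one_way = 0.25 * 2 ** (gen - 1)  # GB/s, one direction
    total_one_way = per_lane_one_way * lanes
    # Headline figures usually sum RX and TX, hence the factor of two.
    return total_one_way * 2 if bidirectional else total_one_way


if __name__ == "__main__":
    for gen in (4, 5, 7):
        both = pcie_bandwidth_gbs(gen, 16)
        one_way = pcie_bandwidth_gbs(gen, 16, bidirectional=False)
        print(f"Gen{gen} x16: {both:g} GB/s total, {one_way:g} GB/s per direction")
```

Passing `bidirectional=False` gives the per-direction number, which is the figure to use when comparing against interfaces that are normally quoted one way, such as Ethernet.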

For servers, there are some huge implications. This will help to further disaggregate servers as the bandwidth between endpoints increases. We showed the Intel IPU and NVIDIA BlueField-2 DPU doing network storage, but in future generations, the pipes get much larger. Future CPUs will have hundreds of cores in just a few years, so components like network adapters will need to scale to deliver network and storage on the same bandwidth per-core basis.

Final Words

We are still many years from seeing PCIe Gen7 devices and CPUs since we are still awaiting PCIe Gen5’s introduction. There are some important aspects to keep in mind though. PCIe Gen7 will increase costs to deliver the signaling in terms of materials and likely power. Even if power per bit goes down, total power will likely rise. That will mean that high-end systems will cost more to purchase and operate than they do today, and we expect this is not by a small margin. As a counterbalance, the density of future servers must increase as well as the utilization of resources like memory and storage. Density and utilization will likely lead the charge to mitigate the higher cost of higher-performance systems. STH has a big focus on DPUs, because those will be key components of future servers to help drive future operating models towards these goals. That all starts with the PCI-SIG completing work on new specifications, like PCIe 7.0.

Cliff, the picture has a huge mistake and the whole article is… wrong :/

PCIe 1.0 x1 is 0.25GB/s not 500MB/s.

Whole table is wrong. A PCIe 4.0 x16 server can do 32GB/s, not 64…

Lol it’s RX+TX, ok it’s correct :) sorry, hard to see on mobile

Yeah, it is misleading to show the full-duplex speed summed up. The speed of Gigabit Ethernet is usually not shown as 2Gbit/s.

I read somewhere that their goal is to replace the CPU to main memory interface with PCIe. PCIe Gen7 has the potential to do it.

Well, DDR is a parallel interface, and SerDes would probably add too much latency, so it will compete with the PCIe 5-based CXL 2, which can do disaggregated memory, like DAS.