The Lenovo System x3650 M5 is a workhorse 2U server platform that can be customized for just about any general purpose workload. Over the course of testing, the x3650 M5 was a breeze to install and service. It ran fast, cool, and with relatively low power consumption. Where the server shines is its ability to be customized into a variety of different configurations from the same base unit.

Test Configuration

Our test system used a fairly common configuration:

- Server Platform: Lenovo x3650 M5 2U

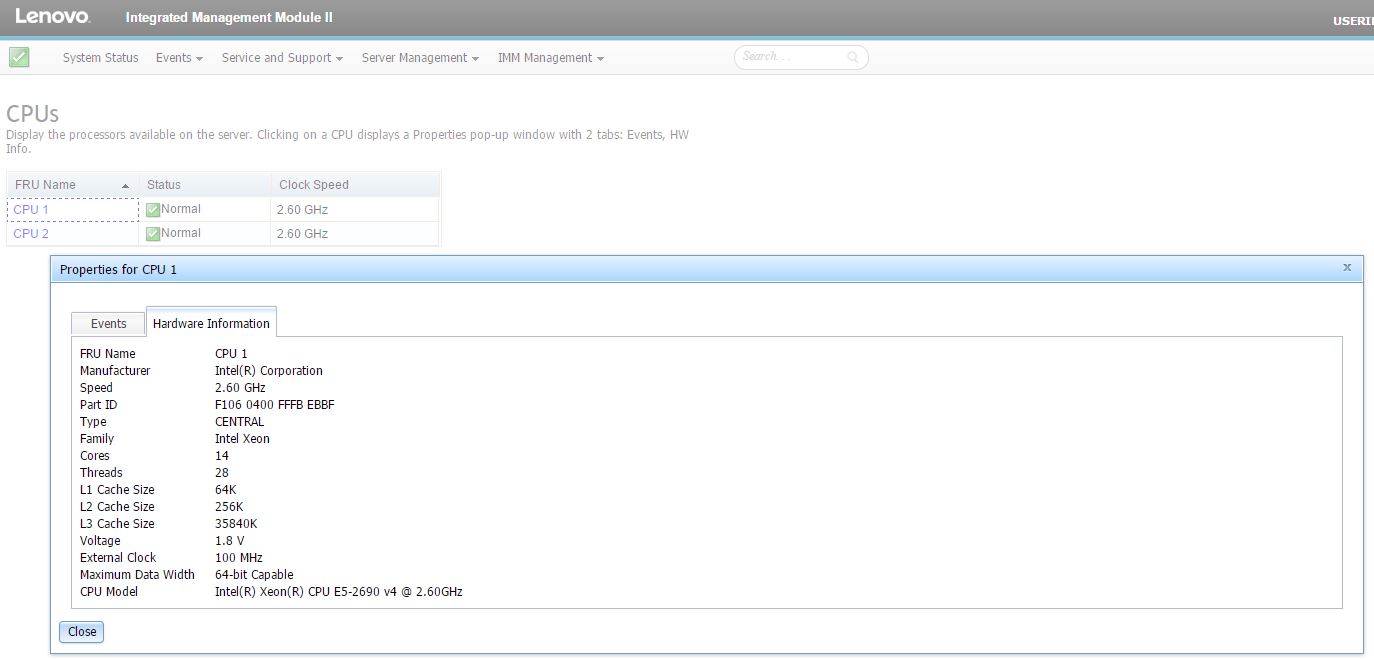

- CPUs: 2x Intel Xeon E5-2690 V4

- RAM: 128GB DDR4 RDIMMs (8 * 16GB)

- SAS SSD: 2x HGST 400GB SAS3 SSDs

- SAS Controller: Lenovo ServeRAID M5210

- Add-on Network Card Tested: Mellanox ConnectX-3 Pro EN (dual 40GbE)

- Operating Systems: Ubuntu Server 14.04 LTS, 16.04 LTS, CentOS 7.3, VMware ESXi 6.5

The Intel Xeon E5-2690 V4 is a high-end general purpose compute CPU with 14 cores / 28 threads. With architectural improvements, one can essentially replace two Intel Xeon E5-2690 V1 generation systems with a Lenovo x3650 M5 2U with two of these CPUs. Lenovo has a wide range of additional CPU options including both higher and lower-end parts.

Lenovo has a giant catalog of customization options for the server, too many for us to test. We suggest checking options or asking your sales rep if this server can be configured exactly as you need. In turn, we are going to make a few suggestions on configurations as we go through the review.

Meet the Lenovo System x3650 M5 2U Server

The Lenovo System x3650 M5 is a full sized 2U server. The front of the chassis can accept 16x 2.5″ front access bays (our review unit was configured with 4) and has a full complement of data center I/O for serviceability. There are also 24x 2.5″ and 12x 3.5″ front hot swap bay options.

In addition to the front drive bays, the rear is highly customizable. One has multiple I/O configurations both for PCIe cards (including GPUs) as well as rear storage.

The first impression of a server, actually racking the unit, was excellent. Lenovo’s tool-less rails are very easy to install. The total time to rack the Lenovo x3650 M5 was under 10 minutes from loading dock to powered on in the rack.

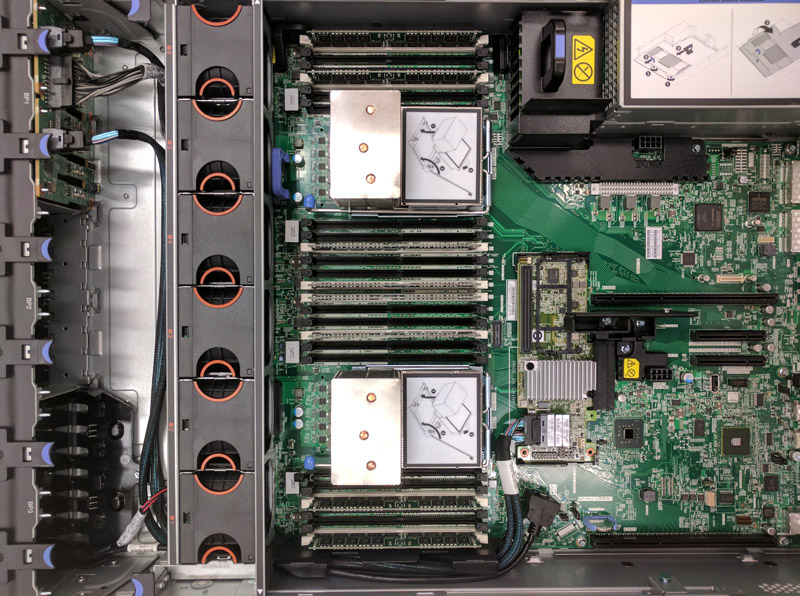

Inside the chassis, we can see tool-less servicing, great labeling, and a high level of expandability built-in. Airflow is front to back, cooling the CPUs first and then the add-on cards. You can see that there are spacers and large PCIe slots for horizontal PCIe card risers.

The Lenovo System x3650 M5 can handle two Intel Xeon E5-2600 V3/V4 CPUs. The ability to run three DIMMs per channel with four channels per CPU (24 DDR4 DIMMs total) means that you can run up to 1.5TB of RAM in the server. Many commodity servers sacrifice RAM capacity and can only house 8-16 DDR4 DIMMs, so this is great from Lenovo.
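The slot count and maximum capacity follow from simple multiplication. Here is a quick sketch of that arithmetic, assuming 64GB DIMMs. The per-DIMM size is not stated in this review; it is the size implied by reaching 1.5TB across 24 slots.

```python
# Memory topology from the review: 2 CPUs, 4 channels per CPU, 3 DIMMs per channel.
CPUS = 2
CHANNELS_PER_CPU = 4
DIMMS_PER_CHANNEL = 3

# Assumption: 64GB per DIMM, inferred from the 1.5TB maximum (not stated in the text).
DIMM_SIZE_GB = 64


def dimm_slots(cpus=CPUS, channels=CHANNELS_PER_CPU, dpc=DIMMS_PER_CHANNEL):
    """Total DIMM slots in the system."""
    return cpus * channels * dpc


def max_capacity_tb(dimm_size_gb=DIMM_SIZE_GB):
    """Maximum RAM in TB (1TB = 1024GB) with every slot populated."""
    return dimm_slots() * dimm_size_gb / 1024


if __name__ == "__main__":
    print(dimm_slots())       # 24
    print(max_capacity_tb())  # 1.5
```

By the same math, our 8 x 16GB test configuration fills one third of the available slots.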

Lenovo also has a storage expansion slot internally which does not use one of the rear I/O PCIe slots. This allows Lenovo to offer SAS3 to the front drive bays while not utilizing a valuable rear I/O PCIe slot. This increases the flexibility and density of the server. This is a great design feature.

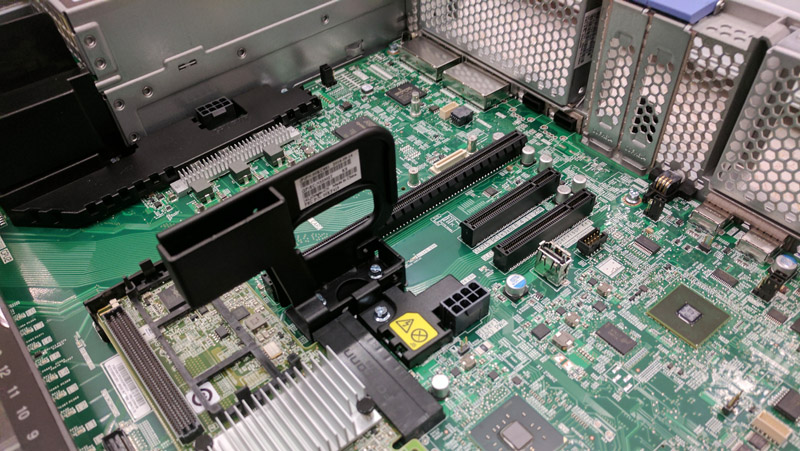

Lenovo can handle a myriad of PCIe expansion scenarios and includes two PCIe 3.0 x8 low profile slots as a default. Beyond this, Lenovo has different risers and options to add horizontally mounted PCIe slots as well as rear drive bays. Our test server did not include these features but you can see where they fit into the overall system. This flexibility allows enterprises to utilize a single base server for servicing multiple scenarios.

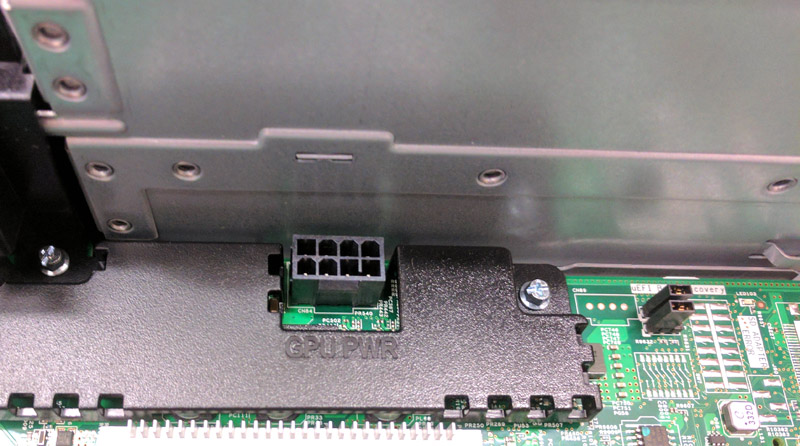

While there are great features like the internal USB Type-A header, there are also smaller features we wanted to point out. One of them is that there are two GPU power connectors in the chassis. We are seeing GPU compute (and add-on cards that require power) as a major trend in the industry. It is great to see this support in a mainstream platform.

Another small but nice touch is the clear labeling. Some vendors have motherboards that look like seas of white text and lines. The Lenovo labeling was clear. One example of this is to the right of the GPU connector, where you can see a UEFI Recovery jumper labeled in dark text with a white block around it, making it extremely easy to read even in the low light conditions found in data centers.

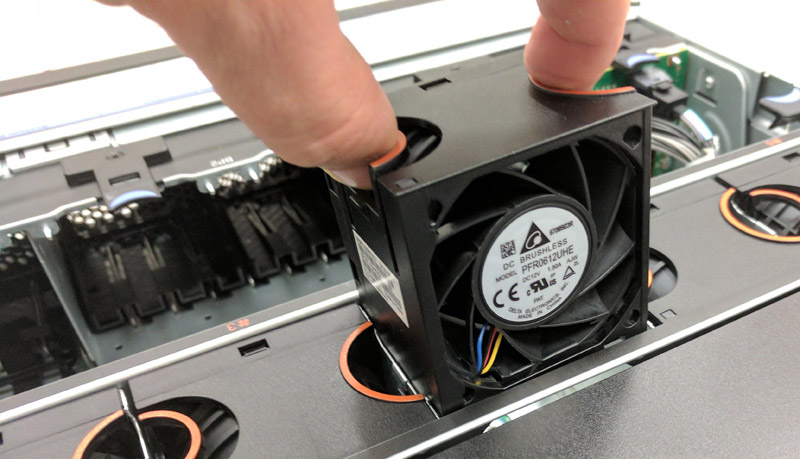

Servicing fans is extremely simple. The Delta units in our test system were easy to replace without tools and with one hand. If you service many systems, small features like these matter.

Our test system had a single PSU. We wanted to note that Lenovo is using 80Plus Platinum rated PSUs.

These higher efficiency PSUs lower operational costs by providing more efficient power to the system. In some circumstances, these can allow an extra system to be racked in a given per-rack power budget.

Lenovo System x3650 M5 Performance

We were able to run our standard benchmarks on the Lenovo x3650 M5. If you have an Ubuntu 14.04 LTS LiveCD, you can run a direct comparison to the Lenovo x3650 M5 following the four steps in the Linux-Bench how-to. Lenovo has a number of CPU options ranging from single CPU to dual CPU configurations so this is simply a snapshot of one of those options.

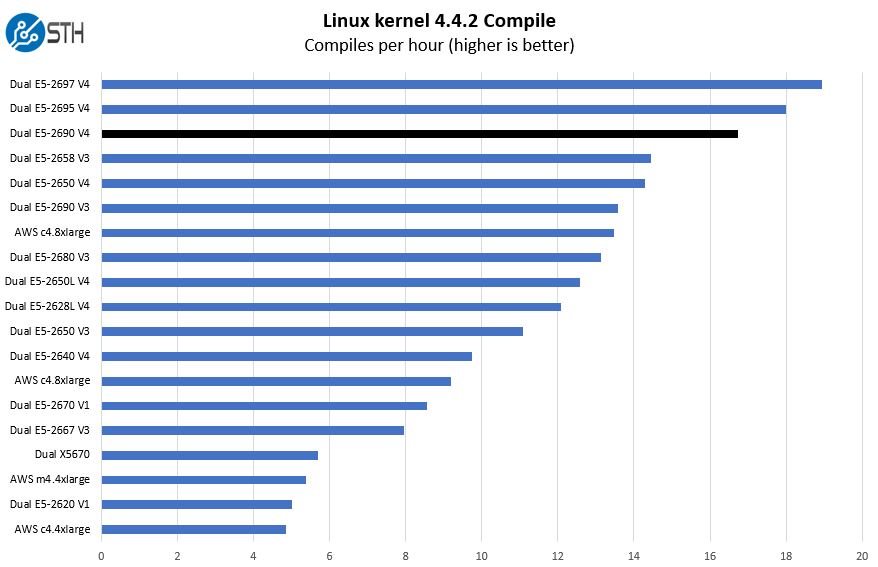

Python Linux 4.4.2 Kernel Compile Benchmark

This is one of the most requested benchmarks for STH over the past few years. We (finally) have a Linux kernel compile benchmark script that is consistent. Expect to see this functionality migrate into Linux-Bench soon (we are just awaiting the parser work on it). The task was simple: we have a standard configuration file, the Linux 4.4.2 kernel from kernel.org, and make using every thread in the system. We are expressing results in terms of compiles per hour to make the results easier to read.
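As a rough illustration of how a compiles per hour figure can be derived, the sketch below times a single `make` run using every thread and converts the elapsed seconds. This is not the STH benchmark script. The function names and the `src_dir` path are hypothetical, and it assumes a kernel tree with a `.config` already in place.

```python
import os
import subprocess
import time


def compiles_per_hour(elapsed_seconds):
    """Convert the wall-clock time of one compile into compiles per hour."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return 3600.0 / elapsed_seconds


def time_kernel_compile(src_dir):
    """Run `make` with one job per hardware thread and return elapsed seconds.

    src_dir is a hypothetical path to an unpacked, configured kernel tree.
    """
    jobs = os.cpu_count() or 1
    start = time.perf_counter()
    subprocess.run(["make", f"-j{jobs}"], cwd=src_dir, check=True)
    return time.perf_counter() - start


if __name__ == "__main__":
    # A compile that takes 120 seconds works out to 30 compiles per hour.
    print(compiles_per_hour(120))
```

Expressing the result as a rate means that higher is better, which keeps it in line with the other charts.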

As you can see, the Intel Xeon E5-2667 V4 provides very good performance for an 8 core part. The higher clock speed and large cache both help.

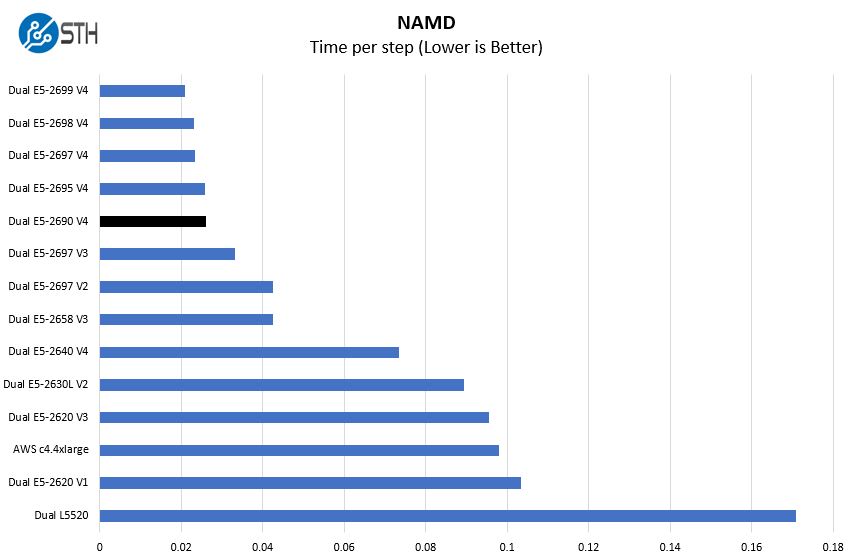

NAMD Performance

NAMD is a molecular modeling benchmark developed by the Theoretical and Computational Biophysics Group in the Beckman Institute for Advanced Science and Technology at the University of Illinois at Urbana-Champaign. More information on the benchmark can be found here. We may replace this or augment with GROMACS in the next-generation Linux-Bench as that test is currently running through regressions.

The key here is that these chips have a lot of performance. The test we started using with the Intel Xeon E5 V1 generation is starting to run into scaling walls with the Intel Xeon E5-2690 V4 generation.

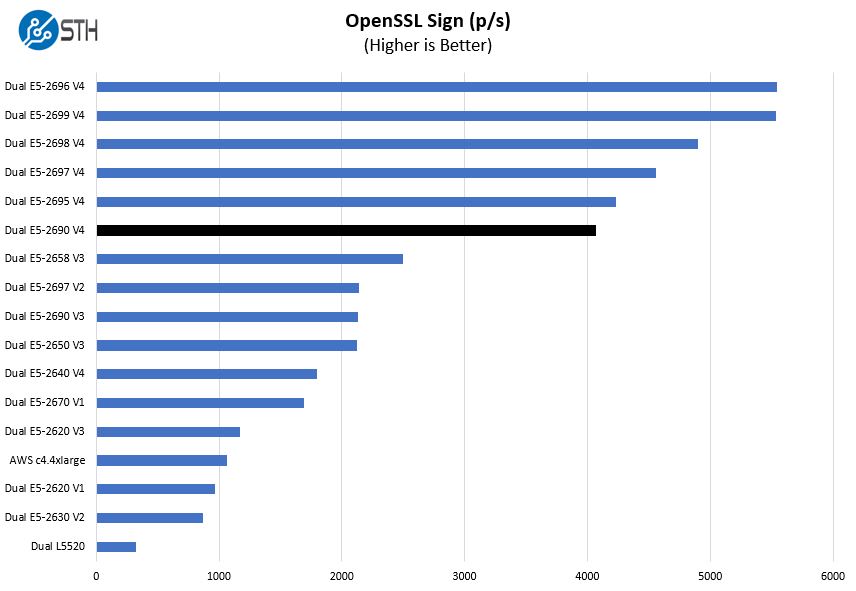

OpenSSL Performance

OpenSSL is widely used to secure communications between servers. This is an important library in many server stacks. We first look at our sign tests:

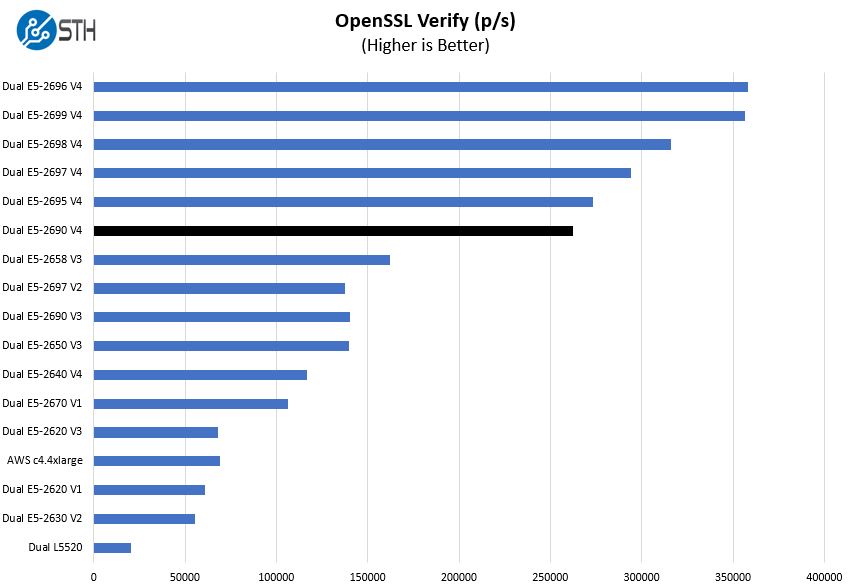

Here are the verify results:

As a foundational technology underlying many VPNs, web applications and other network related tasks, the OpenSSL speeds of the V4 processors are awesome.

Networking

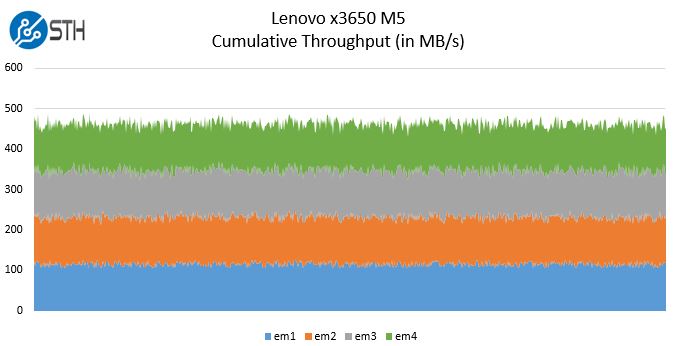

Regarding networking performance, the four onboard Broadcom NetXtreme 1Gbase-T ports performed as expected using iperf3.

We suspect that many Lenovo server buyers will opt for one of their higher-end 10GbE or faster networking options.
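For readers who want to repeat a test like this, iperf3 can emit its results as JSON with the `-J` flag. The sketch below pulls the receiver-side throughput out of such a report. The helper names are hypothetical, the server address is whatever iperf3 server you have running, and the sample dictionary is a hand-written stand-in rather than a measured result.

```python
import json
import subprocess


def received_gbps(report):
    """Return receiver-side throughput in Gbps from a parsed iperf3 JSON report."""
    return report["end"]["sum_received"]["bits_per_second"] / 1e9


def run_iperf3(server, seconds=10):
    """Run an iperf3 TCP client test against `server` and return the parsed report."""
    result = subprocess.run(
        ["iperf3", "-c", server, "-t", str(seconds), "-J"],
        capture_output=True,
        text=True,
        check=True,
    )
    return json.loads(result.stdout)


if __name__ == "__main__":
    # Hand-written stand-in for a report, not a measured result.
    sample = {"end": {"sum_received": {"bits_per_second": 5.0e8}}}
    print(received_gbps(sample))
```

A 1GbE port cannot exceed 1.0 on this scale, so anything close to that figure means the link is effectively saturated.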

Power Consumption

We used our calibrated Schneider Electric APC PDUs to measure the power consumption of the server. In the STH data center lab, we utilize a 208V circuit which is common in North American data centers.

- Idle Power Consumption: 79W

- 70% Load Power Consumption: 278W

- Maximum Observed Power Consumption: 384W

The key here is that this configuration can handle more drives and high-speed networking and still maintain a sub 2A power profile on a 208V circuit.
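The current draw at each measured point can be worked out from the figures above. This is a minimal sketch, assuming current is simply watts divided by volts and ignoring power factor:

```python
CIRCUIT_VOLTS = 208  # circuit voltage stated in the review

# Measured power figures from the review, in watts.
MEASUREMENTS_W = {"idle": 79, "70% load": 278, "maximum observed": 384}


def amps(watts, volts=CIRCUIT_VOLTS):
    """Approximate current in amps (I = P / V); power factor is ignored."""
    return watts / volts


if __name__ == "__main__":
    for label, watts in MEASUREMENTS_W.items():
        print(f"{label}: {amps(watts):.2f}A")
```

That works out to roughly 0.38A at idle, 1.34A at 70% load, and 1.85A at the maximum observed draw.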

Lenovo Management

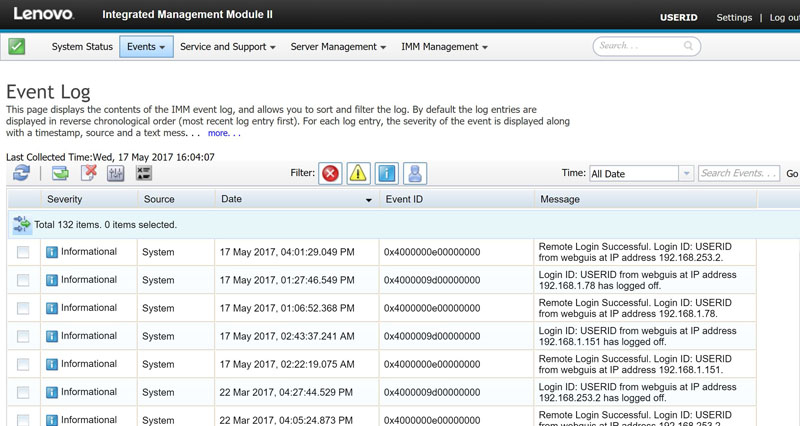

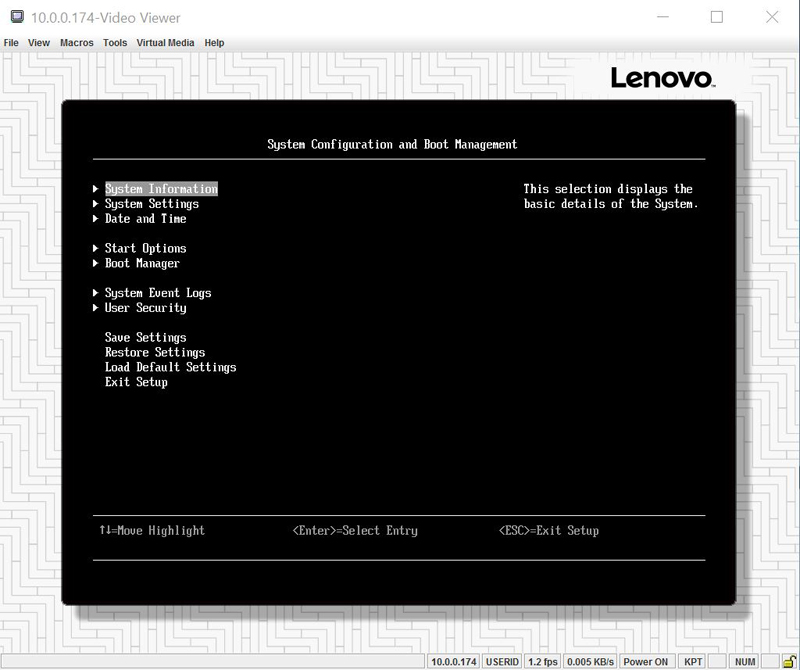

Lenovo Integrated Management Module II (IMM) is this generation’s Lenovo management interface. IMM provides services from monitoring to hardware inventory to iKVM access (with an upgrade key). These management interfaces have become an industry standard and Lenovo has what we would consider a higher-echelon implementation, far from the bare-bones implementations we see used by other vendors.

The IMM default login on our test system is:

- Username: USERID

- Password: PASSW0RD (note the zero)

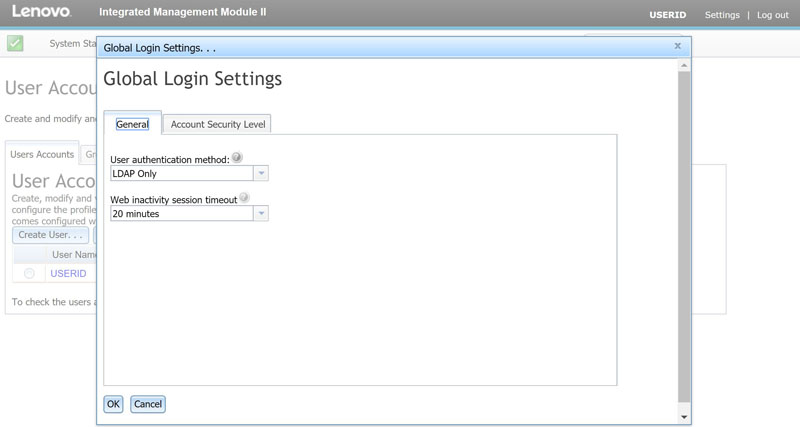

We highly suggest changing the default administrative logins immediately. IMM also includes the ability to integrate with directory services such as LDAP for authentication:

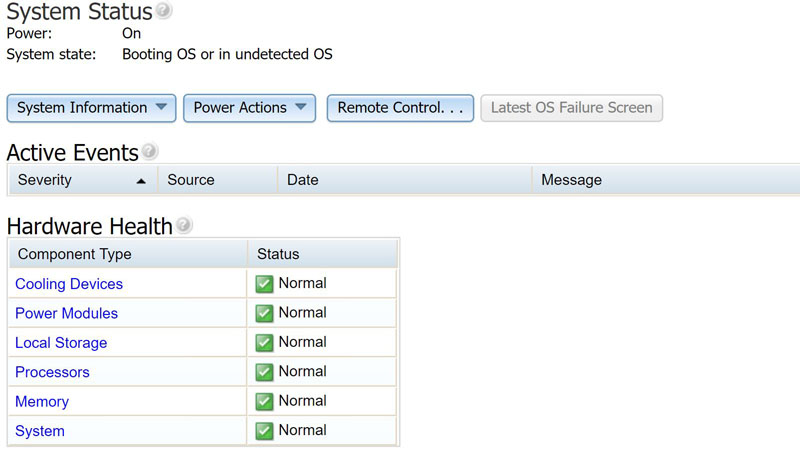

Lenovo also has the ability to provide hardware inventory information using IMM II.

As with most solutions, the Lenovo IMM can alert on a system component failure and is configurable to send notifications both to administrators and to Lenovo service.

Standard features such as power on/off/reset are available in the base IMM with upgrades enabling remote iKVM.

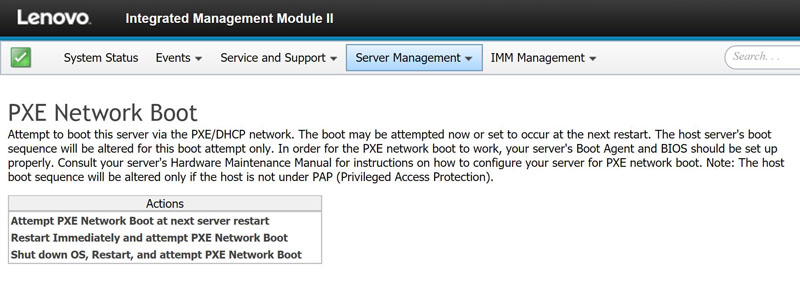

A handy feature that Lenovo has is the ability to force a PXE boot using the web management interface.

Many management interfaces from other vendors would require entering either the BIOS or a keystroke boot menu to force a PXE boot. This is one example of the value-add differentiation of the IMM solution.
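A one-time PXE boot can usually be requested out-of-band over IPMI as well. The sketch below is not the IMM web workflow described above. It assumes IPMI over LAN is enabled on the management controller and that `ipmitool` is installed on the admin workstation. The helper names and host address are placeholders.

```python
import subprocess


def pxe_boot_command(host, user):
    """Build an ipmitool command that sets PXE as the next boot device.

    -E tells ipmitool to read the password from the IPMI_PASSWORD
    environment variable instead of the command line.
    """
    return [
        "ipmitool", "-I", "lanplus", "-H", host, "-U", user, "-E",
        "chassis", "bootdev", "pxe",
    ]


def force_pxe_boot(host, user):
    """Request a PXE boot on the next restart (needs ipmitool and network access)."""
    subprocess.run(pxe_boot_command(host, user), check=True)


if __name__ == "__main__":
    # 192.0.2.10 is a documentation-only placeholder address.
    print(" ".join(pxe_boot_command("192.0.2.10", "USERID")))
```

Building the command separately from running it makes it easy to review exactly what will be sent to the management controller before touching a production system.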

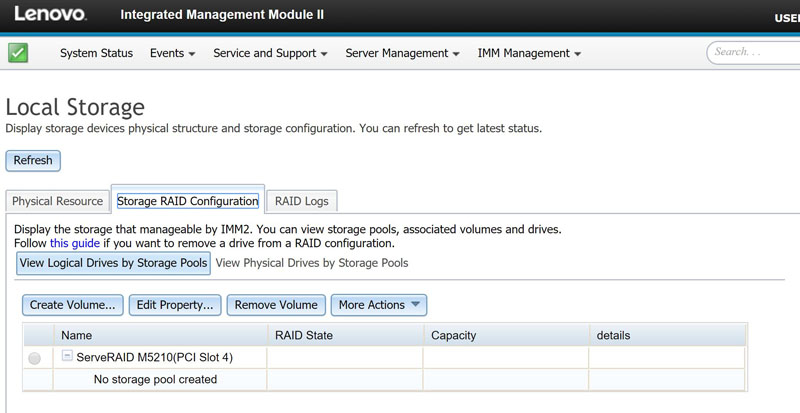

Another higher function ability that IMM has is the ability to manage storage configurations.

Here we can see the Lenovo ServeRAID M5210 add-on card that is installed in our test server. You can set RAID configurations from the management interface and do not need to go into another tool or the firmware to edit these.

With the IMM upgrade, you can get remote KVM functionality which allows for complete remote administration including remote media.

The one area we would have liked to see Lenovo IMM improve is in terms of speed. The interface is slightly slower to load than some other options. Generally, the BMC SoCs are based on low-power ARM chips and are meant to be accessed infrequently. We do hope that this is an area Lenovo looks into in the future.

Final Words

Lenovo has a great server on its hands with the Lenovo System x3650 M5. While we tested one configuration, we could have just as easily tested a new configuration every day for the last year and not have gotten through all of the major variation combinations. Beyond the flexibility, day-to-day operations are helped by clear labeling and remote management. These features ensure downtime is kept to a minimum. Both the performance and power consumption figures from the server were very good. Overall, this is an excellent server platform.