Running OpenClaw or AI Agents on Servers is the Way

Just about every server CPU company, including those trying to enter the space, is saying that the supply of server CPUs is becoming a challenge. While running OpenClaw desk-side might sound like a great idea at first (and to be clear, it is both useful and one of the most fun pieces of technology we have used in decades), the reality is that this needs to run on servers, and it needs a lot more scale. AI agents are proliferating at an enormous rate, making it impossible to order new boxes for OpenClaw, Turnstone, Hermes, or any other framework as fast as many organizations need them. Realistically, running AI agents on servers allows you to deploy OpenClaw en masse, quickly, so long as you have capacity. Also, as the industry evolves, the answer may be a different framework in a few weeks or months. Companies know how to deploy and orchestrate containers and VMs on servers at scale, so this is a very well-understood model, and one we have been talking about for nearly the entirety of ServeTheHome’s almost 17-year history.

Running on servers also allows companies to use familiar tools such as container backups, container storage, and virtual machines. It allows security and network policies to be applied to the fleet.

Even small items, like a more reliable network and power, are becoming important aspects of deployments. As AI agents, backed by larger models, have become more useful, they have also become increasingly critical. Folks online saying they are running prediction market bots desk-side will eventually experience a network or power outage, causing a big loss. Just like traditional financial institutions, they will be forced to look to higher-reliability hosting, which will be in data centers and on servers. That will lead to optimizing on latency, running larger compute resources, and so forth, just like large trading firms have been doing for a long time. Beyond the trading example, there is a reason critical business functions operate in environments with higher-reliability ECC memory, faster servers, larger and faster storage, faster networking, and more.

On the topic of servers, something we have running, but that is not quite ready to show yet, is a look at agentic performance. Where do AI agents, like OpenClaw, run best? Despite what we have seen some folks discuss, after a lot of profiling, we are quickly finding that the CPU side looks a lot like traditional compute scenarios. The LLM side is completely different. Running the DeepSeek-R1 671B model at FP16 was neat last year, but now we could not imagine doing that, given how the CPUs are being used in agentic workflows.

We will follow up with a lot more detail on this, but let me give you some high-level principles:

- P-cores tend to win. We have tested a number of Arm and x86 architectures at this point. If you believe that you are optimizing for high throughput and low latency, then big P-cores are the answer.

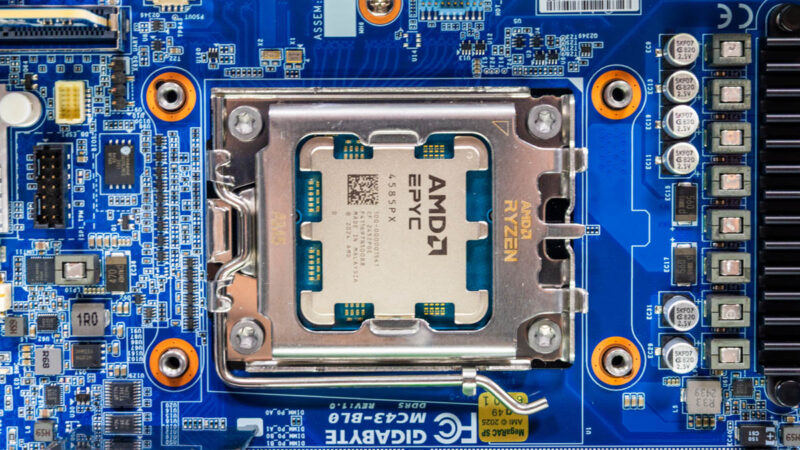

- E-cores tend to allow a higher core-to-memory ratio. That is actually one of the reasons this came up with AMD. We had been testing Zen 5 (Turin) and Zen 5c (Turin Dense) as part of this exercise. Zen 5c tends to give up cache per core, which is often cited, but it also usually gives up clock speed versus Zen 5. The advantage is that it retains P-core compute and is clocked higher than E-cores, such as those in the Intel Xeon 6 6700E series.

- SMT on x86 tends to lead to higher performance in most scenarios. Just like in traditional computing, there are cases where SMT is not beneficial. It is not as beneficial as adding another full core, but we have been seeing consistent benefits from it. Usually, the cases we tested that were poor for SMT are ones where there is inter-core or inter-thread communication that waits for the entire chip to be updated. Having more threads just means you can build larger inter-thread communication networks.

- Running a single agent on an entire chip is almost silly at this point. We had some strange results early because we were hitting highly serial parts of workloads and watching 127 of 128 cores just sit idle. On a modern server CPU, you want to be running multiple workloads or multiple agents on the same node. We even tested this down to some of the smaller nodes, like those with the AMD EPYC 8004 and Intel Xeon 6 SoC, and in most cases, it would be silly to run a single agent instance on those.

- Using containers and/or overprovisioning VM memory is extremely useful. These are basic concepts in server management, but in a world where memory is expensive and in short supply, these can offer a lot of savings.
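
To make the last two points a bit more concrete, here is a minimal back-of-the-envelope sketch in Python of how many agent instances might fit on one node. Everything in it is an assumption for illustration: the `Node` and `AgentProfile` types, the `agents_per_node` function, and all of the numbers are hypothetical placeholders, not measurements from our testing. You would want to replace them with figures from your own profiling.

```python
from dataclasses import dataclass


@dataclass
class Node:
    threads: int        # hardware threads (cores x SMT), hypothetical
    memory_gib: float   # physical memory installed in the node


@dataclass
class AgentProfile:
    avg_busy_threads: float  # threads one agent keeps busy on average
    reserved_gib: float      # memory configured for the agent's container/VM
    resident_gib: float      # memory the agent actually touches on average


def agents_per_node(node: Node, agent: AgentProfile,
                    mem_overcommit: float = 1.0,
                    cpu_target_util: float = 0.7) -> int:
    """Estimate how many agents fit on a node under simple assumptions.

    mem_overcommit is the ratio of configured memory to physical memory
    (1.0 means no overprovisioning). cpu_target_util leaves CPU headroom.
    """
    # CPU limit: fill the node only up to the target utilization.
    cpu_limit = int(node.threads * cpu_target_util / agent.avg_busy_threads)
    # Configured-memory limit: overcommit lets reservations exceed physical RAM.
    mem_limit = int(node.memory_gib * mem_overcommit / agent.reserved_gib)
    # Resident-memory limit: what is actually used must still fit in RAM.
    resident_limit = int(node.memory_gib / agent.resident_gib)
    return min(cpu_limit, mem_limit, resident_limit)


if __name__ == "__main__":
    # Made-up example: a 256-thread node with 512 GiB of memory.
    node = Node(threads=256, memory_gib=512)
    agent = AgentProfile(avg_busy_threads=1.5, reserved_gib=8, resident_gib=3)
    print(agents_per_node(node, agent))                      # no overcommit
    print(agents_per_node(node, agent, mem_overcommit=2.0))  # 2x overcommit
```

With these made-up inputs, configured memory is the limit without overprovisioning (64 agents), while at 2x overprovisioning the CPU headroom becomes the limit instead (119 agents). The point of the sketch is only that a single agent per node leaves most of the hardware idle, and that the memory reservation, not the cores, is often what caps density.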

Beyond performance, being able to run on more reliable infrastructure, having better monitoring, backups, and provisioning (we have seen a number of OpenClaw instances that have just been re-dos), putting firewalls around the instances, and so forth has helped a lot. For our readers, this is an opportunity to lead in an emerging field.

Final Words

A big reason for this article is that so many STH readers, folks I run into on a daily basis, friends in my personal life, and so forth have been coming to me asking about OpenClaw. I keep seeing pictures of folks running local models for increasingly business-critical tasks. In 40-50% of my conversations, I still need to step back and separate OpenClaw (the CPU side) from the LLM (the GPU side). Just seeing how useful this technology is, the pace of its adoption, and the relative speed at which it is becoming critical makes me think back to how computing evolved in the late 1990s and early 2000s, and the parallels to today.

The current market focus on server CPUs is warranted. The industry is moving in this direction. What will push this forward is that we are rapidly heading into an era where agents are talking to other agents. Some have this prototyped at a small scale. Some have been deploying it at a larger scale. While there are going to be speed bumps, the ability to scale the work being done by adding compute means that we are in for a period where compute becomes extremely important. That is what is driving the scarcity in compute, memory, and storage resources.

If you have not used OpenClaw or another AI agent platform yet, or have not had the “ah ha!” moment where you have it do something incredibly useful, I would strongly urge you to spend some time on that. The rate of change is amazing, so even if you tried a platform a few months ago, it is worth trying again now.