Scaling Up LPUs: The NVIDIA Groq 3 LPX Rack

While the theoretical background on NVIDIA’s use of LPUs is rooted in single processors, the real-world use of the technology is all about scale. NVIDIA now counts the LP30 LPU as one of its seven chips for the Vera Rubin era, but it did not license Groq’s technology in order to throw a single LPU in a DGX Station or NVL8 server. NVIDIA licensed Groq’s technology to build high-performance rack-scale solutions. So that is exactly where Groq’s LPUs are going: the big leagues.

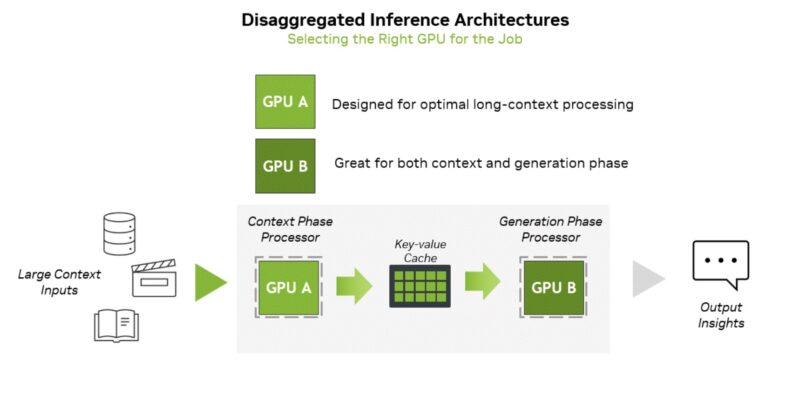

NVIDIA will be offering the NVIDIA Groq 3 LPX as an optional addition to Vera Rubin rack-scale configurations. If customers want to build a server cluster that can offer high single-user token rates and low-latency responsiveness, ideal for running agentic AIs that want to quickly chat amongst themselves, they can add some LPX racks to boost performance. NVIDIA is not prescribing a specific ratio of LPX racks to NVL72 racks. Ultimately, the right mix is going to depend on how much a customer values low-latency token throughput, and of course, how much they want to spend.

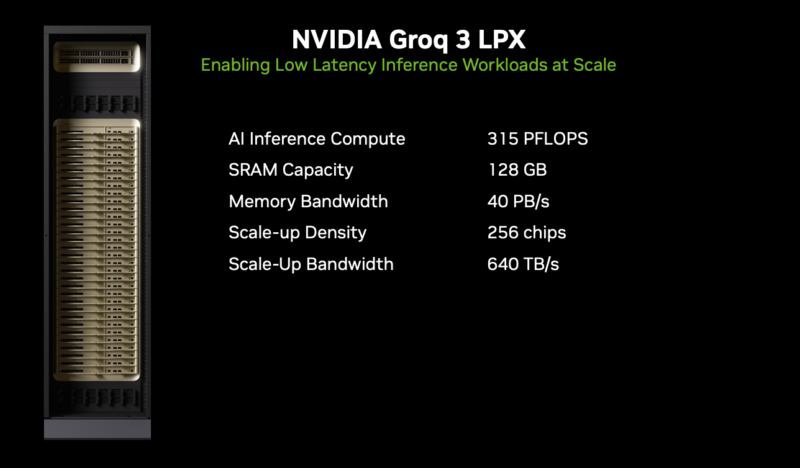

A single LPX rack, in turn, will comprise 256 LPUs, organized into 32 1U trays. This will give the aggregate LPX rack 128GB of SRAM capacity and some 315 PFLOPS of FP8 compute, which is still a rather tiny amount of memory and compute throughput relative to an NVL72 GPU rack, but it is enough to serve as the accelerator that NVIDIA needs. Instead of holding a giant model fully in-memory, the LPX rack can act as an ultra-fast draft model provider for the Rubin GPUs, which run the larger models out of their much larger memory. Indeed, it is this rack-scale implementation of LPUs that makes this strategy viable to begin with, as otherwise a handful of LPUs would not have nearly enough SRAM between them to store the kind of large models (and large context windows) that are in vogue these days.
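For a sense of what those rack totals mean per chip, here is a quick back-of-the-envelope sketch in Python. It only divides the rack-level figures quoted above by the LPU and tray counts. The decimal unit convention (1GB = 1000MB) and the function name are our own assumptions, and NVIDIA's official per-chip specifications may be rounded differently.

```python
# Rack-level figures quoted in the article.
LPUS_PER_RACK = 256
TRAYS_PER_RACK = 32
RACK_SRAM_GB = 128
RACK_FP8_PFLOPS = 315


def per_lpu_figures():
    """Derive rough per-LPU and per-tray numbers from the rack totals.

    Assumes decimal units (1 GB = 1000 MB); this is an illustrative
    estimate, not an official per-chip specification.
    """
    lpus_per_tray = LPUS_PER_RACK // TRAYS_PER_RACK
    sram_mb_per_lpu = RACK_SRAM_GB * 1000 / LPUS_PER_RACK
    fp8_pflops_per_lpu = RACK_FP8_PFLOPS / LPUS_PER_RACK
    return {
        "lpus_per_tray": lpus_per_tray,
        "sram_mb_per_lpu": sram_mb_per_lpu,
        "sram_gb_per_tray": sram_mb_per_lpu * lpus_per_tray / 1000,
        "fp8_pflops_per_lpu": fp8_pflops_per_lpu,
    }


if __name__ == "__main__":
    for name, value in per_lpu_figures().items():
        print(f"{name}: {value:.2f}")
```

Under these assumptions, that works out to 8 LPUs per tray, about 500MB of SRAM per LPU (4GB per tray), and roughly 1.23 PFLOPS of FP8 compute per LPU, which underscores why these chips only make sense for large models when they are ganged together at rack scale.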

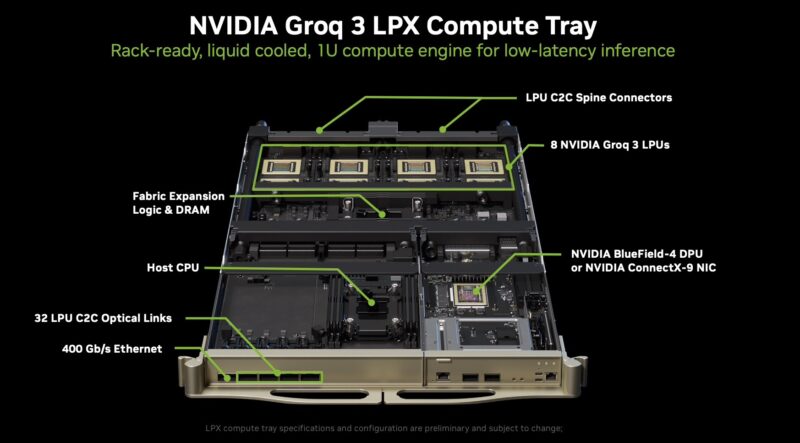

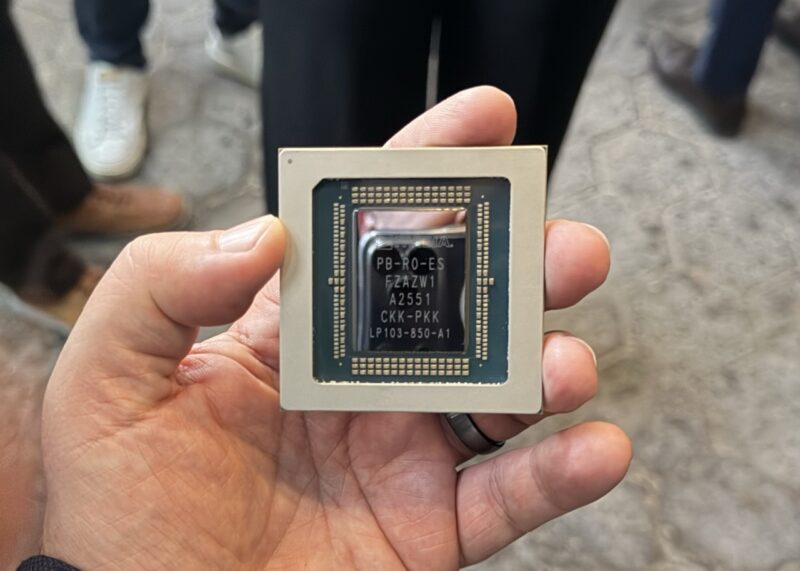

Each compute tray, in turn, is not all that different from an NVL72 compute tray. LPX compute trays house 8 LP30 LPUs, each with chip-to-chip connections to other LPUs within the tray, as well as the C2C spine connectors that link up the trays, allowing for all 256 LPUs to function as a single scale-up domain. Notably, each tray will feature an NVIDIA NIC (either ConnectX-9 or BlueField 4) and a separate host processor. Curiously, NVIDIA has not disclosed what the host CPU is at this time, though they have disclosed that it will have (up to) 128GB of DRAM attached to it. Patrick looked at this photo during the GTC keynote and immediately saw that the host CPU has a retention mechanism only employed by Intel's 4th Gen and 5th Gen Xeon Scalable and Xeon 6 CPUs.

NVIDIA notes that “LPX compute tray specifications and configuration are preliminary and subject to change,” so we will see what that CPU ends up being, since we can only identify the socket retention mechanism. While NVIDIA has been coy about when it started work on integrating Groq’s hardware, Groq used x86 host CPUs in its previous designs, so it would be easiest to keep that as an x86 processor in this generation.

With that said, NVIDIA has confirmed that the LP30 LPUs are being produced by Samsung, with previous announcements from Groq stating that they would be building their future products on Samsung’s SF4X (4nm) node family. This is notable since it means that NVIDIA does not have to spend its precious TSMC wafer allocations on producing LPUs.

A Quick Look at the Future

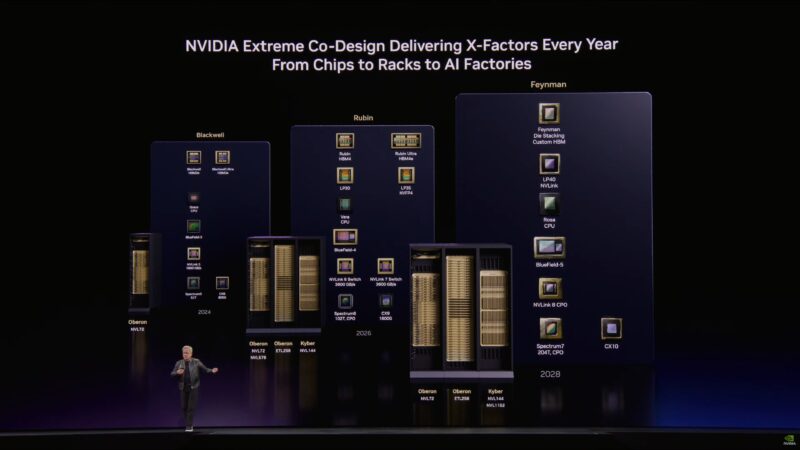

While NVIDIA is using off-the-shelf LPUs for their first generation of LPX racks, LPUs as a whole are not going to be a one-and-done chip at NVIDIA. LPUs have been added to NVIDIA’s long-term roadmap, with the company revealing this week that they are going to be developing and utilizing two additional generations of LPUs in the next two years.

In 2027, there will be a relatively quick follow-up LPU, the LP35. The quick turnaround belies the importance of this chip, because its marquee improvement is the addition of support for NVIDIA’s NVFP4 data format. That is NVIDIA’s low-precision format of choice for inference. With LP30 only supporting data types down to FP8, the initial generation of Groq hardware at NVIDIA will leave performance on the table by working with larger data formats than NVIDIA’s GPUs would otherwise use. NVFP4 stands to further reduce the pressure on the relatively small SRAM blocks on these LPUs. In essence, this is bringing many of the same benefits to LPUs that NVFP4 brought to NVIDIA’s GPUs with Blackwell.
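To put the SRAM pressure point in rough numbers, the Python sketch below estimates how many model weights would fit in a 128GB pool of SRAM (the LPX rack total quoted earlier) at FP8 versus NVFP4. It rests on several assumptions of ours: NVFP4 is modeled as 4-bit elements plus one 8-bit scale factor per 16-element block, per NVIDIA's public descriptions of the format; any per-tensor scale is ignored; and all of the SRAM is treated as available for weights, with nothing reserved for KV cache or activations. Whether a future LP35 rack keeps the same SRAM capacity is not something NVIDIA has disclosed, so this is an illustration of the format's effect rather than a product estimate.

```python
# Rack SRAM total from the article, in bytes (decimal GB assumed).
RACK_SRAM_BYTES = 128 * 10**9


def bits_per_weight(element_bits, scale_bits=0, block_size=1):
    """Effective storage per weight, amortizing a per-block scale factor."""
    return element_bits + scale_bits / block_size


def max_params(sram_bytes, bpw):
    """Upper bound on weights that fit, ignoring KV cache and activations."""
    return sram_bytes * 8 / bpw


# FP8: 8 bits per weight, no block scale modeled here.
FP8_BPW = bits_per_weight(8)
# NVFP4 (assumed layout): 4-bit elements + one 8-bit scale per 16 elements.
NVFP4_BPW = bits_per_weight(4, scale_bits=8, block_size=16)

if __name__ == "__main__":
    fp8 = max_params(RACK_SRAM_BYTES, FP8_BPW)
    nvfp4 = max_params(RACK_SRAM_BYTES, NVFP4_BPW)
    print(f"FP8:   {FP8_BPW} bits/weight, ~{fp8 / 1e9:.0f}B parameters")
    print(f"NVFP4: {NVFP4_BPW} bits/weight, ~{nvfp4 / 1e9:.0f}B parameters")
    print(f"Capacity gain: {nvfp4 / fp8:.2f}x")
```

With those assumptions, the effective cost drops from 8 bits to 4.5 bits per weight, so the same SRAM holds roughly 1.78x as many parameters, a bit short of the 2x that the raw 4-bit element size would suggest.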

That will be followed by LP40 in 2028. The marquee feature here is NVLink support, which would allow LPUs to plug into NVIDIA’s homegrown backhaul technology, rather than using Groq’s current technology. Whether that means using NVLink just to replace Groq’s LPU-to-LPU connections, or going further and using NVLink to directly connect LPUs and GPUs, remains to be seen. On the surface, it will be the first generation of the LPU architecture explicitly designed to better integrate with NVIDIA’s hardware ecosystem.

Adieu to Rubin CPX?

Amidst all of NVIDIA’s focus on LPUs across Vera Rubin racks and architectural roadmaps, there is one subject that NVIDIA has been noticeably silent on: Rubin CPX, NVIDIA’s previously planned solution to the inference decode divide.

As revealed by NVIDIA only back in September of 2025, Rubin CPX would be a GDDR7-backed Rubin GPU that would go into Vera Rubin NVL72 racks to handle the decode phase of token generation – the same role that Groq’s LPUs are being employed for now.

When asked about the future of Rubin CPX in a press Q&A session, NVIDIA’s answer more or less discounted Rubin CPX entirely. According to company representatives, NVIDIA is focusing on integrating LPUs (and the LPX rack) into the Vera Rubin platform to optimize decode, and that is it.

To be sure, NVIDIA has never officially declared Rubin CPX dead. Still, for a product that was introduced so recently, it has quickly become an apparent afterthought for NVIDIA, as they have decided to hitch the future of decode acceleration onto their recently licensed Groq LPU technology instead. Regardless, the end result is that Rubin CPX is noticeably absent from this year’s GTC.

Final Words

This is one of the more exciting announcements from this year’s GTC. NVIDIA has a new accelerator and has shown its willingness to get into a heterogeneous mix of silicon, even for running AI models. On the competitive front, for companies building custom silicon based on data-flow engines, NVIDIA now has a solution in that space. This is not a low-cost solution for running the largest models. Instead, it is being used as a point solution to accelerate a high-value workload and keep the GPUs doing what they do best. This is a big shift for NVIDIA, and it will be exciting to see how it evolves in future generations.