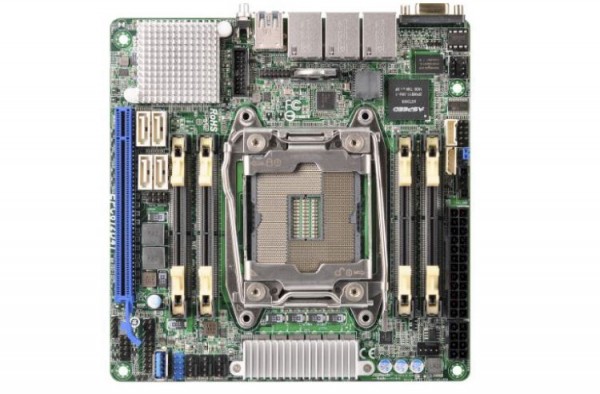

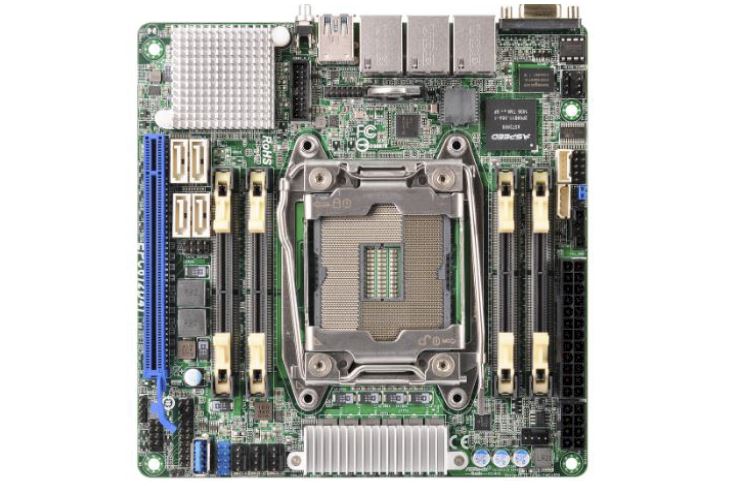

A few weeks ago we got word of a new motherboard coming from the ASRock Rack team. The ASRock Rack EPC612D4I was officially released today. The company has taken the large Intel LGA 2011-3 socket and crammed it onto a mITX motherboard. The net result is extremely high-density compute. ASRock Rack did make some trade-offs in this design, but it may prove to be one of the most distinctive mITX propositions out there. We should be getting our sample soon, along with some DDR4 SODIMMs to test with.

In terms of I/O, the ASRock Rack EPC612D4I has two Intel LAN ports, one Intel i210 and one Intel i217 (the latter used to save space since it requires a smaller PCB footprint), and a Realtek-based IPMI/out-of-band management port. ASRock Rack still fits a standard VGA connector on the rear I/O panel as well as two USB 3.0 ports, in addition to the internal Type-A connector.

The ASRock Rack EPC612D4I has four SATA III ports and a PCIe 3.0 x16 slot for storage/networking I/O expansion. Certainly, in such a small form factor, fitting the 10 SATA ports and 40 lanes of PCIe 3.0 that the platform can provide is not possible. One can also see the narrow ILM cooler mount and the four DDR4 SODIMM slots, both chosen to save board space. Right now DDR4 SODIMMs are still rather difficult to find. Case in point: an Amazon search for DDR4 SODIMM did not turn up any DDR4 results as of 29 April 2015.

ASRock Rack EPC612D4I Quick Specs

- Mini ITX 6.7″ x 6.7″

- Socket LGA 2011 R3 Intel Xeon processor E5-1600/2600 v3 series

- Supports Quad channel DDR4 2133/1866 ECC DIMM, 4 x SO-DIMM slots

- Supports 4 x SATA3 via C612

- Supports 1 x PCIe 3.0 x16

- Integrated IPMI 2.0 with KVM and Dedicated LAN (RTL8211E)

- Supports Intel Dual GLAN (Intel i210 + Intel i217)

Overall, the mITX space is getting much more interesting with the arrival of big Xeon E5 cores on this motherboard alongside the Xeon D motherboards. Needless to say, we have high expectations for the ASRock Rack EPC612D4I when it arrives in the lab and data center.

No 10 GbE? What a disappointment!

My thoughts exactly. Onboard 10GbE would give you the option to install an HBA in the PCIe slot.

Why do you need an HBA?

Just ditch your creepy FC SAN and take an Intel XL710-QDA2 with 2x 40 Gbit/s Converged Ethernet.

So you get 40 Gbit/s single-link performance and 64 Gbit/s total bandwidth (limited by PCIe 3.0 x8) which is more than a Dual FC16 + Dual 10 GbE can do.

He said HBA, not HCA. :)
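For anyone who wants to check the bandwidth figures quoted in the thread above, here is a rough back-of-the-envelope sketch. It assumes PCIe 3.0 signals at 8 GT/s per lane with 128b/130b encoding, and it uses the nominal naming rates for FC16 and 10 GbE (16 and 10 Gbit/s). Real-world throughput will be lower once protocol overhead is counted, so treat the numbers as upper bounds rather than measurements. The function and constant names are made up for illustration.

```python
# Back-of-the-envelope link bandwidth comparison (upper bounds only).
# Assumptions: PCIe 3.0 = 8 GT/s per lane, 128b/130b line encoding;
# FC16 and 10 GbE are counted at their nominal 16 and 10 Gbit/s.

PCIE3_GT_PER_LANE = 8.0        # gigatransfers per second, per lane
PCIE3_ENCODING = 128 / 130     # 128b/130b encoding efficiency


def pcie3_gbit_per_s(lanes: int) -> float:
    """Usable PCIe 3.0 bandwidth per direction, after line encoding."""
    return lanes * PCIE3_GT_PER_LANE * PCIE3_ENCODING


x8_slot = pcie3_gbit_per_s(8)      # a PCIe 3.0 x8 adapter such as the XL710-QDA2
x16_slot = pcie3_gbit_per_s(16)    # the board's single PCIe 3.0 x16 slot
fc_plus_10gbe = 2 * 16 + 2 * 10    # dual FC16 + dual 10 GbE, nominal rates

print(f"PCIe 3.0 x8 : {x8_slot:.1f} Gbit/s")
print(f"PCIe 3.0 x16: {x16_slot:.1f} Gbit/s")
print(f"2x FC16 + 2x 10 GbE (nominal): {fc_plus_10gbe} Gbit/s")
```

The 64 Gbit/s in the comment is the raw 8 x 8 GT/s figure; after encoding it comes out at roughly 63 Gbit/s, which is still above the 52 Gbit/s nominal total of the FC plus 10 GbE combination.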