In 2020, Arm is making rapid advancements in the server space, well beyond what we have seen in recent years. Aside from the obvious hardware advancements (which we will get into later in this article), the biggest piece of the puzzle here is arguably the maturity of the software ecosystem. Many workloads can now more or less seamlessly transition from running on traditional x86 hardware to running on Armv8 metal. In this article, we are going to explore the Arm opportunity at cloud service providers (CSPs).

Note on naming conventions: throughout this article, we will use x86 as a shorthand for x86_64, which is also known as amd64. In this context, it means traditional, Intel-compatible hardware. Armv8 stands for the relatively new Arm architecture, announced by Arm Holdings in 2011 and also capable of 64-bit memory addressing. Armv8 is also sometimes known as AArch64 or arm64. We need to keep things simple and standardize.
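As a minimal sketch of the naming note above, the aliases can be folded into the two labels used in this article. The function name `normalize_arch` and the `"other"` fallback are hypothetical choices made for illustration; only `platform.machine()` is a real standard-library call.

```python
import platform

# Aliases listed in the naming note; the output labels "x86" and "Armv8"
# are this article's shorthand, not official identifiers.
_ALIASES = {
    "x86_64": "x86",
    "amd64": "x86",
    "aarch64": "Armv8",
    "arm64": "Armv8",
}


def normalize_arch(name: str) -> str:
    """Map a machine string to the article's shorthand, or 'other'."""
    return _ALIASES.get(name.strip().lower(), "other")


if __name__ == "__main__":
    # platform.machine() reports the host's machine type as a string,
    # whose spelling and case vary by operating system.
    print(normalize_arch(platform.machine()))
```

Lower-casing before the lookup matters because the same architecture is reported with different spellings and capitalization depending on the operating system.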

Arm software ecosystem maturity

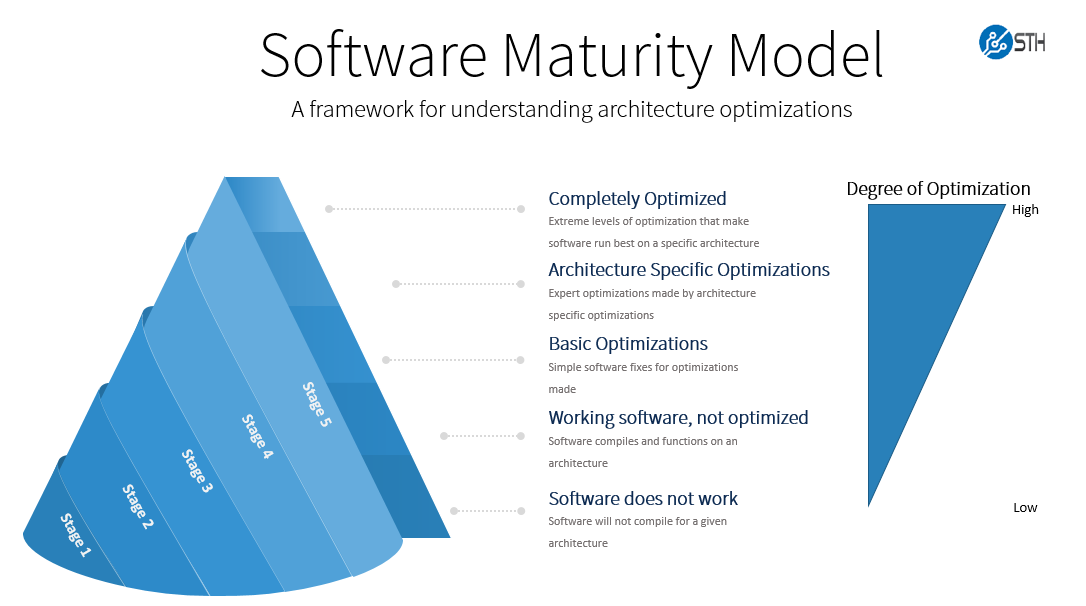

Hardware and software engineers often see their jobs as different, but when bringing up a new architecture the two feed each other: better software justifies more investment in hardware, and better hardware means more people are willing to invest in software. Having an Arm server hardware discussion necessarily means that we need to discuss the software side. Indeed, that has been perhaps the most visible area of advancement over the past half-decade.

Arm’s software ecosystem has not hit an x86 level of maturity, despite what many of its promoters argue. In many cases there may still be a bit of software engineering required, like tweaking compilation flags or container configuration files. In turn, these small tweaks often mean incremental testing effort. As a result, swapping from x86 to Arm has a switching cost. It may be a small cost, but it is usually non-zero. While closed-source software is another story, the open-source software underpinning popular workloads at CSPs is rapidly gaining maturity. For example, nginx, a popular web server, has done a great job developing its Arm solution.

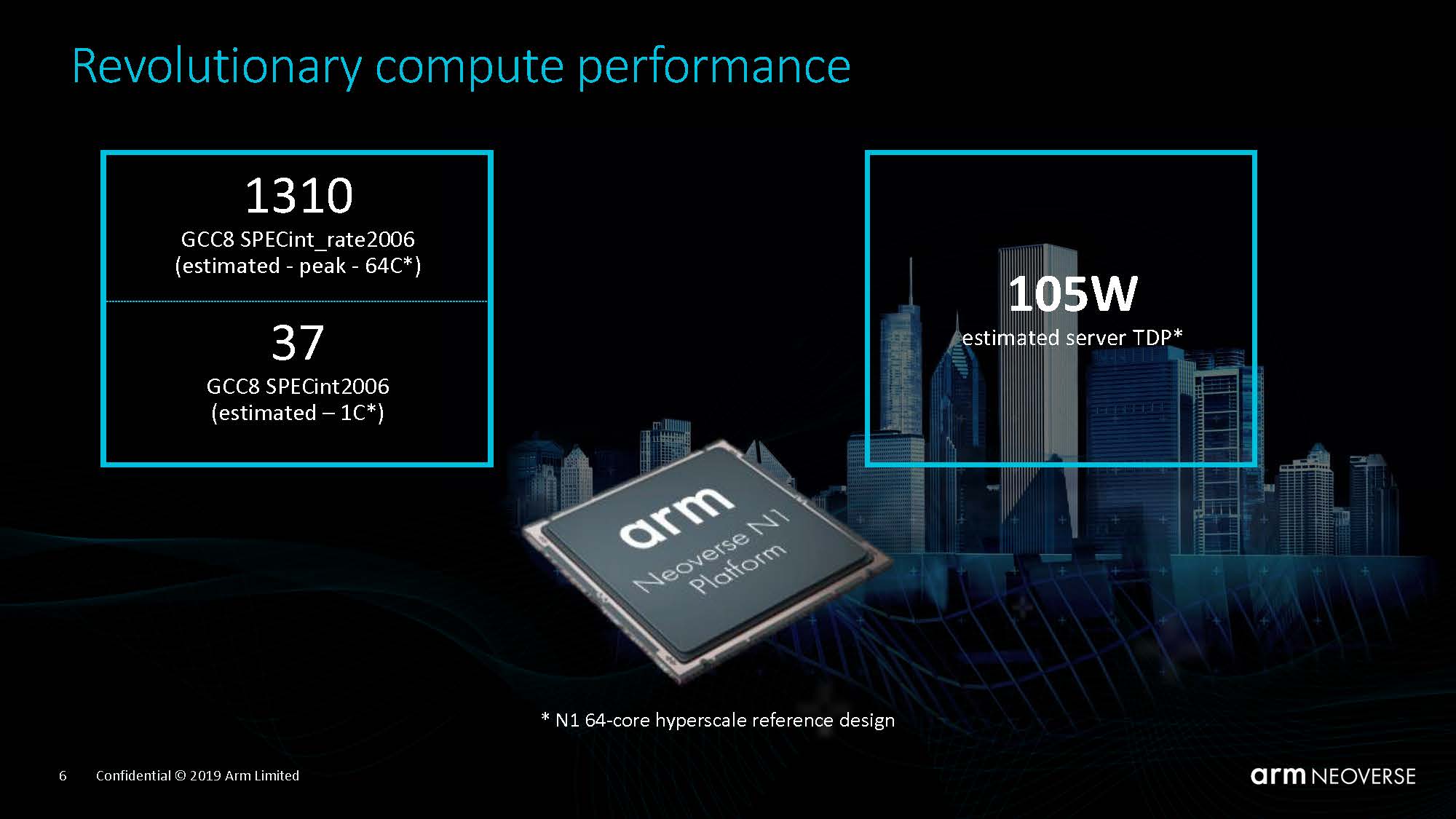

Armv8 software maturity has come a long way in the last few years. All popular Linux distributions, like Debian, Ubuntu, RHEL, Fedora, CentOS, and openSUSE, now offer an Armv8 spin just as naturally as they offer a traditional x86 spin. This was not the case a few years ago. We covered this topic in February of last year when Arm launched its Neoverse line of server CPU cores. Other vendors are moving in this direction as well: we have seen even legacy platforms such as VMware ESXi venture into 64-bit Arm support.

A second very important milestone in the Arm software ecosystem came last year when NVIDIA announced support for CUDA-on-Arm at SC19. This was essentially a very potent signal to the whole industry that Armv8 was soon to be considered on par with x86 for running GPU-accelerated workloads. AI is a pioneering workload in this field. NVIDIA offering its full AI stack on Arm to build parity with x86 is a big deal, since it is an offering for the future of compute, not the past.

New Armv8 hardware changes the game

Even though having software that ‘just works’ is essential, one also needs hardware. In this regard, the Arm world certainly does not disappoint and is as vibrant as ever. Three main actors stand out: Ampere Computing, Marvell, and Amazon’s AWS. (Huawei in China as well, although we are going to set that aside for now.) You will of course immediately note that while the first two are CPU vendors, the third is a Cloud Service Provider (CSP) and a traditional Intel Xeon customer.

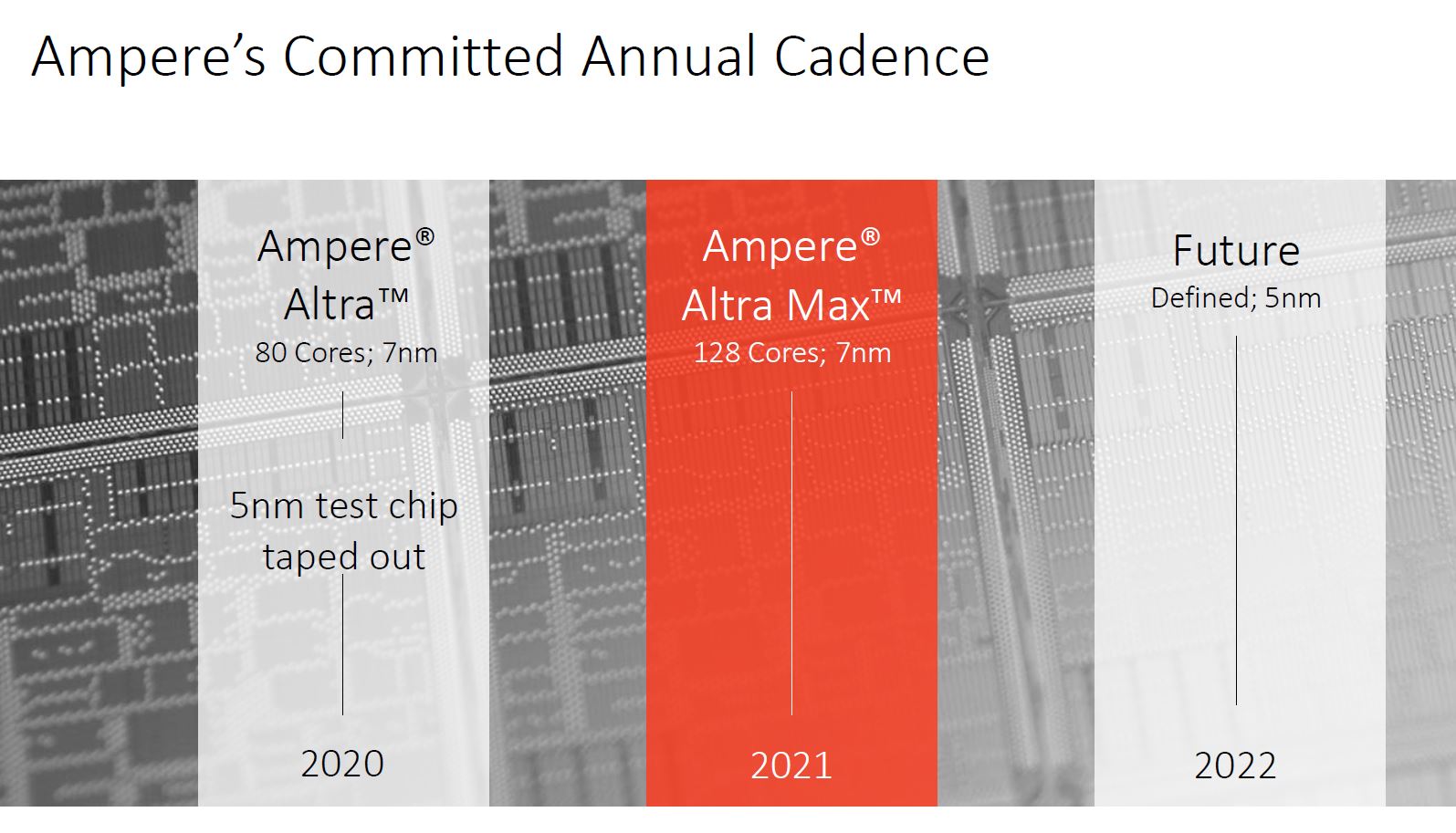

Ampere Computing has had its 7nm Altra sampling for a few months now. As a reminder, it is certainly a solid 2P capable offering on paper, with up to 80 N1 cores at up to 3.3GHz, 8 DDR4-3200 channels, and 128 PCIe Gen4 lanes (192 in a 2P configuration), all for a TDP of up to 250W. Ampere Computing has already announced next year’s follow-up, the Altra Max, which stays on 7nm and pushes the core count to 128. In 2022, the company plans to release its 5nm Siryn follow-up.

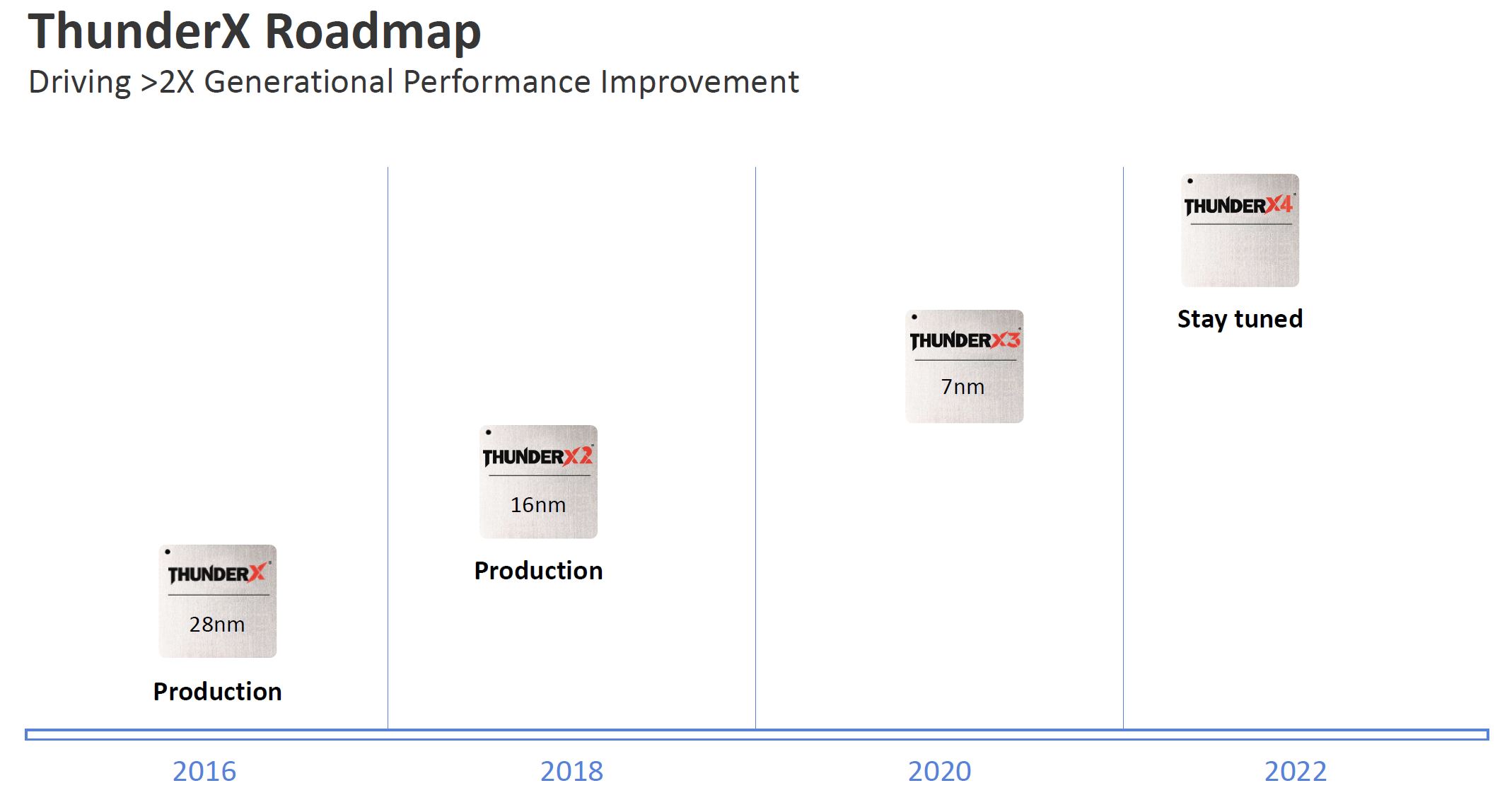

Marvell, for its part, finalized its acquisition of Cavium in 2018 and has arguably been the first Arm CPU vendor to properly enter the data center market. We have covered the ThunderX2 extensively since its 2018 launch, and the ThunderX before it. Marvell’s counterpart to the Ampere Altra is the ThunderX3, due to become available this year. It is also a 2P capable 7nm part with eight DDR4-3200 channels, but it is limited to 64 PCIe Gen4 lanes per CPU (128 in a 2P configuration). Where it differs greatly from Ampere’s offering is in the choice of CPU cores. Instead of going for “off the shelf” Arm N1 cores, Marvell is using custom Arm cores offering 4-way Simultaneous Multi-Threading (SMT). The ThunderX3 will be offered with up to 96 cores (and thus 384 threads) per socket. This is a big differentiating point for Marvell. Just like everyone else, Marvell has a roadmap, and a ThunderX4 is slated for 2022. It will likely be a 5nm part.

August 18 2020 Update: at Hot Chips 32, Marvell gave more details on its ThunderX3, and revealed that in its high core count configuration it is indeed a dual die solution: each single die supports up to 60 cores, and up to 96 cores in a dual die configuration. At the end of the day, it doesn’t change the high level specifications (up to 96 cores per socket, and 2P capable), but it is interesting to note that Marvell is already going for a multi die package for this generation. Ampere, for its part, will wait for the 5nm generation (2022) to abandon the monolithic die approach.

And then there is Amazon. Having bought the Annapurna Labs CPU design house in 2015, and after testing the waters with a first-generation Graviton at the end of 2018 (and selling its Arm chips for applications such as switches), Amazon announced the general availability of its Graviton2 based instances in March of 2020. Just like the Ampere Altra, the Graviton2 is a Neoverse N1 based design, manufactured at 7nm. It is a single-socket design, offering 64 cores, 8 DDR4-3200 channels, and 64 PCIe Gen4 lanes. The fact that AWS decided to build its own CPUs is important because a traditional Xeon/x86 customer is now taking that work in house. It also allows software development to happen at a more rapid pace, by showing strong backing for Arm infrastructure and offering it to a wide array of customers. The industry is changing, and long gone are the days when only hardware vendors could decide its fate.

| | Ampere Computing | Marvell | Amazon AWS |
| --- | --- | --- | --- |
| CPU name | Altra | ThunderX3 | Graviton2 |
| Instruction set | Arm v8.2+ | Arm v8.3+ | Arm v8.2+ |
| Manufacturer and node | TSMC 7nm | TSMC 7nm | TSMC 7nm |
| 2P capable | yes | yes | no |
| Number of cores | up to 80 | up to 96 | 64 |
| Core type | Arm N1 | custom | Arm N1 |
| SMT | no | 4-way | no |
| CPU base clock (2) | (1) | 2.2GHz | 2.5GHz |
| CPU turbo clock (2) | up to 3.3GHz (1) | up to 3GHz | N/A |
| L3 cache | 32 MB | ? | 32 MB |
| L2 + L3 cache | up to 112 MB | ? | 96 MB |
| Memory controller | 8x DDR4-3200 | 8x DDR4-3200 | 8x DDR4-3200 |
| Memory support in 1P | 4 TB | probably 4 TB | 2 or 4 TB? |
| PCIe Gen4 lanes in 1P | 128 | 64 | 64 |
| PCIe Gen4 lanes in 2P | 192 | 128 | N/A |
| TDP | up to 250W | up to 240W (2) | ? |

(1) Ampere’s documentation calls this “sustained turbo”, but presumably this can be treated as a kind of base clock, as all cores should be able to sustain said clock while fully loaded. To be confirmed in STH testing.

(2) These specifications are to be confirmed as the chips become commercially available and may change as the parts transform into shipping products.

Looking at the above table, a few things come to mind. First of all, the industry as a whole seems to be settling on a few “standard” specifications. Each of these three chips comes equipped with a memory controller supporting eight channels of DDR4, just like AMD’s EPYC line and Intel’s upcoming 10nm Ice Lake Xeon line. Likewise, 250W+ seems to be the new upper bound for acceptable maximum TDP per socket. A quick look at AMD’s and Intel’s 2020 shipping and roadmap offerings confirms this. Arm chips are looking to keep core counts high and TDPs low.
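To make the shared specifications concrete, here is a rough sketch that derives two numbers from the table: hardware threads per socket, and theoretical peak memory bandwidth. The `Socket` class and its field names are hypothetical, and the bandwidth figure assumes the usual 64-bit (8 byte) data path per DDR4 channel. It is a paper ceiling, not a measured result.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Socket:
    name: str
    cores: int          # maximum cores per socket, from the table
    smt_ways: int       # 1 means no SMT
    ddr4_channels: int  # memory channels per socket
    mt_per_s: int       # DDR4-3200 means 3200 mega-transfers per second

    def threads(self) -> int:
        # Hardware threads are cores multiplied by the SMT width.
        return self.cores * self.smt_ways

    def peak_mem_gb_s(self) -> float:
        # Assumption: 8 bytes moved per transfer on each channel.
        return self.ddr4_channels * self.mt_per_s * 8 / 1000


SOCKETS = [
    Socket("Altra", 80, 1, 8, 3200),
    Socket("ThunderX3", 96, 4, 8, 3200),
    Socket("Graviton2", 64, 1, 8, 3200),
]

for s in SOCKETS:
    print(f"{s.name}: {s.threads()} threads, "
          f"{s.peak_mem_gb_s():.1f} GB/s peak per socket")
```

Because all three parts use eight DDR4-3200 channels, the theoretical memory ceiling per socket is identical. The differences show up in how many cores and threads share it.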

Amazon designed the Graviton2 only for internal use, so it is a simpler design focused on 1P operation. Note that Ampere Computing and Marvell opted for different solutions to enable 2P operation: Ampere uses 32 (CCIX enabled) PCIe Gen4 lanes per processor for communication between the two sockets, whereas Marvell uses a dedicated interface. Enabling 2P operation complicates the design quite a bit, and as it does not have to cater to any CPU buyer’s needs but its own, Amazon went for simplicity here. This may of course change with a future generation. It also, in some ways, validates AMD’s vision for single-socket EPYC.
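The 2P lane counts quoted in this article follow from simple arithmetic, sketched below. The helper name `usable_lanes_2p` is hypothetical, and the model assumes that lanes reserved for the socket-to-socket link are simply subtracted from each processor's total.

```python
def usable_lanes_2p(lanes_per_cpu: int, link_lanes_per_cpu: int) -> int:
    """PCIe lanes left for devices in a two-socket system.

    link_lanes_per_cpu is the number of PCIe lanes each processor gives
    up for the inter-socket link (0 when a dedicated interface is used).
    """
    return 2 * (lanes_per_cpu - link_lanes_per_cpu)


# Ampere Altra: 128 lanes per CPU, 32 of them used for the CCIX link.
print("Altra 2P:", usable_lanes_2p(128, 32))

# Marvell ThunderX3: 64 lanes per CPU, dedicated inter-socket interface.
print("ThunderX3 2P:", usable_lanes_2p(64, 0))
```

This is why the Altra goes from 128 lanes in 1P to 192 rather than 256 in 2P, while the ThunderX3 simply doubles from 64 to 128.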

Next, we are going to explore this decision of off-the-shelf or custom Arm CPU cores.

And then comes RISC-V. Or venerable POWER. Both open architectures now.

I believe that when ARM/POWER/whichever other architecture releases true PC-class machines for developers to use, x86 will see the most serious threat it has ever encountered. At the moment, real developer machines for these kinds of architectures either do not exist or are hard (or very expensive) to buy. Raspberry Pi-style computing can only go so far. But two major things are coming, the first from Apple and the second from AWS or other cloud providers. For the former, I am not really sure how big the impact will be because of the quite closed nature of said machines. However, if Linux can be loaded onto an ARM-based MacBook or Mac Pro, then it is going to be a very serious step forward. For the latter, I believe that it is a half-step, but an important one, as it creates market demand for ARM software solutions and provides incentives to developers.

@Andreas

Yeah, Apple going ARM isn’t going to move the needle much since MacOS doesn’t really show up in the datacenter, and Linux doesn’t support Apple hardware very well.

I agree, desktop ARM boards are an important evolutionary step. x86 and Linux snuck into the datacenter via cheap, good enough desktop boards. Operations folks got comfortable running x86 Linux servers for their own personal stuff.

It still remains to be seen if ARM will create a solid, modular platform like x86.

X86 is x86 is x86. The x86 ecosystem is all fairly symmetric, and x86 is built for horizontal integration.

The ARM ecosystem, on the other hand, has been an asymmetric mess, which is kind of its strength, and the ARM ISA is designed for vertical integration.

If the ARM vendors can’t deliver an x86 like experience, the ARM platform is only viable for customers willing to burn money on vendor locked hardware, or CSPs like Amazon, Microsoft, or Google who have custom technology stacks they rent to people.

KarelG

RISC V is a novelty – that’s all and no one outside of an already IBM shop will even consider POWER.

World will be X86_64 for the foreseeable future – ARM will remain niche.

Perhaps the shift to ARM with MacBooks helps, if enough developers use them. I think it will be a major problem for developers when, for example, their containers stop working or just run awfully slow due to emulation.

Without a good ARM laptop running Linux (or Windows even), I think ARM is facing an uphill battle.

In the early ’80s, the PC was a novelty (touted by DEC, Wang, IBM themselves). In the early ’90s, Linux was just a hobby (according to Linus himself). At the turn of the century, Amazon and cloud computing were novelties, and social media was a novelty for teenagers to have a little fun. If RISC-V is a novelty, I wonder whether it will repeat a similar history.